Building a Roadmap: Your 2026 Guide to Growth

Stop building features nobody uses. Learn the step-by-step process for building a roadmap that directly connects customer feedback to revenue & business goals.

The roadmap meeting often fails before anyone opens the deck.

Sales wants the feature tied to a late-stage deal. Support wants relief for the issue generating the most tickets. Engineering wants to clean up the architecture everyone has been ignoring. Leadership wants a marketable launch this quarter. Product sits in the middle, trying to turn competing opinions into one plan.

That’s how a lot of teams end up building a roadmap. The loudest argument wins. The highest-ranking person gets extra weight. The most recent customer anecdote becomes a priority. Then everyone acts surprised when the roadmap turns into a list of disconnected promises.

At a fast-growing SaaS company, that approach breaks quickly. You can’t afford to spend a quarter on work that feels important but doesn’t move churn, expansion, retention, or adoption. You also can’t keep telling customers you’re “listening” if your roadmap is still driven by internal volume instead of customer signal.

The shift that matters is simple to describe and hard to implement. Stop treating feedback as votes. Start treating it as evidence. Support tickets, sales calls, onboarding friction, usage gaps, and renewal objections all contain signal. The job of building a roadmap is turning that signal into a sequence of bets tied to business outcomes.

Beyond the Opinion-Based Roadmap

Most opinion-based roadmaps share the same symptoms. Teams confuse urgency with importance. They mistake stakeholder confidence for customer truth. They fill a quarter with output and later ask whether any of it changed the business.

I’ve seen the pattern enough times to know what it looks like in practice. A roadmap item gets added because a prospect asked for it loudly. Another stays because an executive mentioned it twice. A platform fix gets delayed because it doesn’t demo well. By the time the quarter ends, the team shipped a lot and learned very little.

What breaks first

The first thing that breaks isn’t delivery. It’s trust.

Engineering stops believing priorities are stable. Customer-facing teams start making promises outside the roadmap because they don’t trust the roadmap to reflect what customers need. Product gets trapped in constant re-explanation.

The roadmap stops being a decision tool and becomes a political artifact.

That’s a dangerous place to operate from. Teams start optimizing for internal agreement instead of market impact.

What a better roadmap changes

A better roadmap doesn’t remove debate. It makes the debate useful.

Instead of asking, “Who wants this most?” ask different questions:

- What customer problem keeps showing up across channels?

- Which problem is tied to churn, stalled expansion, or deal friction?

- What’s the expected business outcome if we solve it?

- What is the true cost, risk, and dependency footprint?

- What are we explicitly not doing because the return is weaker?

That shift matters because roadmaps work best when they create organizational alignment, not just visibility. Research on roadmap frameworks notes 30-50% improvements in org-wide prioritization, and companies with mature roadmaps see 35% faster feature delivery and 22% higher customer retention when the roadmap ties work to strategic investments and resource constraints (dscout research roadmap).

The operating principle

When building a roadmap, the standard should be simple. Every major item should connect to a measurable business outcome and a real customer problem. If it can’t, it belongs in discovery, not on the roadmap.

That’s the difference between a roadmap that calms the room for an hour and one that helps the business grow.

Defining Your North Star and Strategic Goals

A roadmap without a destination becomes a feature inventory. It looks busy. It sounds thoughtful. It rarely creates an advantage.

The starting point is not the backlog. It’s the business objective. If leadership says the company needs stronger enterprise expansion, better retention, or more adoption in a strategic segment, product has to translate that into a product outcome the team can influence.

Start with business intent, not feature ideas

The easiest mistake is taking a company goal and immediately turning it into a list of requests.

“Improve user experience” becomes ten UI ideas. “Win more enterprise deals” becomes a security checklist. “Reduce churn” becomes a vague push to make onboarding better. None of those are roadmap anchors. They’re brainstorming prompts.

Use a tighter sequence instead:

- State the business objective clearly. For example: increase enterprise market share, reduce churn, or improve the expansion motion.

- Name the customer behavior that must change. If churn is the concern, ask what behavior predicts it: low activation, weak usage depth, repeated support friction, or stalled admin setup.

- Turn that behavior into a product outcome. This is where product earns its keep. Not “ship a dashboard,” but “help new admins reach first value faster” or “remove the workflow gap that blocks multi-team rollout.”

- Attach measurable OKRs. Analytics8 notes that organizations aligning a data strategy roadmap with measurable OKRs and quarterly reviews can achieve up to 5-6x higher ROI (Analytics8 on developing a data strategy roadmap).

That last point matters because many teams still treat goals as slogans. If the roadmap isn’t tied to measurable outcomes, prioritization becomes subjective again.

Write goals that force trade-offs

A useful goal closes doors. It tells the team what matters now and what can wait.

Weak goal:

- Improve onboarding

Strong goal:

- Reduce early customer friction in the setup flow so new accounts reach value faster

Weak goal:

- Build better reporting

Strong goal:

- Help account owners identify usage risk early enough to prevent avoidable churn conversations

The wording matters because it changes what the team proposes. Vague goals attract feature sprawl. Sharp goals narrow the field.

Practical rule: If three different teams can interpret the goal in three different ways, it isn’t ready for roadmap planning.

Use a North Star that product can influence

Not every important company metric should become the product team’s North Star. Revenue matters, but revenue is downstream. Product needs a metric close enough to user behavior that the team can act on it consistently.

That’s why many teams pair a business goal with a product-level North Star and a handful of supporting metrics. If you need a clean way to think about that relationship, this breakdown of the North Star metric (https://www.sigos.io/blog/north-star-metric) is useful because it frames the metric as a decision tool rather than a vanity KPI.

A good setup usually has three layers:

- Company outcome: retention, expansion, or strategic segment growth.

- Product North Star: a behavior signal that reflects delivered value.

- Guardrails: adoption quality, support burden, or delivery constraints that keep the team honest.

Build the roadmap around themes, not scattered asks

Roadmaps improve when they stop presenting isolated features and start showing themes. That doesn’t mean hiding detail. It means organizing work around coherent outcomes.

A strong quarterly roadmap often groups work under themes such as:

- Activation and onboarding

- Retention and churn risk

- Expansion enablers

- Platform reliability and data quality

- Operational advantage for internal teams

Visual structure helps here. Mature roadmaps increasingly combine people, process, and technology so resource constraints are visible early, not discovered halfway through delivery. If you’re setting up that kind of planning motion, this AI transformation roadmap template is a useful reference because it shows how to connect strategic intent, workstreams, and execution timelines without collapsing everything into a flat task list.

The workshop that works

A goal-setting workshop doesn’t need to be complicated. It needs the right participants and the right questions.

Keep the room small enough to decide. Product, engineering, design, a revenue leader, and someone close to customer reality from support or success are usually enough.

Use prompts like these:

- Which business objective matters most this planning cycle?

- Which customer behavior is most tightly connected to that objective?

- What evidence do we have?

- What would count as a measurable win by the end of the quarter?

- What work is attractive but off-strategy right now?

When those answers are clear, building a roadmap gets easier. Not easy. Easier. The team has a frame for saying yes, no, and not now.

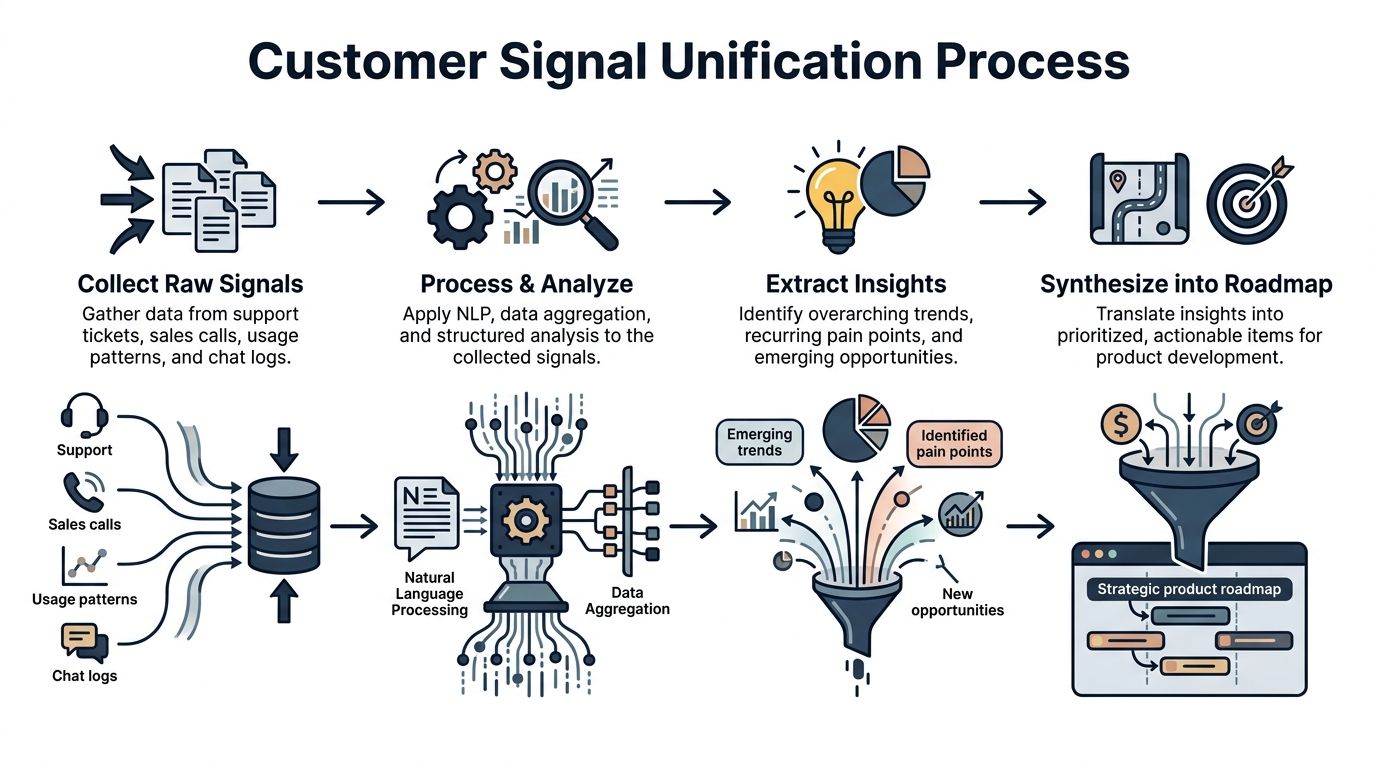

Unifying Your Customer Signal Intelligence

Most product teams already have plenty of customer input. They don’t have a signal problem. They have a fragmentation problem.

Support is in Zendesk. Sales notes live in Gong or CRM fields. Success keeps renewal risk in spreadsheets. Product looks at usage dashboards. Engineering sees bug reports in Jira. Marketing hears objections on launch calls. Each team sees a slice of reality and assumes it’s the whole thing.

That’s why roadmaps drift. The product team ends up prioritizing whatever source is easiest to access instead of whatever signal is strongest.

The raw inputs that matter

If you want to connect qualitative feedback directly to revenue impact, start by treating customer signal as an operating system, not a collection bin.

The useful inputs usually come from five places:

- Support conversations: tickets show repeated friction, the language customers use, and the operational cost of unresolved issues.

- Sales and renewal calls: call transcripts reveal deal blockers, competitive objections, and features tied to expansion or hesitation.

- Behavioral product data: usage patterns tell you whether the pain is broad, shallow, recurring, or isolated.

- Customer success notes: these expose risk before churn happens and show where expected value is breaking down.

- Engineering and incident data: reliability issues often surface as customer complaints long before they become roadmap items.

The point isn’t to dump all of that into one folder. The point is to create a shared model that can map signals to accounts, segments, and business outcomes.

Why manual clustering breaks down

A lot of roadmap guides still assume PMs can read through notes, cluster themes manually, and emerge with confidence. That works at small scale. It falls apart once the volume rises and feedback comes in continuously.

A major gap in traditional roadmapping guidance is the lack of AI-driven analysis for quantifying revenue impact. Manual clustering is still common, but it misses automated pattern detection. The cited figures show why the gap matters: tools like SigOS show 87% correlation accuracy, 62% of SaaS PMs struggle with prioritizing qualitative feedback, and subjective methods are associated with 25% higher churn. Teams using AI quantification reduce misprioritization by 40%, yet only 18% adopt that approach today (NN Group roadmapping steps).

Those numbers explain why teams feel stuck. They have more data than ever, but without structure it becomes noise.

Build one source of truth for customer signal

A practical system for building a roadmap from customer intelligence usually follows this flow:

Collect and normalize

Pull inputs from the tools your teams already use. Zendesk, Intercom, Gong, HubSpot, Jira, product analytics, and customer success platforms all matter if they capture recurring customer reality.

Normalization is the boring part and the important part. Standardize account names, segment tags, issue categories, and timestamps. If one system says “SSO” and another says “login auth,” you’ll split the same signal into fake categories.
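A minimal sketch of that normalization step, assuming feedback arrives as simple records exported from each tool. The alias table, field names, and sample records are hypothetical; a real mapping would come from your own taxonomy.

```python
from collections import Counter

# Hypothetical alias table: every variant label maps to one canonical category.
CATEGORY_ALIASES = {
    "sso": "authentication",
    "login auth": "authentication",
    "single sign-on": "authentication",
    "csv export": "reporting",
    "export": "reporting",
}


def normalize_category(raw_label: str) -> str:
    """Lowercase, collapse whitespace, then map known aliases to a canonical name."""
    cleaned = " ".join(raw_label.lower().split())
    return CATEGORY_ALIASES.get(cleaned, cleaned)


def normalize_account(raw_name: str) -> str:
    """Lowercase, drop commas and periods, and strip common legal suffixes."""
    cleaned = " ".join(raw_name.lower().replace(",", " ").replace(".", " ").split())
    for suffix in (" inc", " llc", " ltd"):
        if cleaned.endswith(suffix):
            cleaned = cleaned[: -len(suffix)]
    return cleaned.strip()


# Illustrative records from three different tools.
records = [
    {"source": "zendesk", "account": "Acme, Inc.", "category": "SSO"},
    {"source": "gong", "account": "ACME Inc", "category": "Login  Auth"},
    {"source": "jira", "account": "Globex LLC", "category": "CSV export"},
]

normalized = [
    (normalize_account(r["account"]), normalize_category(r["category"]))
    for r in records
]
category_counts = Counter(category for _, category in normalized)
```

Without the alias table, the first two records would count as two different issues from two different accounts. With it, they collapse into one signal from one account, which is what the later scoring steps need.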

Enrich with account context

Not all feedback should carry equal weight. A complaint from a power user in a strategic segment means something different from a one-off request from a poor-fit account.

Enrichment considers these aspects:

- Segment and plan type

- Renewal stage

- Expansion potential

- Product usage depth

- Open opportunity context

- Support intensity

Without this layer, product teams default to counting mentions. Mention counts are useful, but they’re not prioritization.
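To make the difference between counting and weighting concrete, here is a rough sketch. The `Mention` fields and the multipliers are assumptions chosen for illustration, not benchmarks; the only point is that account context changes the total.

```python
from dataclasses import dataclass


@dataclass
class Mention:
    theme: str
    strategic_segment: bool  # account sits in a segment the company is targeting
    days_to_renewal: int     # renewal stage, expressed as days until renewal
    usage_depth: float       # 0.0 (barely active) to 1.0 (power user)


def mention_weight(m: Mention) -> float:
    """Illustrative weighting; every multiplier here is an assumption."""
    weight = 1.0
    if m.strategic_segment:
        weight *= 2.0
    if m.days_to_renewal <= 90:
        weight *= 1.5
    weight *= 0.5 + m.usage_depth  # scales from 0.5x to 1.5x
    return weight


def weighted_totals(mentions: list[Mention]) -> dict[str, float]:
    """Sum the weights of all mentions, grouped by theme."""
    totals: dict[str, float] = {}
    for m in mentions:
        totals[m.theme] = totals.get(m.theme, 0.0) + mention_weight(m)
    return totals


mentions = [
    Mention("bulk export", False, 300, 0.2),
    Mention("bulk export", False, 250, 0.1),
    Mention("bulk export", False, 400, 0.3),
    Mention("permissions setup", True, 60, 0.9),
    Mention("permissions setup", True, 45, 0.8),
]

totals = weighted_totals(mentions)
```

By raw count, "bulk export" leads three mentions to two. Once segment, renewal timing, and usage depth are applied, "permissions setup" carries far more weight.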

Detect patterns across channels

The strongest roadmap signals show up in multiple places. A workflow problem appears in support tickets, sales objections, and usage drop-off. That’s not just feedback. That’s evidence.

I usually look for three pattern types:

- Repeated pain with broad spread: common across many accounts or teams.

- Concentrated pain with high account value: limited volume, but tied to key renewals or expansion paths.

- Emergent risk: a new pattern with limited history, but rising quickly enough to merit investigation.
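The three pattern types can be expressed as a simple rule-based classifier. This is a sketch under stated assumptions: the `ThemeStats` fields and all three thresholds are hypothetical and would need tuning against your own account base.

```python
from dataclasses import dataclass


@dataclass
class ThemeStats:
    name: str
    accounts_affected: int    # distinct accounts mentioning the theme
    channels: set[str]        # e.g. {"support", "sales", "usage"}
    revenue_at_stake: float   # ARR tied to affected renewals or expansions
    mentions_last_30d: int
    mentions_prior_30d: int


# Hypothetical thresholds.
BROAD_ACCOUNTS = 20
HIGH_VALUE_ARR = 250_000
EMERGENT_GROWTH = 2.0


def classify(theme: ThemeStats) -> str:
    """Assign a theme to one of the three pattern types, or 'monitor'."""
    if theme.accounts_affected >= BROAD_ACCOUNTS and len(theme.channels) >= 2:
        return "repeated pain, broad spread"
    if theme.revenue_at_stake >= HIGH_VALUE_ARR:
        return "concentrated pain, high account value"
    prior = max(theme.mentions_prior_30d, 1)  # avoid division by zero
    if theme.mentions_last_30d / prior >= EMERGENT_GROWTH:
        return "emergent risk"
    return "monitor"


themes = [
    ThemeStats("export timeouts", 35, {"support", "usage"}, 80_000, 40, 38),
    ThemeStats("audit log gaps", 3, {"sales"}, 600_000, 4, 3),
    ThemeStats("webhook retries", 4, {"support"}, 20_000, 9, 2),
    ThemeStats("dark mode", 2, {"support"}, 5_000, 1, 1),
]

labels = {t.name: classify(t) for t in themes}
```

The rule order is a design choice: broad multi-channel pain is checked first, then revenue concentration, then growth rate. A theme that matches none of the three stays on a watch list rather than entering scoring.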

A useful guide to the mechanics behind this is https://www.sigos.io/blog/analyse-customer-feedback, especially if you’re trying to move from scattered comments to structured themes that the product team can score.

Translate themes into product opportunities

This is the handoff point where many teams lose the plot. They jump from “customers are complaining about reporting” to “build custom dashboards.”

Don’t do that yet.

The theme should be written as a problem statement with business context. For example:

- Admins can’t identify account-level usage risk fast enough to intervene before renewal risk grows.

- Multi-team customers struggle to roll out the product because permissions and setup create friction.

- Support volume spikes around a recurring workflow failure that interrupts core usage.

That framing keeps the team in problem space long enough to evaluate options well.

Connect feedback to revenue, not votes

This is the underserved part of roadmapping. Plenty of teams say they’re customer-centric. Far fewer can explain how a support theme affects revenue.

The better method is to ask:

- Which accounts are associated with this signal?

- What is their renewal or expansion context?

- Does the issue appear before reduced usage or stalled rollout?

- Does sales encounter it in active deals?

- If solved, what business outcome is most likely to change?

That doesn’t mean every roadmap item gets a perfect dollar estimate. It means the roadmap should rank work by economic relevance, not anecdotal popularity.

A feature requested by ten low-fit accounts can be less important than a workflow fix blocking one strategic renewal motion.
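That comparison can be sketched in a few lines. The account data, fit scores, and theme names below are invented for illustration, and "ARR times fit" is only one rough proxy for economic relevance.

```python
from collections import defaultdict

# Illustrative data: account -> annual recurring revenue and a 0..1 fit score.
accounts = {f"smb-{i}": {"arr": 4_000, "fit": 0.3} for i in range(10)}
accounts["enterprise-1"] = {"arr": 300_000, "fit": 1.0}

# Each signal links a theme to the account that raised it.
signals = [("custom themes", f"smb-{i}") for i in range(10)]
signals.append(("approval workflow fix", "enterprise-1"))


def rank_by_votes(signals):
    """Rank themes by raw mention count."""
    counts = defaultdict(int)
    for theme, _ in signals:
        counts[theme] += 1
    return sorted(counts, key=counts.get, reverse=True)


def rank_by_revenue(signals, accounts):
    """Rank themes by fit-weighted ARR of the accounts that raised them."""
    exposure = defaultdict(float)
    seen = set()
    for theme, account_id in signals:
        if (theme, account_id) in seen:  # count each account once per theme
            continue
        seen.add((theme, account_id))
        account = accounts[account_id]
        exposure[theme] += account["arr"] * account["fit"]
    return sorted(exposure, key=exposure.get, reverse=True)


by_votes = rank_by_votes(signals)
by_revenue = rank_by_revenue(signals, accounts)
```

The two rankings disagree. Votes put "custom themes" first with ten requests, while the revenue-weighted view puts the single enterprise workflow fix first.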

What a unified signal system changes

Once customer signal is unified, the roadmap conversation gets sharper.

Support stops saying “we have too many tickets” and starts saying “this workflow issue is concentrated in high-value accounts and appears before expansion stalls.” Sales stops saying “prospects want this” and starts saying “this missing capability is showing up in deals with a specific profile.” Product stops acting as a note collector and starts acting as an allocator of scarce investment.

That’s when building a roadmap starts to look less like arbitration and more like strategy.

Prioritizing with Ruthless Objectivity

Monday morning, the roadmap review starts the same way it ended last quarter. Sales wants the feature tied to two late-stage deals. Support wants the workflow generating the highest ticket volume. Engineering wants time to clean up a part of the stack that slows every release. All three arguments are valid. The job is deciding which one earns investment now, and proving why.

Under these conditions, weak prioritization systems break. A simple value-versus-effort chart can sort a backlog. It usually cannot hold up when revenue targets, delivery constraints, and customer pressure collide.

Start with an honest As-Is audit

Before scoring opportunities, define the current operating reality. Teams skip this step when they are in a hurry, then act surprised when the roadmap is full of work that looked attractive in theory and expensive in practice.

The audit should answer five questions:

- Where will technical debt slow delivery or raise implementation risk?

- Which parts of the opportunity are still based on weak evidence?

- What dependencies exist across design, platform, security, compliance, or GTM?

- How much real capacity is available after maintenance and customer commitments?

- Which assumptions need to be tested before the team commits build resources?

I have seen teams debate priorities for an hour, then realize halfway through that one item depends on a platform migration nobody staffed. At that point, the scoring exercise is theater.

Use a framework, but make it fit your business

RICE, Kano, Opportunity Scoring, and weighted models all have value. The mistake is treating any framework as objective by default. The framework only helps if the inputs reflect how the business wins.

Comparison of Prioritization Frameworks

| Framework | Primary Focus | Key Variables | Best For |
|---|---|---|---|
| RICE | Reach and expected impact | Reach, impact, confidence, effort | High-volume product decisions where audience size is measurable |
| Kano | Customer delight and expectation | Basic needs, performance needs, delight factors | Experience design and feature satisfaction trade-offs |
| Opportunity Scoring | Underserved customer needs | Importance, satisfaction gaps | Discovery-heavy work where unmet need is the main signal |
| Weighted Scoring Model | Business decision quality | Business value, feasibility, risk | Cross-functional roadmap planning with strategic and commercial trade-offs |

For B2B SaaS roadmaps, I usually choose a weighted scoring model. It handles the trade-offs that matter in real portfolio decisions: retention risk, expansion potential, strategic fit, delivery cost, and uncertainty.

A feature requested by many users can still rank below a smaller fix if that fix protects a large renewal or removes friction in a segment with high expansion potential. That is the difference between collecting customer opinions and allocating investment.

Build a scorecard tied to dollars and risk

A useful scorecard is simple enough to use every quarter and specific enough to survive executive review. My scorecard usually includes four categories.

Business value

Score the likely commercial effect. That can mean retention protection, expansion enablement, win-rate improvement, onboarding acceleration, or lower service cost. If the opportunity came from customer feedback, connect that feedback to accounts, pipeline, renewal timing, and usage patterns before assigning the score.

Strategic alignment

Some opportunities are real and still should wait. If the company is focused on enterprise expansion, work that mainly helps low-fit SMB accounts should not crowd out roadmap capacity.

Feasibility

Estimate engineering complexity, design effort, operational readiness, and dependency load. Teams that underweight feasibility create roadmaps that look ambitious and then slip for predictable reasons.

Risk

Include delivery risk, adoption risk, compliance exposure, and evidence quality. A high-upside item with weak evidence should usually earn discovery funding first, not a delivery commitment.

A spreadsheet is often enough. The point is not mathematical precision. The point is forcing explicit trade-offs in one place.
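The same scorecard can be sketched in code. The weights, the 1-5 scale, and the two sample opportunities are assumptions for illustration; each team would set its own.

```python
# Hypothetical weights for the four scorecard categories; they sum to 1.0.
WEIGHTS = {
    "business_value": 0.40,
    "strategic_alignment": 0.25,
    "feasibility": 0.20,
    "risk": 0.15,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Combine 1-5 category scores. Risk is scored so that 5 means low risk."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


opportunities = {
    "Reduce reporting friction for multi-team rollouts": {
        "business_value": 5, "strategic_alignment": 5, "feasibility": 3, "risk": 3,
    },
    "Advanced export settings for SMB accounts": {
        "business_value": 2, "strategic_alignment": 2, "feasibility": 5, "risk": 4,
    },
}

ranked = sorted(
    opportunities,
    key=lambda name: weighted_score(opportunities[name]),
    reverse=True,
)
```

The easier item loses here because the weights favor business value and strategic alignment over feasibility. Changing the weights changes the ranking, which is exactly the trade-off conversation the scorecard is meant to force.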

Write roadmap items as business outcomes

The wording matters more than many teams admit. Output-based items invite scope creep because nobody has defined what success looks like.

Bad roadmap item:

- Build advanced export settings

Better roadmap item:

- Reduce reporting friction for multi-team accounts that are stalling during rollout

Bad roadmap item:

- Improve notifications

Better roadmap item:

- Alert account owners to usage risk early enough for customer success to intervene before renewal conversations weaken

That change does two things. It gives the team room to solve the problem well, and it makes post-launch measurement possible. It also keeps qualitative feedback connected to revenue impact, which is the standard a roadmap should meet.

Run a scoring process that surfaces disagreement fast

The mechanics should be lightweight. The discipline should be high.

- Score independently first. Product, engineering, and GTM should score items before the meeting. Group scoring from a blank sheet usually turns into anchoring on the loudest voice.

- Debate gaps, not every line item. If Product scores an opportunity high on revenue impact and Engineering scores it low on feasibility, that is where discussion belongs.

- Force a rank order. Ties usually mean the team has not made a real trade-off.

- Separate discovery from delivery. If an item has promise but weak evidence, fund the learning work. Do not pretend it is ready for a committed roadmap slot.

- Trim aggressively. A roadmap that keeps every reasonable idea is just a backlog with nicer formatting.
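One way to find the gaps worth debating is to compare the independent scores before the meeting. The sketch below assumes each function submits a 1-5 score per item; the item names and the threshold are hypothetical.

```python
# Each function scores items independently (1-5) before the meeting.
# All values are illustrative.
scores = {
    "product":     {"SSO hardening": 5, "Usage-risk alerts": 4, "Bulk export": 2},
    "engineering": {"SSO hardening": 2, "Usage-risk alerts": 4, "Bulk export": 3},
    "gtm":         {"SSO hardening": 5, "Usage-risk alerts": 3, "Bulk export": 2},
}

GAP_THRESHOLD = 2  # hypothetical: a spread this large earns meeting time


def score_spread(scores):
    """Return max-minus-min score per item across all scoring functions."""
    items = next(iter(scores.values())).keys()
    spread = {}
    for item in items:
        values = [by_item[item] for by_item in scores.values()]
        spread[item] = max(values) - min(values)
    return spread


spread = score_spread(scores)
to_debate = [item for item, gap in spread.items() if gap >= GAP_THRESHOLD]
```

Only "SSO hardening" crosses the threshold, so the meeting starts there instead of walking the list top to bottom.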

Teams that need more structure can use a feature prioritization matrix for product roadmap decisions to standardize criteria and make scoring easier to repeat across planning cycles.

Objectivity means explicit judgment

No scoring model removes judgment. It exposes it.

That is the point. Product leaders still make bets. Good teams make those bets with visible assumptions, commercial context, delivery constraints, and a ranking they can defend in plain language. If item four beats item five, the team should be able to explain why in two minutes, with evidence tied back to strategy and likely business impact.

That standard changes the roadmap conversation. It stops being a contest between opinions and becomes a decision about where the next dollar of product investment has the best chance to pay back.

Aligning Stakeholders and Communicating Your Roadmap

Monday morning, the CRO asks why a deal-driven feature is not on the roadmap. Engineering says the current quarter is already overcommitted. Customer success wants a firm date for a retention issue affecting renewals. If the roadmap cannot explain the trade-off in commercial terms, the room defaults to opinion and urgency.

A roadmap can be well reasoned and still fail in practice if stakeholders cannot see how customer evidence turned into a priority call. The job here is not to get agreement on every item. The job is to make the decision logic clear enough that sales, engineering, executives, and customer teams understand what was chosen, what was deferred, and what would change the ranking.

Different audiences need different views

The roadmap itself should stay consistent. The view should change by audience.

Executives need the business case. Engineering needs sequencing, constraints, and unknowns. Sales and customer success need enough context to set expectations without turning tentative work into a promise. In practice, one roadmap deck rarely does all three well.

I keep three active views.

Executive view

A short, themes-based version tied to company goals. For each theme, show the customer problem, expected business effect, confidence level, major risk, and timing horizon. If feedback from support or sales influenced the call, quantify it. Revenue at risk, expansion potential, renewal exposure, or pipeline impact belongs here.

Delivery view

A planning view for engineering and design with dependencies, technical unknowns, scope boundaries, and the line between committed work and discovery. Here, hard trade-offs show up. A feature that could help a large prospect may still move down if platform work or migration risk would crowd out higher-confidence retention work.

GTM and customer-facing view

A plain-language version for sales, success, and marketing. Focus on the customer problem being addressed, who it helps, confidence level, and what should not be promised externally. That last part prevents accidental commitments created by a screenshot in a QBR or a hopeful note in a deal call.

Explain the decision, not just the output

Stakeholder pushback usually signals missing context.

The strongest roadmap updates answer four questions clearly:

- What business goal does this work support?

- What customer evidence supports the choice?

- What commercial impact do we expect?

- What would cause us to reprioritize?

This point is critical for maintaining roadmap integrity. Teams lose trust when priorities shift and nobody can explain why. Teams also lose trust when priorities do not shift even though the evidence changed.

The best roadmap conversations I have seen use a simple chain of reasoning. Support tickets revealed a repeated workflow failure in a high-retention segment. Customer success tied that issue to renewal risk. Sales confirmed the same gap in late-stage enterprise deals. Product translated that pattern into an estimated dollar impact, then compared it against other opportunities on the board. Stakeholders may still disagree with the final rank, but they can see the logic.

That is a much stronger position than saying, "customers asked for it."

Handle pushback with evidence and thresholds

Good pushback improves the roadmap. Poorly processed pushback turns every planning cycle into a lobbying exercise.

When sales brings in an urgent request, ask for account value, deal stage, frequency across similar prospects, and whether the issue maps to a strategic segment. When engineering challenges a date or scope, ask what dependency, architectural constraint, or staffing limit changes the risk profile. When executives ask why a visible request is missing, show how it ranked against retention, expansion, or platform work that carried a higher expected return.

Use language that keeps the discussion grounded:

- For executives: “We ranked this lower because the expected business impact is smaller and the evidence is thinner than the work already in Now.”

- For sales: “If this blocker appears in multiple target accounts or changes pipeline quality, we will rerun the score. One large prospect matters. A repeatable revenue pattern matters more.”

- For engineering: “If the implementation risk is higher than our current assumption, we should reduce scope or move this from commitment to investigation.”

One sentence helps a lot here. “What new evidence would change the priority?” It turns a debate into a test.

Make change visible without making promises you cannot keep

Broad stakeholder communication works better when the roadmap shows confidence, not fake precision. I prefer a now-next-later format for this reason. It gives teams room to learn while still making real commitments.

Define the labels explicitly:

- Now means staffed, scoped enough to execute, and tied to a measured outcome

- Next means likely, but still dependent on learning, capacity, or changing business conditions

- Later means strategically relevant and not committed

Those labels only work if the company uses them consistently. If sales treats “Next” as launch-ready or leadership treats “Later” as approved budget, the format breaks down.
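One way to keep the labels honest is to check them mechanically. This sketch assumes roadmap items are tracked as structured records; the `RoadmapItem` fields and the sample items are hypothetical, and the checks encode only the definition of Now given above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RoadmapItem:
    title: str
    horizon: str                          # "now", "next", or "later"
    staffed: bool = False
    scoped: bool = False
    outcome_metric: Optional[str] = None  # the measured outcome, if defined


def validate(item: RoadmapItem) -> list[str]:
    """Return the reasons an item does not meet its label's definition."""
    if item.horizon not in {"now", "next", "later"}:
        return [f"unknown horizon: {item.horizon}"]
    problems = []
    if item.horizon == "now":
        if not item.staffed:
            problems.append("Now items must be staffed")
        if not item.scoped:
            problems.append("Now items must be scoped enough to execute")
        if not item.outcome_metric:
            problems.append("Now items must name a measured outcome")
    return problems


ready = RoadmapItem("Usage-risk alerts", "now", True, True, "at-risk renewals saved")
premature = RoadmapItem("Permissions redesign", "now", staffed=True)
exploratory = RoadmapItem("Marketplace integrations", "later")

premature_problems = validate(premature)
```

An item that fails the Now checks is a candidate for Next, where dependence on learning or capacity is expected rather than hidden.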

Consistency matters more than polish. A credible roadmap tells stakeholders what the company is solving, why it matters, what revenue or retention effect it is expected to have, and how the team will react when new customer evidence changes the call.

Measuring Success and Creating a Feedback Loop

A roadmap starts telling the truth after launch.

Before we changed our process, teams celebrated shipping and then moved on to the next request. That created a blind spot. We knew what got built, but not which work reduced churn risk, shortened sales cycles, or expanded usage in high-value accounts. If customer feedback is going to shape priorities, the team has to measure whether acting on that feedback produced the commercial result it was supposed to produce.

Treat each roadmap item as a business hypothesis. A support-driven workflow fix should reduce ticket volume in a specific area, improve completion rates for a target segment, or remove friction that sales hears in active deals. A reporting improvement should increase adoption in the accounts that asked for it, help retention conversations, or support expansion. If none of that happens, the learning is clear. The request was real, but the business case was weaker than expected, or the solution missed the actual problem.

Review the roadmap on a quarterly cadence

Quarterly reviews work because they force a hard question. Did this initiative create the outcome we funded it for?

As noted earlier, structured roadmap reviews are useful because they push teams past delivery status and into impact. The review should focus on five things:

- Impact: Did the work change the customer or business outcome the team expected?

- Adoption: Did the intended users adopt it in a meaningful way, especially the segment tied to the original opportunity?

- Reprioritization: Has new evidence changed what belongs in Now, Next, or Later?

- Readiness: Are upcoming items clear enough, scoped enough, and de-risked enough to start?

- Alignment: Does the current roadmap still match company goals and revenue priorities?

That structure sounds simple. In practice, it exposes uncomfortable gaps. Teams often discover they launched a feature without baseline metrics, or they tracked usage without tying it to renewals, expansion, or deal movement. Fixing that discipline is part of building a roadmap the business can trust.

Measure outcomes, not just usage

The common post-launch mistake is counting activity and calling it success.

Usage matters, but only in context. If a feature gets clicks from low-value accounts and does nothing for the customers tied to churn risk or pipeline friction, it should not earn the same score as work that changes a renewal conversation.

Useful post-launch checks include:

- Customer behavior: Did target users complete the workflow faster, with fewer failures or drop-offs?

- Operational signal: Did support volume fall in the affected area, and did escalation severity change?

- Commercial effect: Did the work remove friction in live deals, help save at-risk revenue, or support expansion in existing accounts?

- Adoption quality: Did the right segment adopt it, not just the broad user base?

The revenue lens matters most in this context. If sales logged the same blocker across six enterprise prospects and product shipped a fix, the review should look at pipeline progression for those accounts. If support flagged an issue across a set of customers with high renewal value, the review should examine retention risk before and after release. That closes the loop between qualitative feedback and financial impact. It also improves the next prioritization cycle because the team can compare assumptions with actual results.
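A simple before-and-after comparison for the target segment might look like the sketch below. The account names and ticket counts are invented, and a naive comparison like this does not control for seasonality or other changes shipped in the same period, so treat the result as a prompt for the review rather than proof.

```python
from statistics import mean

# Illustrative weekly ticket counts per account in the affected workflow.
before = {"acme": 12, "globex": 9, "initech": 3, "hooli": 2}
after = {"acme": 5, "globex": 4, "initech": 3, "hooli": 2}

# Hypothetical target segment: accounts tied to the original opportunity.
target_segment = {"acme", "globex"}


def pct_change(before_vals, after_vals):
    """Percentage change in the mean from before to after."""
    base = mean(before_vals)
    return (mean(after_vals) - base) / base * 100


def segment_change(before, after, segment):
    """Percentage change restricted to accounts in the given segment."""
    ids = [account for account in before if account in segment]
    return pct_change([before[a] for a in ids], [after[a] for a in ids])


overall = pct_change(list(before.values()), list(after.values()))
targeted = segment_change(before, after, target_segment)
```

In this made-up data the drop is steeper in the target segment than across all accounts, which is the pattern a review would hope to see when the fix was funded for that segment.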

Feed the learning back into the next roadmap

Every release should improve the next decision.

When an initiative performs well, document why the signal was reliable. Maybe the pain showed up across support, sales, and product usage for the same account segment. Maybe the scope stayed narrow and solved one expensive problem well. Those are patterns worth repeating.

When an initiative underperforms, treat it as calibration, not failure. The team may have overestimated urgency, chosen the wrong segment, or built too broad a solution. Those lessons should change future scoring. Requests that come from noisy channels but lack revenue evidence should get less weight. Signals tied to churn, expansion, or repeatable deal friction should get more.

The roadmap gets stronger when outcomes change the scoring model, not just the slide deck.

If your team is drowning in support tickets, call transcripts, and scattered customer requests, SigOS helps turn that noise into roadmap-ready signal. It connects feedback from tools like Zendesk, Intercom, Jira, and GitHub, identifies patterns tied to churn and expansion, and surfaces the issues most likely to affect revenue so product teams can prioritize with more confidence.
