Unlock Revenue with Multi-Touch Attribution Modeling

Master multi-touch attribution modeling. Compare models, build data pipelines, and connect insights to drive real revenue growth.

Most advice on multi-touch attribution modeling starts in the wrong place. It starts with model selection, as if the hard part is choosing between linear, U-shaped, W-shaped, or algorithmic credit assignment. It isn't.

The hard part is making attribution useful enough that product, growth, sales, and support teams make concrete changes to what they do on Monday morning.

A team can have a polished dashboard, several clean channel groupings, and a respectable attribution vendor, then still make decisions the same old way. Paid search gets protected because it closes demand. Brand content gets cut because it rarely gets the final click. Product issues stay buried because nobody ties customer friction back to attributed pipeline or expansion. The output exists, but the business doesn't act on it.

That disconnect is the core issue. Funnel’s research captures it well: only 22% of marketers believe they’re using the right attribution model, yet most guidance still centers on model choice instead of operationalizing insights into decisions like fixing onboarding friction, prioritizing a feature, or addressing churn signals tied to specific touchpoints (Funnel research on the attribution-to-action gap).

A model is not a strategy. It is an input to a decision system. If it doesn't influence budget shifts, lifecycle changes, product prioritization, or sales follow-up, it has no practical value.

Your Attribution Model Is Not Your Strategy

Teams often treat attribution like a reporting upgrade. They replace last-click with a more advanced model, present a few cleaner charts in the next QBR, and assume the job is done. Then nothing changes.

The reason is simple. Attribution answers only one layer of the problem. It helps estimate which touches influenced conversion. It doesn't, by itself, tell you what to fix, what to build, what to stop funding, or where customer experience is breaking.

Where most teams get stuck

The common failure mode looks like this:

- The dashboard is technically correct: Marketing ops has stitched together campaign data, CRM stages, and website events.

- The readout is interesting: Teams can see that multiple channels contributed to closed revenue.

- The decision process stays untouched: Budgeting still follows last-quarter precedent, product planning still follows the loudest requests, and support trends still live in a separate system.

That is the attribution-to-action gap.

Practical rule: If your attribution output can't be tied to a specific action owner, it will become a reporting artifact.

A strong attribution practice needs three things at once. It needs a model that reflects reality, data that can support that model, and an operating rhythm that converts insight into action. Most companies focus heavily on the first item and underinvest in the other two.

What strategy actually looks like

In practice, attribution becomes strategic only when teams use it to answer questions like these:

- Growth question: Which touchpoint mix consistently creates qualified pipeline, not just form fills?

- Product question: Which in-app or onboarding moments appear in high-value journeys, and where do users stall?

- Support question: Which recurring complaints show up disproportionately in customers from specific acquisition paths?

- Revenue question: Which touchpoint patterns correlate with expansion, not just initial conversion?

That shift matters because customer journeys aren't made of channels alone. They're made of interactions, delays, objections, product experiences, and follow-through.

Multi-touch attribution modeling is useful. But the value doesn't come from having a prettier credit distribution. It comes from using that distribution to drive a better business response.

Beyond Last-Click: The Case for Multi-Touch Attribution

Last-click survives because it produces a simple answer, not because it accurately reflects how buyers convert.

In a real SaaS journey, a customer might discover you through search, return through content, attend a webinar, click a retargeting ad, start a trial, get nudged by lifecycle email, and only then book a demo or buy. Giving 100 percent of the credit to the final touch makes reporting cleaner. It also makes budget decisions worse.

That is the core case for multi-touch attribution. It does not just improve measurement. It fixes a decision problem.

Why single-touch breaks down

Single-touch models fail in predictable ways because they flatten a sequence into one event.

- Last-click overstates closers: Branded search, retargeting, affiliate coupon traffic, and bottom-funnel email absorb credit created by earlier touches.

- First-touch overstates discovery: Awareness campaigns look more productive than they are if later education, proof, and follow-up never get counted.

- Middle touches disappear: Comparison pages, case studies, webinar attendance, sales follow-up, onboarding milestones, and product usage signals vanish from the record.

That creates a practical problem, not just an analytical one. Teams keep funding whatever shows up at the point of conversion and cut the work that made conversion possible in the first place.

If you need a quick refresher on the mechanics, the guide "What is multi-touch attribution" gives a solid overview of how credit is distributed across a journey rather than assigned to a single event.

Why multi-touch matters in practice

Multi-touch attribution gives operators a better map of influence across the full path. That matters most in longer, messier buying cycles where multiple channels, people, and product interactions shape the outcome.

For growth teams, the immediate benefit is better budget allocation. For product and lifecycle teams, the bigger benefit is visibility into the touches that change behavior. That distinction matters. A model can show that webinar attendance appears in high-converting journeys. The useful next question is whether attendees activate faster, invite teammates sooner, or retain better after onboarding.

That is where many teams stall. They adopt a multi-touch model, build a cleaner dashboard, and still struggle to turn credit into action. The gap usually appears because attribution data stays trapped at the channel level while actual decisions sit elsewhere: onboarding flow, lead routing, nurture timing, sales follow-up, and in-app prompts.

A useful companion concept is revenue attribution tied to actual business outcomes. It pushes the conversation past lead volume and toward pipeline quality, expansion, and payback.

What multi-touch changes

Multi-touch attribution does not remove uncertainty. It reduces a specific bias: the habit of giving all strategic importance to the touchpoint closest to the conversion event.

Instead of asking, "Which channel got the last click?" better teams ask, "Which sequence of touches consistently creates qualified pipeline, activation, and revenue?" That shift leads to better experiments, better sequencing, and fewer cuts to programs that assist conversion without closing it directly.

The value is not the model itself. The value comes from using that model to decide what to change.

Comparing Attribution Models: From Simple Rules to Smart Algorithms

Picking a model is the easy part. The harder question is whether the model will change budget decisions, lifecycle design, and product experiments. If it cannot survive a finance review or help a growth team decide what to change next, it is just a prettier way to argue about channel credit.

The right choice depends on three practical constraints: how your funnel works, how clean your data is, and how much complexity your team can explain and maintain. A startup with patchy CRM stages should not jump straight to algorithmic attribution. A larger B2B team with reliable event streams, opportunity history, and enough conversion volume may outgrow simple rules quickly.

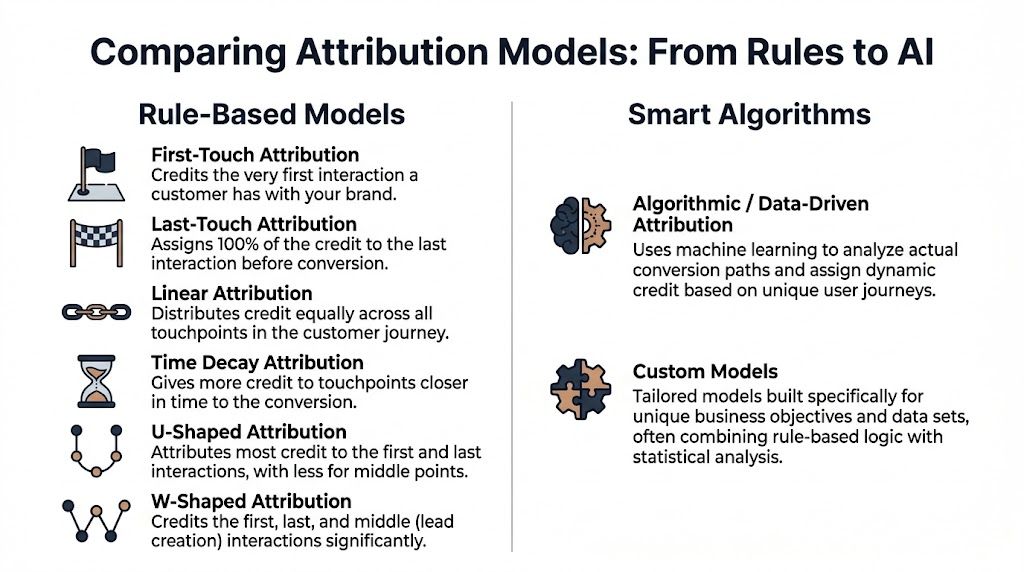

Rule-based models

Rule-based models assign credit using fixed logic. Their strength is clarity. Everyone can see how the credit was assigned, challenge the assumptions, and compare results over time.

That transparency matters more than many teams admit.

First-touch and last-touch

These models put all credit on one interaction. First-touch credits the channel that introduced the buyer. Last-touch credits the final interaction before conversion.

They are useful for narrow questions, not broad strategy. First-touch can help evaluate top-of-funnel sourcing. Last-touch can help review channels that close demand. In a real buying journey, both leave out too much to guide spend allocation on their own.

Linear

Linear attribution splits credit evenly across each recorded touchpoint, as outlined in Improvado’s explanation of multi-touch models.

It is a solid starting point because it is simple, fair enough, and hard to manipulate in meetings. The weakness is obvious. A pricing page visit, a webinar, and a branded search click rarely deserve equal weight. Linear gives teams a baseline, not a final answer.
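As a minimal sketch of the even split, assume a journey is simply an ordered list of channel names. The function name and the sample journey are illustrative, not taken from any vendor's implementation.

```python
def linear_credit(journey):
    """Split one conversion's credit evenly across every recorded touch."""
    if not journey:
        return {}
    share = 1.0 / len(journey)
    credit = {}
    for channel in journey:
        # A channel that appears more than once accumulates one share per touch.
        credit[channel] = credit.get(channel, 0.0) + share
    return credit


# Hypothetical journey, ordered from earliest to latest touch.
journey = ["paid_social", "content", "webinar", "content"]
print(linear_credit(journey))
# {'paid_social': 0.25, 'content': 0.5, 'webinar': 0.25}
```

Note that the split is per touch, not per channel, so a channel with repeated touches collects more credit. Whether that is desirable is a business decision worth making explicitly.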

Time-decay

Time-decay assigns more credit to touches that happen closer to conversion. That usually fits short consideration cycles, retargeting-heavy programs, and sales motions where recent interactions have strong influence.

It can also distort reality. If your pipeline depends on early education, category creation, or analyst content months before opportunity creation, time-decay will tend to under-credit the work that made later conversion possible.
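One common way to express time-decay is exponential decay with a half-life. The sketch below assumes each touch is recorded with the number of days before conversion, and the 7-day half-life is an arbitrary illustration rather than a recommended setting.

```python
def time_decay_credit(touches, half_life_days=7.0):
    """touches: list of (channel, days_before_conversion) pairs.

    A touch loses half its weight for every `half_life_days` it sits
    before the conversion. Weights are then normalized to sum to 1.
    """
    if not touches:
        return {}
    weights = [(channel, 0.5 ** (days / half_life_days)) for channel, days in touches]
    total = sum(weight for _, weight in weights)
    credit = {}
    for channel, weight in weights:
        credit[channel] = credit.get(channel, 0.0) + weight / total
    return credit


# Hypothetical journey: early education, then a webinar, then a same-day ad click.
touches = [("analyst_report", 28), ("webinar", 14), ("retargeting_ad", 0)]
for channel, share in time_decay_credit(touches).items():
    print(f"{channel}: {share:.3f}")
```

With these inputs the analyst report, four half-lives before conversion, ends up with under 5 percent of the credit. That is the under-crediting risk described above, made visible.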

U-shaped

A U-shaped model emphasizes the first and last touch, then spreads the remaining credit across the middle interactions.

This can work when introduction and conversion are the two clearest moments in the funnel. It breaks down when the middle of the journey carries meaningful weight, such as demo attendance, sales qualification, or activation milestones. Teams often like U-shaped models because they feel intuitive. They should still treat the weighting as a business choice, not a discovered truth.
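The sketch below assumes the commonly cited 40/20/40 split, which this article does not prescribe. The endpoint weight is a parameter precisely because it is a choice, and the handling of one- and two-touch journeys is also an assumption.

```python
def u_shaped_credit(journey, endpoint_weight=0.4):
    """Weight first and last touches heavily; spread the rest over the middle."""
    n = len(journey)
    if n == 0:
        return {}
    if n == 1:
        weights = [1.0]
    elif n == 2:
        weights = [0.5, 0.5]  # no middle touches, so split evenly (assumption)
    else:
        middle = (1.0 - 2 * endpoint_weight) / (n - 2)
        weights = [endpoint_weight] + [middle] * (n - 2) + [endpoint_weight]
    credit = {}
    for channel, weight in zip(journey, weights):
        credit[channel] = credit.get(channel, 0.0) + weight
    return credit


# Hypothetical four-touch journey.
journey = ["organic_search", "case_study", "webinar", "branded_search"]
for channel, share in u_shaped_credit(journey).items():
    print(f"{channel}: {share:.2f}")
```

In this example each middle touch receives about 10 percent, regardless of whether it was a casual page view or a demo. That is the compression the paragraph above warns about.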

W-shaped

W-shaped attribution adds a mid-funnel milestone, often lead creation or qualification, to the weighting logic.

For B2B SaaS teams, this is often the most practical rule-based model because it reflects how pipeline is reviewed in the CRM. It gives credit to demand creation, lead capture, and conversion without pretending every touch matters equally. The trade-off is that it inherits the quality of your stage definitions. If lead creation is noisy or qualification rules change every quarter, the model becomes unstable.
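As a sketch, a W-shaped rule needs one extra input: the position of the touch where the lead was created. The 30/30/30/10 split below is a widely used convention, not something this article specifies, and `lead_index` is a hypothetical parameter that would come from your CRM stage timestamps.

```python
def w_shaped_credit(journey, lead_index, anchor_weight=0.3):
    """Weight first touch, lead-creation touch, and last touch equally;
    spread the remaining credit over every other touch."""
    n = len(journey)
    if n < 3 or not 0 < lead_index < n - 1:
        raise ValueError("need first, lead-creation, and last touches at distinct positions")
    anchors = {0, lead_index, n - 1}
    others = n - len(anchors)
    weights = []
    for i in range(n):
        if others == 0:
            weights.append(1.0 / 3)  # only the three anchors exist (assumption)
        elif i in anchors:
            weights.append(anchor_weight)
        else:
            weights.append((1.0 - 3 * anchor_weight) / others)
    credit = {}
    for channel, weight in zip(journey, weights):
        credit[channel] = credit.get(channel, 0.0) + weight
    return credit


# Hypothetical journey where the demo request (index 2) created the lead.
journey = ["paid_social", "content", "demo_request", "email", "sales_call"]
for channel, share in w_shaped_credit(journey, lead_index=2).items():
    print(f"{channel}: {share:.2f}")
```

The dependence on `lead_index` is the instability risk in code form: if the definition of lead creation shifts, the same journey produces a different credit split.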

Algorithmic models

Algorithmic attribution estimates contribution from observed path data instead of applying a fixed rule. The appeal is straightforward. It can account for sequence, interaction effects, and changing channel behavior in ways a static model cannot.

It also asks much more from the business.

Teams need consistent event collection, usable identity stitching, enough conversion volume, and clear governance over model updates. They also need a reporting layer that people can trust. Fast, well-structured pipelines matter here, especially if the business wants to react to campaign changes quickly, which is why real-time analytics for attribution workflows can be useful once the foundations are in place.

Why algorithmic models feel better and fail more often

The difference is not that the model sounds smarter. The difference is that it can detect patterns a rule-based model cannot represent.

- Sequence effects: a paid social touch may perform better when it comes before direct traffic or branded search.

- Conditional influence: a webinar may matter more for enterprise accounts than for self-serve signups.

- Dynamic weighting: channel contribution can shift as creative, targeting, pricing, or sales follow-up changes.

A rules model reflects your assumptions. An algorithmic model estimates contribution from historical behavior.
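One widely used family of algorithmic approaches is a first-order Markov chain scored by removal effects: estimate the conversion probability from observed paths, then ask how much it drops when a channel is removed. The toy sketch below is one possible illustration, not how any particular vendor computes credit. It assumes paths are lists of channel names with a converted flag, and the function names and sample paths are hypothetical.

```python
from collections import defaultdict


def transition_probs(paths):
    """Estimate state-to-state transition probabilities from observed paths."""
    counts = defaultdict(lambda: defaultdict(int))
    for channels, converted in paths:
        states = ["start", *channels, "conv" if converted else "null"]
        for a, b in zip(states, states[1:]):
            counts[a][b] += 1
    return {a: {b: n / sum(nxt.values()) for b, n in nxt.items()}
            for a, nxt in counts.items()}


def conversion_prob(probs, removed=None, iterations=200):
    """Probability of reaching 'conv' from 'start', by repeated in-place updates.
    A removed channel keeps value 0, so every path through it is lost."""
    value = defaultdict(float)
    value["conv"] = 1.0
    for _ in range(iterations):
        for state, nxt in probs.items():
            if state == removed:
                continue
            value[state] = sum(p * value[t] for t, p in nxt.items())
    return value["start"]


def markov_credit(paths):
    """Normalize each channel's removal effect into a share of credit."""
    probs = transition_probs(paths)
    base = conversion_prob(probs)
    if base == 0:
        return {}
    channels = [s for s in probs if s != "start"]
    effects = {c: 1 - conversion_prob(probs, removed=c) / base for c in channels}
    total = sum(effects.values())
    return {c: e / total for c, e in effects.items()} if total else {}


# Hypothetical observed paths: (ordered channels, converted?)
paths = [
    (["paid_social", "branded_search"], True),
    (["content", "webinar", "branded_search"], True),
    (["webinar"], True),
    (["paid_social"], False),
    (["content", "branded_search"], False),
]
for channel, share in sorted(markov_credit(paths).items(), key=lambda kv: -kv[1]):
    print(f"{channel}: {share:.3f}")
```

Five paths are far too few to trust. The point is only that the weights come from observed transitions, including non-converting paths, rather than from a rule someone chose. A production model would also need enough volume per transition and a plan for retraining.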

That does not make algorithmic attribution the default winner. I have seen teams buy advanced tooling before fixing campaign naming, UTM discipline, or contact-to-account mapping. The result is usually sophisticated-looking output that nobody can interpret or trust. If you need outside help cleaning reporting logic and event structure inside GA4 before you model credit, Google Analytics consulting services can be a sensible step.

Attribution model comparison

| Model | How it works | Best for | Pros | Cons |
|---|---|---|---|---|
| First-touch | Assigns all credit to the first interaction | Awareness analysis | Very easy to understand | Ignores later influence |
| Last-touch | Assigns all credit to the final interaction | Closing channel analysis | Simple reporting | Overstates bottom-funnel channels |
| Linear | Splits credit evenly across all touches | Baseline multi-touch adoption | Easy to explain, fairer than single-touch | Assumes equal influence |
| Time-decay | Gives more credit to recent touches | Shorter cycles, retargeting-heavy programs | Reflects recency | Can undervalue early education |
| U-shaped | Prioritizes first and last interactions | Lead gen funnels with clear opening and closing moments | Highlights awareness and conversion | Compresses the middle of the journey |
| W-shaped | Prioritizes first touch, lead creation, and final conversion | B2B SaaS with distinct funnel stages | Better aligns with CRM milestones | Still rule-based and still opinionated |
| Algorithmic | Uses statistical or machine learning analysis to assign dynamic fractional credit | Mature teams with strong data pipelines | Adapts to observed path behavior | Needs high-quality data and stakeholder trust |
| Custom hybrid | Combines rules with business-specific logic | Companies with unique motions | Flexible and often practical | Maintenance and governance can get messy |

A practical selection framework

Start with the model your team can support operationally, then earn the right to add complexity.

- Use linear when you need a credible baseline and the organization is still stuck in single-touch habits.

- Use time-decay when recency carries real weight in the sales cycle.

- Use W-shaped when CRM milestones are reliable enough to anchor the model.

- Use algorithmic when identity resolution, conversion volume, and event quality are already strong.

The best model is the one your team will use to make decisions. That is the attribution-to-action gap in practice. A slightly less precise model that changes budget mix, follow-up timing, or onboarding design is worth more than a complex model that lives in a dashboard and goes nowhere.

The Data and Tools You Need for Accurate Attribution

Teams rarely fail at attribution because they picked the wrong model. They fail because the data cannot support the decision they want to make.

That distinction matters. A neat dashboard can assign fractional credit across channels and still be useless for budget, lifecycle, or product decisions if the underlying journey is incomplete. That is the attribution-to-action gap. Credit gets assigned, but nobody trusts it enough to change spend, sales follow-up, or onboarding.

Start with the minimum viable journey data

Accurate attribution depends on a connected record of touches across the systems that shape pipeline and revenue. For a typical SaaS company, that usually means four groups of data:

- Ad platform data: Google Ads, LinkedIn Ads, Meta, affiliates, and partner campaigns

- Web and product analytics: landing page visits, form fills, signup paths, activation events, and key in-app behaviors

- CRM data: lead creation, qualification, opportunity stage changes, closed-won, and expansion activity

- Lifecycle and engagement systems: email platforms, webinar tools, sales engagement tools, support systems, and sometimes community or event platforms

Coverage matters, but consistency matters more. If UTMs are messy, campaign naming changes by team, or lead source fields get overwritten in the CRM, the model will produce tidy output from broken inputs. That usually leads to the wrong action. Paid search gets too much credit. Product-qualified signals get ignored. Sales touches disappear from the story entirely.
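A small normalization step before modeling catches much of this. The sketch below assumes raw UTM values arrive as a dictionary per touch. The alias table is hypothetical and would need to reflect your own naming history.

```python
# Hypothetical alias table: map the variants teams actually typed to one canonical source.
SOURCE_ALIASES = {
    "fb": "facebook",
    "facebook.com": "facebook",
    "li": "linkedin",
    "linkedin.com": "linkedin",
    "adwords": "google",
}


def normalize_utm(raw):
    """Lowercase, trim, and canonicalize UTM fields; mark missing values explicitly."""
    cleaned = {}
    for key in ("utm_source", "utm_medium", "utm_campaign"):
        value = (raw.get(key) or "").strip().lower().replace(" ", "_")
        cleaned[key] = value or "unknown"
    cleaned["utm_source"] = SOURCE_ALIASES.get(cleaned["utm_source"], cleaned["utm_source"])
    return cleaned


print(normalize_utm({"utm_source": " FB ", "utm_medium": "Paid Social"}))
# {'utm_source': 'facebook', 'utm_medium': 'paid_social', 'utm_campaign': 'unknown'}
```

Writing `unknown` instead of silently dropping the field keeps tracking gaps visible in reports, which makes them easier to fix at the source.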

For teams cleaning up collection and reporting basics, outside support can help. This overview of Google Analytics consulting services shows the kind of implementation work companies often need when tracking quality is uneven.

Identity resolution decides how believable the model is

The hard part is not collecting events. The hard part is connecting them to the same buyer or account over time.

A real path often looks messy. Someone clicks a paid social ad on a phone, reads comparison content later from organic search on a laptop, joins a webinar from a work email, then starts a trial after an SDR follow-up. If those touches live under separate IDs, attribution will undercount influence in the middle of the journey and over-credit the final conversion step.

That problem gets worse in B2B. Shared devices, forwarded emails, account-level buying committees, and offline sales activity all create stitching gaps. Rule-based models can tolerate some of that mess. More advanced models are less forgiving. If identity is weak, sophistication gives a false sense of precision.
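At its simplest, deterministic stitching is a grouping problem: if two identifiers were ever observed together, they belong to the same person. The sketch below uses a union-find structure over hypothetical identifier pairs. It assumes every link is trustworthy, which shared devices and forwarded emails will violate in practice.

```python
def stitch_identities(links):
    """Group identifiers that are connected by any chain of observed links.

    links: iterable of (identifier_a, identifier_b) pairs, for example a cookie
    seen alongside an email address at form submission.
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path compression
            x = parent[x]
        return x

    for a, b in links:
        parent[find(a)] = find(b)

    groups = {}
    for identifier in parent:
        groups.setdefault(find(identifier), set()).add(identifier)
    return list(groups.values())


# Hypothetical links: two devices resolve to one email, a third to another.
links = [
    ("cookie:abc", "email:ana@example.com"),
    ("cookie:xyz", "email:ana@example.com"),
    ("cookie:zzz", "email:bo@example.com"),
]
for group in stitch_identities(links):
    print(sorted(group))
```

Rolling people up to accounts is a separate step, usually by email domain or CRM account ID, and carries its own error modes.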

Choose tools that make attribution operational

A practical stack has three jobs. Capture touchpoints. Standardize them. Put the output where teams already make decisions.

| Layer | What it does | Common examples |
|---|---|---|
| Collection | Captures events and source data | Google Analytics, product analytics tools, ad platforms |
| Unification | Standardizes and joins records | Data warehouse, CDP, ETL pipelines |
| Attribution and reporting | Applies model logic and delivers readouts | Attribution software, BI tools, CRM dashboards |

The right stack is usually the one your team can maintain without heroics. A warehouse-first setup gives more control, but it asks more of analytics and engineering. Off-the-shelf attribution tools speed up reporting, but they can hide assumptions your finance, product, or sales teams will eventually question. The trade-off is speed versus control, not good versus bad.

Freshness matters too. Teams that rely on delayed reports often spot channel shifts after the budget is already spent or after poor-fit users have moved through onboarding. Stronger real-time data analytics practices help teams inspect path behavior while they still have time to change campaign mix, routing, or lifecycle messaging.

One rule holds up in practice. Use fewer data sources well before adding more data sources poorly.

Start with reliable conversion events, stable naming conventions, durable identifiers, and a clear join between marketing, CRM, and product data. That foundation does more for attribution accuracy than another vendor demo or a more advanced model.

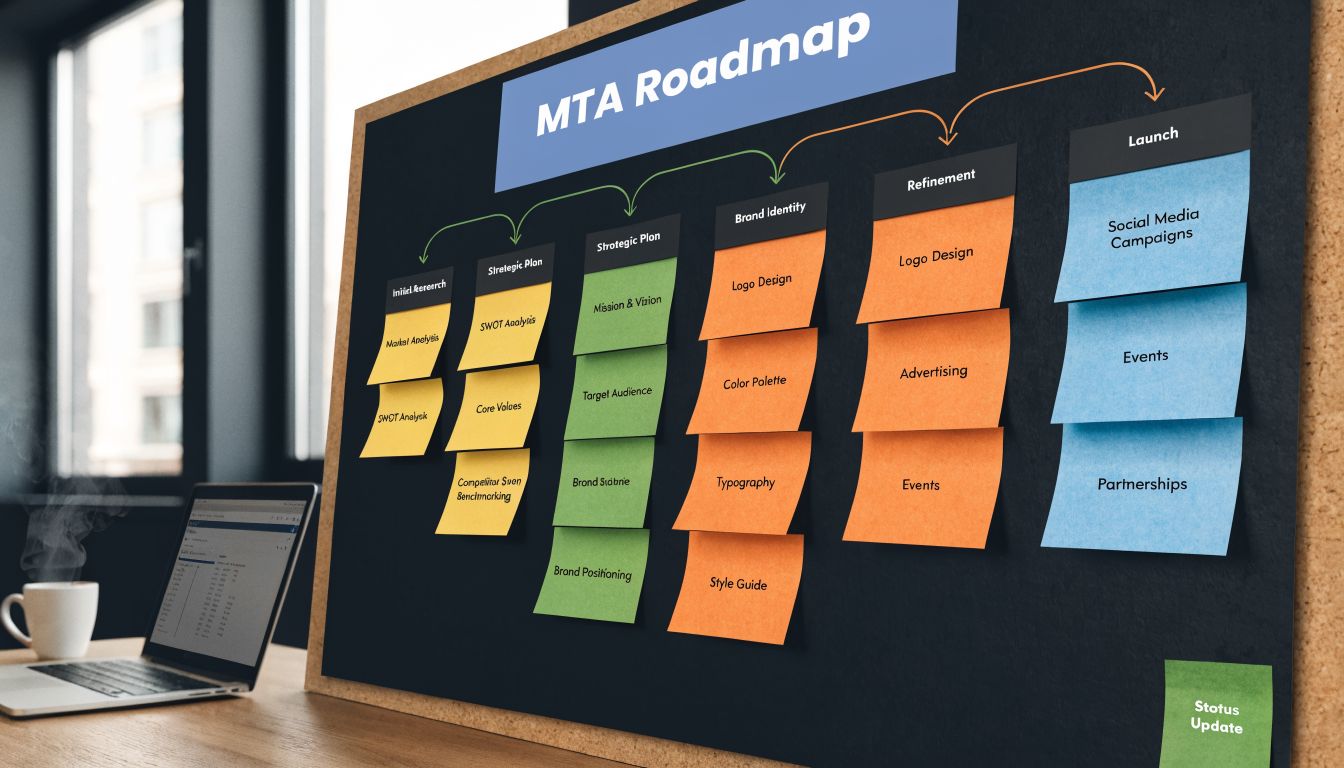

A Practical Roadmap to Implement Multi-Touch Attribution

Attribution rollouts usually stall because no one decided what the model should change. A dashboard gets built, reports start circulating, and budget, onboarding, and sales routing stay exactly the same.

A practical rollout starts with one decision the business is willing to act on. Keep the scope narrow enough that people can inspect the output, challenge it, and still trust it.

Step 1: define the decision, not just the metric

Start with the operating question.

Examples:

- Budgeting: Which channels should get more spend because they influence qualified pipeline, not just lead volume?

- Lifecycle: Which touches consistently move trial users toward activation?

- Sales alignment: Which early interactions produce leads that progress cleanly through the pipeline?

This step sounds basic, but it is where many programs go off course. If the only output is a credit split by channel, teams admire the chart and keep making the same decisions they made under last-click.

Step 2: map the journey your revenue process actually follows

Channel maps are usually too shallow. They capture ad clicks, sessions, and form fills, then ignore the moments that change deal quality or conversion odds.

For B2B SaaS, the useful map usually runs from first touch to lead creation, opportunity creation, closed-won, and early product adoption. It should also include touches outside classic marketing reporting, such as SDR outreach, webinar attendance, demo completion, onboarding milestones, and support contact. If your team already uses behavioral segmentation for product and lifecycle analysis, use those segments here too. They help distinguish a high-intent path from a noisy one.

Step 3: build the minimum viable pipeline

The first version should answer the question from Step 1 with data your team can defend.

A sensible starting set often includes:

- Acquisition signals: ad platform data, UTMs, referral source

- Conversion milestones: form fills, demo requests, trial starts

- CRM movement: lead creation, opportunity stage changes, closed-won

- Lifecycle signals: email engagement and a small set of activation events

Resist the urge to ingest every event. In early implementations, clean timestamps, stable campaign names, and consistent IDs matter more than event volume.

Step 4: choose a baseline model people can use

A baseline model should match how the business sells. It should also be simple enough for finance, sales, and growth to debate without a statistics seminar.

For many B2B SaaS teams, a W-shaped model is a practical starting point because it gives meaningful credit to first touch, lead creation, and conversion instead of overweighting only the first or last interaction. That makes it useful in funnels where the lead creation moment materially changes sales follow-up and deal quality. The point is not that W-shaped is universally correct. The point is that it often reflects how revenue is generated better than last-click, and operators can understand what the model is rewarding.

Step 5: review the output with the teams who own the funnel

Attribution reviews should include more than marketing.

Product can pressure-test whether high-credit sources also activate well. Sales can flag channels that create leads but stall before opportunity. Lifecycle can inspect whether nurture touches show up in paths that convert faster. Customer success can identify acquisition sources tied to poor onboarding or early churn.

Ask specific questions:

- Which touches appear repeatedly before strong opportunities?

- Where do journeys break before lead creation or activation?

- Which channels generate volume but weak downstream progression?

- Which sources look efficient in acquisition reporting and expensive once product behavior is included?
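The volume-versus-progression question can be answered with a simple tally before anyone opens a BI tool. This sketch assumes a list of lead records exported from the CRM; the field names `source` and `became_opportunity` are hypothetical.

```python
def progression_by_source(leads):
    """Count leads per source and the share that progressed to opportunity."""
    totals, progressed = {}, {}
    for lead in leads:
        source = lead["source"]
        totals[source] = totals.get(source, 0) + 1
        progressed[source] = progressed.get(source, 0) + int(lead["became_opportunity"])
    return {
        source: {"leads": n, "opportunity_rate": progressed[source] / n}
        for source, n in totals.items()
    }


# Hypothetical CRM export.
leads = [
    {"source": "paid_search", "became_opportunity": False},
    {"source": "paid_search", "became_opportunity": False},
    {"source": "paid_search", "became_opportunity": True},
    {"source": "paid_search", "became_opportunity": False},
    {"source": "webinar", "became_opportunity": True},
    {"source": "webinar", "became_opportunity": True},
]
for source, stats in progression_by_source(leads).items():
    print(source, stats)
```

In this made-up sample, paid search produces twice the leads and a quarter of the progression rate. That is the kind of finding that should leave the review with an owner.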

The review is where the attribution-to-action gap either closes or widens. A model that changes budget allocation, routing rules, or onboarding priorities has value. A model that produces interesting slides does not.

Field note: If no one leaves the review with an owner, deadline, and change to test, the attribution program is still in reporting mode.

Step 6: earn trust, then add complexity

Once the baseline is stable, expand carefully. Add harder identity stitching problems. Bring in offline touches. Compare alternate models against decisions already in motion and see whether they improve outcomes or just reshuffle credit.

That order matters. Teams trust attribution when they can trace the logic from touchpoint to business action. Mature programs are built through repeated cycles of instrumentation, review, action, and refinement. That is how attribution becomes an operating system for growth instead of another analytics project.

Connecting Attribution to Action with Behavioral Signals

Picking a multi-touch model is the easy part. Getting teams to act on it is where attribution programs usually stall.

Attribution can show that webinars, paid search, and lifecycle email all influenced the same deal. That still leaves the harder question unanswered. What actually made that journey convert well, or break down after conversion?

A team might see that webinar-sourced leads create a healthy share of pipeline. That is a good start, but the deeper questions come next. Do those accounts reach activation faster? Do they hit the same onboarding bottleneck? Do they open more support tickets in the first month? Do they retain, expand, or stall?

That is the attribution-to-action gap. A model assigns credit. Behavioral signals explain what to fix, where to invest, and which customer journeys are worth repeating.

Attribution estimates contribution. Behavioral data explains what happened next.

Channel and touchpoint data are useful for budget allocation, but they rarely tell a product, lifecycle, or sales team what to do next. To make attribution operational, teams need to connect acquisition paths to what users do after they arrive.

That usually means joining attribution data with signals such as:

- activation events in product analytics

- onboarding completion and time-to-value

- support ticket themes in Zendesk or Intercom

- sales objections from call notes and CRM activity

- renewal, expansion, and early churn indicators

Without that layer, teams overreact to channels that generate conversions but underperform in product adoption or retention.

A practical example

Suppose the model shows that one campaign influences a large share of qualified pipeline. A channel-only read says to increase spend. A better read checks whether those accounts behave like strong customers after the handoff.

Review the cohort. Look at activation rates, onboarding drop-off, support issues, and sales feedback. If the same friction appears again and again, the next action may have nothing to do with media budget.
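A cohort review like this can start as a few lines of aggregation over joined account records. The sketch assumes attribution, product, and support data have already been joined per account; the field names and sample values are hypothetical.

```python
from statistics import mean


def cohort_summary(accounts, campaign):
    """Summarize post-conversion behavior for accounts attributed to one campaign."""
    cohort = [a for a in accounts if a["campaign"] == campaign]
    if not cohort:
        return None
    return {
        "accounts": len(cohort),
        "activation_rate": mean(1.0 if a["activated"] else 0.0 for a in cohort),
        "avg_tickets_first_30d": mean(a["tickets_first_30d"] for a in cohort),
    }


# Hypothetical joined records: attributed campaign plus product and support outcomes.
accounts = [
    {"campaign": "spring_webinar", "activated": True, "tickets_first_30d": 4},
    {"campaign": "spring_webinar", "activated": False, "tickets_first_30d": 2},
    {"campaign": "spring_webinar", "activated": True, "tickets_first_30d": 6},
    {"campaign": "spring_webinar", "activated": False, "tickets_first_30d": 0},
    {"campaign": "brand_search", "activated": True, "tickets_first_30d": 1},
]
print(cohort_summary(accounts, "spring_webinar"))
```

Comparing this summary against the same numbers for all other accounts is what turns "this campaign drives pipeline" into "this campaign drives pipeline that struggles to activate."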

The right move could be to:

- fix one onboarding step that slows down a high-value cohort

- change the sales handoff because intent is high but post-demo progression is weak

- rewrite nurture around a feature customers expect but cannot find

- deprioritize a source that looks efficient on lead volume and expensive on retention

That is where multi-touch attribution starts influencing growth, product, and customer success instead of staying trapped in marketing reporting.

Add a behavior layer to every attributed journey

A workable setup does not need perfect data from every system on day one. It needs a few shared milestones that teams trust.

| Signal type | Example source | What it adds |
|---|---|---|
| Product engagement | in-app events, usage analytics | Shows whether attributed users reach activation and adoption |
| Support friction | Zendesk, Intercom | Identifies recurring blockers by acquisition cohort |
| Sales context | CRM activity, call notes, transcripts | Explains objections, timing, and qualification quality |
| Retention outcomes | renewal data, health scores | Connects early journey quality to long-term value |

For teams trying to make those patterns usable, behavioral segmentation helps because it groups customers by actions and product behavior, not just acquisition source.

One caution matters here. More signals do not automatically improve decisions. If identity resolution is weak, event definitions are inconsistent, or support tags are messy, the analysis turns into storytelling. Start with a narrow set of behaviors that map to business outcomes: activation, adoption, support friction, retention.

Use attribution to change decisions, not decorate dashboards

The strongest attribution programs produce recommendations with owners. Marketing changes budget mix. Product fixes a recurring onboarding break. Sales updates qualification or follow-up sequences. Customer success flags acquisition cohorts with higher early-risk patterns.

That operating model matters more than the model family you choose.

A useful attribution view sounds like this: this path generates revenue, but only when users complete these two onboarding actions within the first week. Or: this source creates cheap pipeline, but the cohort churns early and generates heavy support load.

Those are decisions teams can use. Plain channel credit is not enough.

Common Pitfalls in Attribution Modeling and How to Avoid Them

Attribution projects usually fail in ordinary ways. Not because the math is impossible, but because the operating habits around the model stay weak.

Treating the model like a one-time setup

Teams launch attribution, validate the first dashboard, then leave it untouched for quarters. Meanwhile campaigns change, GTM motions evolve, tracking breaks, and the model gradually drifts away from reality.

Do this instead: review model assumptions on a set cadence. Recheck naming conventions, funnel stages, and major event definitions whenever the business changes how it acquires or converts customers.

Building on siloed or inconsistent data

One team uses CRM source fields. Another uses UTMs. Product events use different IDs than marketing events. Attribution reports still get published, but everyone argues with them.

Do this instead: align on a shared source taxonomy and a small set of trusted conversion milestones. Fewer clean signals beat a large volume of disputed ones.

Over-reading one channel

Even after adopting multi-touch attribution modeling, teams can slip back into single-channel thinking. They use the model to validate an existing bias rather than understand interaction across the full journey.

Do this instead: inspect path combinations, not only channel totals. Look at how touches work together and where the sequence changes quality.
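One way to inspect combinations is to treat each ordered path as its own unit and compare conversion rates across them. The sketch below assumes the same path format as a typical journey export; the function name and sample data are illustrative.

```python
from collections import Counter


def path_conversion_rates(paths, min_count=1):
    """Conversion rate per exact ordered path, ignoring rarely seen paths."""
    seen = Counter()
    converted = Counter()
    for channels, did_convert in paths:
        key = " > ".join(channels)
        seen[key] += 1
        if did_convert:
            converted[key] += 1
    return {key: converted[key] / n for key, n in seen.items() if n >= min_count}


# Hypothetical journeys: the same closing channel behaves differently by what preceded it.
paths = [
    (["paid_social", "branded_search"], True),
    (["paid_social", "branded_search"], False),
    (["branded_search"], False),
    (["webinar", "branded_search"], True),
]
for path, rate in path_conversion_rates(paths).items():
    print(f"{path}: {rate:.0%}")
```

With real data, raise `min_count` so that a path seen once does not look like a 100 percent converter.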

Attribution should challenge your assumptions. If it only confirms what the loudest stakeholder already believed, you're not using it well.

Getting stuck in analysis paralysis

Some teams keep slicing the same data without changing anything operationally. They compare models, rebuild dashboards, and debate weights while the pipeline and product issues stay the same.

Do this instead: require every attribution review to end with a decision. Shift budget, test a sequence, fix a handoff, or investigate a friction point. No action means no value.

Ignoring qualitative evidence

Quantitative path data shows movement. It doesn't capture frustration, confusion, urgency, or buyer objections very well on its own.

Do this instead: pair attribution readouts with support trends, sales notes, onboarding observations, and usage behavior. That combination usually reveals what the numbers alone can't.

Frequently Asked Questions About Multi-Touch Attribution

How does privacy change multi-touch attribution modeling?

Privacy constraints make attribution harder, especially when teams depend heavily on third-party cookies or weak identity stitching. The practical response is to rely more on first-party data, durable identifiers where consent allows, server-side collection where appropriate, and cleaner integration across CRM, product, and lifecycle systems. Privacy doesn't make attribution impossible. It forces better data discipline.

Can multi-touch attribution include non-marketing touchpoints?

Yes, and it often should. In SaaS, some of the most important influences happen after the initial acquisition event. Onboarding steps, product activation milestones, sales calls, support interactions, and lifecycle emails can all shape whether a customer converts, expands, or churns. If your model stops at campaign touches, it misses part of the journey that determines value.

How much historical data do I need before I start?

You can start sooner than generally assumed if you use a simple rule-based model and clean inputs. What's required depends on complexity. Rule-based models can work with modest but consistent data. Algorithmic models need much stronger coverage and enough conversion volume to train reliably. If your tracking is still unstable, spend your time fixing instrumentation before chasing sophistication.

What is the difference between attribution and marketing mix modeling?

Attribution focuses on user- or journey-level touchpoints and tries to estimate how specific interactions contribute to conversion. Marketing mix modeling works at a broader aggregate level and is usually better for understanding channel impact across larger time periods, especially where direct user-level tracking is limited. In practice, many mature teams use both for different decisions.

Should product teams care about attribution?

Yes, if they care about revenue impact. Attribution can show which journeys create valuable users. Product data can show where those users succeed or struggle. When those datasets are connected, roadmap discussions get much sharper because teams can tie customer friction and feature demand back to commercial outcomes instead of anecdotes.

If your team is trying to close the gap between attribution insights and product action, SigOS can help. It connects support tickets, chat transcripts, sales calls, and usage signals so product and growth teams can see which issues correlate with churn, expansion, and revenue impact, then prioritize fixes with clearer commercial context.
