Why Companies Fail: Prevent Business Disasters with AI

Discover why companies fail, from market need to customer neglect. Spot predictive signals in your data and use AI to prevent disaster in 2026.

Most companies don't fail in a dramatic instant. They fail in slow motion, while leadership teams mistake weak signals for temporary noise.

The cleanest place to start is survival data. According to 2024 BLS-based analysis summarized by Commerce Institute, 20.4% of businesses fail within their first year, and 49.4% fail by the fifth year. That alone reframes the conversation. The popular line that half of all businesses die in year one is wrong, but the corrected version isn't comforting. It shows that failure usually takes time, and that gives operators something valuable: a window to detect it.

That window is where many teams underperform. They watch revenue, pipeline, and cash. They don't watch the leading indicators buried in support tickets, onboarding friction, sales objections, usage drop-offs, and repeated workarounds. By the time the board sees the problem in topline metrics, customers have often been describing it for months.

Why companies fail isn't a mystery. In many cases, it's a pattern recognition problem.

The Uncomfortable Truth About Business Failure

Business failure is common enough to study, but still misunderstood in practice. The popular explanation focuses on dramatic external shocks. The better explanation is slower and more measurable. Companies often spend months, sometimes years, accumulating evidence that demand is weaker than expected, onboarding is harder than buyers anticipated, or the product solves a narrower problem than leadership believes.

CB Insights found that lack of market need was the most commonly cited reason startups failed. That matters because it shifts the discussion away from execution mistakes in isolation. A company can hire well, ship on time, and still underperform if the underlying customer problem is not painful enough, frequent enough, or valuable enough to solve at scale.

The pattern usually appears before the financials make it obvious.

The first signs rarely show up in board slides. They show up in messy operational data. Support tickets start repeating the same friction points. Sales calls contain the same stalled objection. Onboarding sessions require the same workaround. Renewal conversations drift toward value confusion rather than price. Each signal looks small on its own. In aggregate, they describe weakening product-market fit with surprising precision.

That is the uncomfortable part. Many leadership teams are not short on customer input. They are short on systems that convert raw feedback into ranked, decision-ready evidence. As a result, they overvalue lagging metrics such as revenue, churn, and pipeline coverage, and undervalue the leading indicators sitting in call transcripts, survey comments, implementation notes, and lost-deal summaries.

Practical rule: If the same complaint appears across sales, support, and onboarding, treat it as a measurable risk to retention, expansion, or conversion, not as anecdotal noise.
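The rule above can be made mechanical. Here is a minimal sketch, assuming feedback has already been tagged with a theme and a source channel. The `FeedbackItem` type, the channel labels, and the `cross_channel_themes` helper are hypothetical names used for illustration, not part of any specific tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackItem:
    channel: str  # assumed labels: "sales", "support", "onboarding"
    theme: str    # assumed to come from a shared tagging taxonomy


def cross_channel_themes(items, required_channels=("sales", "support", "onboarding")):
    """Return themes seen in every required channel, sorted by name."""
    channels_by_theme = defaultdict(set)
    for item in items:
        channels_by_theme[item.theme].add(item.channel)
    required = set(required_channels)
    # A theme qualifies only if its set of channels covers all required ones.
    return sorted(t for t, seen in channels_by_theme.items() if required <= seen)


items = [
    FeedbackItem("sales", "sso setup"),
    FeedbackItem("support", "sso setup"),
    FeedbackItem("onboarding", "sso setup"),
    FeedbackItem("support", "export to csv"),
]
print(cross_channel_themes(items))  # ['sso setup']
```

The sketch ignores volume on purpose. A theme that crosses every channel earns a closer look even when each team has heard it only once.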

A failed company often looks irrational only in hindsight. In real time, the issue is usually analytical. The warning signs exist, but they are scattered across tools, teams, and customer language. The firms that avoid preventable failure are often the ones that can detect those patterns early enough to act before the income statement confirms them.

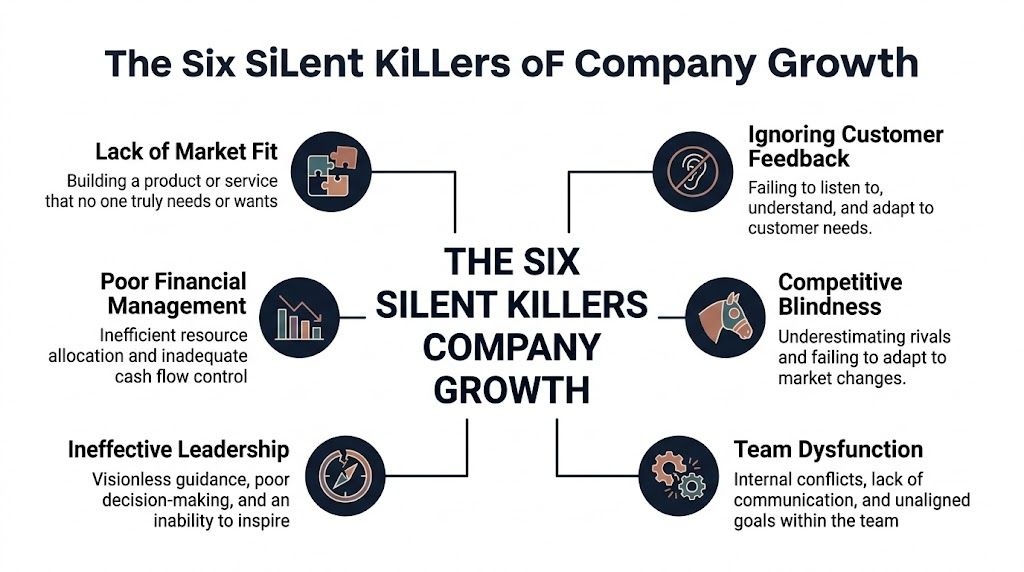

The Six Silent Killers of Company Growth

Growth problems rarely travel alone. They stack. A team launches without enough market pull, spends inefficiently to compensate, makes rushed product decisions, and then stops hearing customers clearly because every function is defending its own interpretation of reality.

A useful mental model is a cracked foundation. One crack may not collapse the structure. Several connected cracks will.

The six killers work together

- Lack of market fit: Teams build something they can explain, demo, and fund, but not something buyers urgently need. Sales then becomes education instead of conversion.

- Poor financial management: Cash gets assigned to activity rather than learning. Companies spend to preserve the appearance of traction, not to sharpen the product's value.

- Ineffective leadership: Leaders set goals, but weak leaders don't create an evidence standard for decisions. They let intuition outrank customer reality for too long.

- Ignoring customer feedback: This doesn't always mean refusing to listen. More often, it means collecting feedback without separating broad patterns from one-off noise.

- Competitive blindness: Teams don't need to obsess over rivals, but they do need to understand substitute behavior. If customers solve the problem another way, that still counts as competition.

- Team dysfunction: Support hears one thing, sales hears another, product sees something else in usage data, and nobody reconciles the story. Misalignment becomes strategy.

Why these causes compound

Each failure mode makes the next one harder to reverse.

A company with weak market fit often spends more on acquisition to force growth. That raises pressure on leadership. Under pressure, leaders can centralize decisions and discount contradictory customer evidence. Team friction follows because departments are now measured against targets the product can't support naturally.

A company can survive one weakness for a while. It usually can't survive several interacting weaknesses that all distort decision-making.

This is why lists of failure reasons often feel incomplete. They present causes as separate categories when they're better understood as a system. Financial stress is often downstream of product confusion. Team dysfunction is often downstream of leadership that never established a shared truth source. Customer blindspots aren't a side issue. They're the mechanism that lets every other problem persist longer than it should.

A better diagnostic question

Instead of asking which one of these six applies, ask which one appears first in your operating rhythm:

- In strategy reviews: are decisions justified by evidence or conviction?

- In roadmap planning: are priorities tied to customer pain or internal preference?

- In support workflows: do repeated complaints trigger analysis or just resolution?

- In leadership meetings: does anyone reconcile qualitative feedback with revenue outcomes?

If the answer is inconsistent, the issue isn't isolated. The company may already be operating with fractured feedback loops.

Building for No One: The Product-Market Fit Delusion

Some failed companies look absurd in hindsight because the product was overbuilt for a problem buyers didn't feel strongly enough. Juicero became the symbol of that mistake. It wasn't just an expensive gadget. It was a company that treated engineering sophistication as proof of demand.

The lesson isn't that ambitious products are bad. It's that teams often confuse product elegance with market pull. Those are not the same thing.

Funding can hide the truth

According to Harvard Business School Working Knowledge's summary of startup failure research, a FRACTL study of 200+ post-mortems ranks non-viable business models, including product-market fit gaps, as the top reason for failure. The same source notes that excessive funding can allow management to ignore signs that, in blunt terms, "dogs aren't eating the dog food," and that fewer than 20% of startups analyze customer behavior on an ongoing basis while funding is plentiful.

That's one of the least discussed answers to why companies fail. Capital can delay feedback. When the company has enough money, bad fit doesn't create immediate pain. Teams can subsidize churn, over-hire ahead of demand, and interpret slow adoption as a marketing issue rather than a product problem.

The product-market fit delusion in SaaS

In SaaS, this shows up in a familiar sequence:

- Early logos create false confidence: a few customers buy because the vision is compelling, not because the workflow is indispensable.

- The roadmap expands too fast: teams add breadth before proving depth.

- Vanity metrics replace behavioral truth: demos booked, pilots launched, and feature announcements start standing in for durable usage.

- Renewals become the ultimate referendum: by then, the company has built cost structure around assumptions that should have been tested earlier.

A delusional product-market fit narrative sounds polished. Leadership says the market is "early," customers "love the vision," and adoption friction is "part of category creation." Sometimes that's true. Often it's a story the team tells itself to avoid the harder conclusion that the product still isn't solving an urgent enough problem.

"Dogs aren't eating the dog food" is crude, but it's operationally useful. It forces a behavior question, not a branding question.

What honest validation looks like

Strong teams look for evidence that customers are changing behavior, not just expressing interest. They ask whether users return without prompting, whether support volume points to missing value or normal onboarding friction, and whether sales objections repeat in patterns that suggest a structural mismatch.

They also resist the temptation to frame every request as validation. A customer asking for a feature isn't always a buying signal. It may be a sign that your current workflow doesn't fit their actual job.

One of the better reads on this tension is "Finding product market fit is a trap." Its value isn't that it dismisses fit. It challenges the simplistic idea that product-market fit is a milestone you declare once and then scale from forever. In practice, fit is dynamic. It has to be re-earned as segments shift, competitors evolve, and customer expectations change.

The dangerous version of optimism in SaaS isn't ambition. It's scaling before customer behavior has made the case.

The Dangerous Echo Chamber of Ignoring Customers

Companies often state they listen to customers. Many do. The failure happens one step later, when they can't interpret what they're hearing.

Feedback by itself is not insight. It's raw material. If a company collects surveys, support tickets, sales call notes, chat transcripts, and customer success updates without a reliable way to reconcile them, the result is often an echo chamber. Teams hear what confirms their priors, or what comes from the loudest account, or what arrived most recently.

Listening isn't the same as understanding

This problem gets worse as companies scale. According to the US Chamber's discussion of business failure and AI-era feedback challenges, 68% of SaaS leaders struggle with "feedback overload," manual triage misses 75% of revenue-impacting issues, and a CB Insights 2025 update shows a 28% failure rise in Series B+ SaaS from undetected churn risks, compared with 12% for AI-integrated peers. Because those are post-2025 claims, they should be read as recent reported trends rather than timeless truths. Their importance is directional. More feedback isn't automatically better if the operating system for interpreting it doesn't improve too.

The result is familiar. Product hears enterprise requests and overfits to edge cases. Support sees recurring frustration but can't quantify its commercial weight. Sales champions "must-have" features that matter only in late-stage deals. Leadership gets a stack of anecdotes and calls it customer centricity.

The loudest signal is often the wrong one

Teams commonly overweight three kinds of feedback:

- Escalated feedback: the issue that reaches executives feels strategic even when it's isolated.

- Articulate feedback: customers who explain a problem well can sound more representative than they are.

- Recent feedback: fresh pain displaces persistent patterns that have larger long-term cost.

A better practice starts with disciplined collection. If your inputs are fragmented, the analysis will be fragmented too. This guide on how to gather customer feedback is useful because it treats collection as an operational design problem, not just a survey problem.

The second step is synthesis. Teams need one view across support, sales, product analytics, and retention conversations. Without that, "customer voice" becomes a competition between departments.

The echo chamber is a strategic failure

The most dangerous customer blindspot isn't ignoring complaints outright. It's believing you've heard the market because every team brought examples. Examples don't equal evidence.

If five departments describe the same customer in five different ways, the company doesn't have a feedback function. It has competing narratives.

That distinction matters because companies usually don't lose customers over a single ticket theme. They lose them when several small frictions cluster around one broken job to be done. Manual review misses those clusters. Humans are good at hearing urgency. They're less reliable at spotting repeated relationships across thousands of interactions.

That's why the old advice to "listen to customers" now feels incomplete. Listening is table stakes. Interpretation is the hard part.

Decoding the Data: Finding Predictive Failure Signals

Most failure analysis is retrospective. By the time the company names the root cause, the evidence has already become obvious in churn, contraction, or stalled pipeline. Product and growth teams need a forward-looking system instead.

The obstacle isn't lack of data. It's operationalization. According to Data Science PM's analysis of why big data projects fail, 85% of big data projects fail, often because of a deployment gap where models don't make it into production. The same source says that models degrade without continuous monitoring, which makes churn predictions unreliable and correlates with 10-25% higher customer attrition rates.

That is the hidden trap in predictive work. Building a dashboard or model is not the same as creating an early-warning system. If the analysis isn't embedded in daily decisions, it's just a smarter archive.

What leading indicators actually look like

The most useful signals are combinations of behavior and business consequence. A rise in support volume alone may mean growth. A rise in support volume concentrated around one workflow, paired with lower activation or renewal friction, means something else entirely.

Use this kind of mapping:

| Failure Mode | Leading Indicator (Qualitative) | Measurable Signal (Quantitative) |
|---|---|---|
| Lack of market fit | Customers describe the product as interesting but not essential | Repeated drop-off after onboarding or trial, plus weak repeat usage |
| Flawed product experience | Users create workarounds or contact support around the same workflow | Rising concentration of tickets tied to a single feature area |
| Financial mismanagement | Leadership funds roadmap items with unclear buyer pull | Spend and headcount growth detached from retention and expansion outcomes |
| Customer feedback blindspots | Teams disagree on what customers care about most | High volume of uncategorized or unresolved feedback themes |
| Competitive blindness | Deals are lost to alternatives solving the same job differently | Sales call notes repeatedly reference the same substitute or objection |
| Team dysfunction | Support, product, and sales report conflicting priorities | Long delay between signal detection and roadmap response |
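One signal in that mapping, a rising concentration of tickets tied to a single feature area, is simple to compute. The sketch below assumes each ticket already carries a feature-area tag. The function names and the 10-point threshold are illustrative assumptions, not an established standard.

```python
from collections import Counter


def ticket_concentration(feature_areas):
    """Return (top_area, share): the most common area and its fraction of tickets."""
    if not feature_areas:
        return None, 0.0
    counts = Counter(feature_areas)
    top_area, top_count = counts.most_common(1)[0]
    return top_area, top_count / len(feature_areas)


def concentration_is_rising(previous, current, min_increase=0.10):
    """True if the current top area's ticket share grew by at least min_increase."""
    top_area, current_share = ticket_concentration(current)
    if top_area is None or not previous:
        return False
    # Compare the same area across both periods, not two different "top" areas.
    previous_share = previous.count(top_area) / len(previous)
    return current_share - previous_share >= min_increase


last_month = ["billing", "import", "import", "reports"]
this_month = ["import", "import", "import", "billing"]
print(ticket_concentration(this_month))                 # ('import', 0.75)
print(concentration_is_rising(last_month, this_month))  # True
```

Using share rather than raw count separates "more customers, more tickets" from "one workflow is absorbing a growing fraction of all friction."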

Build a health dashboard, not a vanity dashboard

A useful dashboard answers four questions:

- **What friction is repeating?** Look for recurring themes across support tickets, call notes, and onboarding conversations.

- **Which accounts are showing risk behavior?** Usage decline matters more when it appears with complaint themes or stalled implementation.

- **What requests correlate with retention or expansion?** Not every feature request deserves equal weight. Some are noise. Some are proxies for major commercial opportunity.

- **How fast does the team act on patterns?** Detection matters. Response time matters more.
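The second question, which accounts are showing risk behavior, can be sketched as a rule that fires only when usage decline and complaint themes appear together. The `AccountSnapshot` fields and both thresholds below are assumptions chosen for illustration. A real system would tune them against observed outcomes.

```python
from dataclasses import dataclass


@dataclass
class AccountSnapshot:
    name: str
    usage_prev: int       # e.g. active sessions in the previous period
    usage_curr: int       # same metric in the current period
    open_complaints: int  # tickets tagged with a recurring friction theme


def at_risk(account, drop_threshold=0.25, min_complaints=2):
    """Flag an account only when usage decline and complaints co-occur."""
    if account.usage_prev == 0:
        return False  # no baseline, so a decline cannot be measured
    drop = (account.usage_prev - account.usage_curr) / account.usage_prev
    return drop >= drop_threshold and account.open_complaints >= min_complaints


accounts = [
    AccountSnapshot("acme", usage_prev=100, usage_curr=60, open_complaints=3),
    AccountSnapshot("globex", usage_prev=100, usage_curr=60, open_complaints=0),
    AccountSnapshot("initech", usage_prev=100, usage_curr=95, open_complaints=5),
]
print([a.name for a in accounts if at_risk(a)])  # ['acme']
```

Neither signal alone triggers the flag. That keeps the list short enough for someone to own the follow-up.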

Teams exploring this area often start with churn prediction in isolation. That's too narrow. Better work links support language, behavior changes, and account outcomes. A practical reference point is the guide on predicting customer churn, especially if you're trying to connect feedback patterns to account risk rather than treating churn as a purely historical metric.

Operator's lens: A leading indicator becomes valuable only when someone owns the action that follows it.

Why prediction fails inside the company

Many companies don't lack analytical sophistication. They lack decision plumbing. Data sits in Zendesk, Intercom, Jira, CRM notes, and warehouse tables that never reconcile in time for product decisions. Or teams do the work once, ship a model, and stop monitoring drift.

That creates a false sense of confidence. Executives think the company is "data driven" because a model exists. In reality, they may be steering on stale assumptions.

The right question isn't whether you have analytics. It's whether your analytics can still catch the thing that will hurt you next quarter.
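One common way to check whether a shipped model is still seeing the data it was trained on is the population stability index (PSI). The article does not prescribe a method, so treat this as one illustrative option. The sketch compares a baseline sample of a single numeric input against a recent sample. The bin count and the 0.25 alert level are widely used rules of thumb, not guarantees.

```python
import math


def population_stability_index(expected, actual, bins=5):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]  # e.g. weekly logins at training time
recent = [6, 7, 8, 9, 10, 8, 9, 10, 9, 10]  # the same feature, observed now

psi = population_stability_index(baseline, recent)
print("drift alert" if psi > 0.25 else "stable")  # drift alert
```

A check like this only helps if it runs on a schedule and someone is responsible for what happens when it fires.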

The AI Advantage: How Product Intelligence Prevents Failure

Manual analysis breaks first in exactly the companies that need clarity most. More customers create more tickets, more calls, more transcripts, more usage events, and more opportunities to miss the pattern hiding across them.

This is where AI product intelligence stops being a convenience and becomes infrastructure. The point isn't just speed. The point is that machines can continuously classify, correlate, and prioritize across a volume of feedback no human team can reliably synthesize on its own.

Why manual feedback analysis fails at scale

According to Magnimind Academy's analysis of data strategy failure, poor data quality and lack of real-time visibility cause 60-70% of data strategy failures in SaaS. The same source notes that delayed support ticket ingestion can hold back pattern detection by hours, missing churn signals and leading to decisions that can inflate churn by 15-30%.

Those numbers matter because they explain why so many "customer-centric" companies still act late. It's not always apathy. Often it's architecture. Data arrives too slowly, from too many systems, in formats that don't naturally connect.

What AI changes in practice

A strong AI-driven product intelligence workflow does a few things human teams struggle to do consistently:

- Unifies fragmented inputs: support tickets, chat transcripts, sales calls, usage metrics, and issue trackers stop living in separate narratives.

- Finds recurring patterns: repeated complaints get clustered even when customers describe the same pain differently.

- Links patterns to business outcomes: teams can see whether a bug, objection, or missing feature is associated with churn risk, stalled adoption, or expansion opportunity.

- Prioritizes by impact: roadmap debates move from opinion to evidence-backed tradeoffs.

- Runs continuously: analysis doesn't wait for the quarterly review or the next board deck.

That's the key advantage. AI doesn't replace product judgment. It sharpens it by removing the filtering problem. Leaders still decide what to build, what to fix, and what to defer. But they decide with a more reliable map of what customers are already telling them in aggregate.

The operating shift from reactive to preventative

For years, software teams used customer data mostly to explain what had already happened. AI product intelligence makes it possible to use the same data to intervene earlier.

That shift changes several habits:

| Old operating habit | Better AI-enabled habit |
|---|---|
| Review tickets by queue | Review themes by business impact |
| Prioritize by stakeholder intensity | Prioritize by recurring evidence and revenue risk |
| Treat support as a service function | Treat support data as product intelligence |
| Wait for churn or lost deals to confirm a problem | Act when patterns start clustering across accounts |

A good companion piece here is "AI is going to kill Company X." Its strongest point is strategic, not theatrical. Companies don't get displaced by AI because AI is fashionable. They get displaced when competitors use it to collapse response times, connect systems, and operationalize signals that slower firms still process manually.

What product leaders should implement now

You don't need an abstract "AI strategy." You need a failure-prevention workflow.

Start with a narrow operating loop:

- Ingest customer-facing data continuously: Pull from systems where friction is recorded, not just from analytics dashboards.

- Create a shared taxonomy of pain: Decide how the organization labels bugs, blocked workflows, missing capabilities, onboarding confusion, pricing friction, and competitive objections.

- Score patterns by business relevance: The important complaint isn't the most emotional one. It's the one that repeats across valuable accounts and aligns with poor outcomes.

- Push findings into execution systems: Insights should move into roadmap and engineering workflows, not stay in slide decks.

- Review signal quality every week: If the analysis isn't producing decisions, either the taxonomy is weak, the data is fragmented, or nobody owns the loop.
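The scoring step in that loop is where most of the judgment lives. The sketch below assumes themes have already been clustered and joined to account data. The `Pattern` fields and the weighting in `relevance_score` are hypothetical. They show the idea of ranking by breadth, revenue exposure, and observed outcomes instead of raw mention count.

```python
from dataclasses import dataclass


@dataclass
class Pattern:
    theme: str
    mentions: int           # raw volume; deliberately unused in the score
    accounts_affected: int  # distinct accounts raising the theme
    arr_at_risk: float      # annual recurring revenue of those accounts
    churned_accounts: int   # affected accounts that later churned or contracted


def relevance_score(p, total_arr):
    """Illustrative weighting: breadth x revenue share x (1 + bad-outcome rate)."""
    if p.accounts_affected == 0 or total_arr <= 0:
        return 0.0
    revenue_share = p.arr_at_risk / total_arr
    outcome_rate = p.churned_accounts / p.accounts_affected
    return p.accounts_affected * revenue_share * (1 + outcome_rate)


def rank(patterns, total_arr):
    """Order patterns from highest to lowest business relevance."""
    return sorted(patterns, key=lambda p: relevance_score(p, total_arr), reverse=True)


patterns = [
    Pattern("dark mode", mentions=40, accounts_affected=2,
            arr_at_risk=20_000, churned_accounts=0),
    Pattern("bulk import fails", mentions=12, accounts_affected=8,
            arr_at_risk=400_000, churned_accounts=3),
]
print([p.theme for p in rank(patterns, total_arr=1_000_000)])
# ['bulk import fails', 'dark mode']
```

The louder theme has more than three times the mentions and still ranks second, because it touches fewer accounts, less revenue, and no observed churn.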

For teams exploring that stack, AI for product development is a useful framing because it treats AI as an operational layer across discovery, prioritization, and execution.

The winning companies won't be the ones with the most feedback. They'll be the ones that turn feedback into action before competitors notice the pattern.

That is the modern answer to why companies fail. Many of them don't fail because the market gave no warning. They fail because the warning existed in forms the company couldn't process fast enough.

Building a Resilient Company in an Age of AI

Resilient companies don't rely on heroic intuition. They build systems that make reality hard to ignore.

The central lesson isn't just that many businesses fail. It's that failure often announces itself early through customer behavior, repeated friction, and misalignment between what teams believe and what users do. That makes resilience less mystical than most leadership books suggest. It's an operating discipline.

Resilience comes from quantified listening

Companies become fragile when they treat customer evidence as anecdotal, delayed, or politically negotiable. They become stronger when they turn that evidence into shared decision criteria. Support stops being a cost center. Sales objections stop being "field noise." Product analytics stops living in a separate technical lane.

When those signals are reconciled, leaders can see the difference between a rough patch and a structural problem. They can also distinguish a loud request from a high-impact one.

The new standard is predict and prevent

The old model was react and repair. A release underperformed. Churn rose. Sales pushed back. Then leadership launched a clean-up project.

That model is too slow now.

AI has changed the cost of ignorance. Companies that can detect patterns across feedback, usage, and commercial outcomes will make better prioritization calls earlier. Companies that can't will keep learning from lagging indicators and calling it discipline.

Why companies fail has always been partly about cash, execution, and leadership. Now it's also about whether the company can convert messy customer evidence into timely action. That isn't a side capability anymore. It's core operating infrastructure.

The firms that last will be the ones that build a culture of quantified listening, connect insight to execution, and treat weak signals as assets instead of interruptions.

If your team wants to move from reactive feedback review to continuous product intelligence, SigOS is built for that shift. It helps product, support, and growth teams turn customer conversations, usage signals, and issue data into prioritized actions so you can catch churn risks, roadmap gaps, and revenue opportunities before they become business problems.
