Your SaaS Strategy for Engagement: A Revenue-Driven Guide

Build a strategy for engagement that drives revenue. This guide covers KPIs, data-driven frameworks, and how to use AI to quantify the impact of user behavior.

Most advice on engagement still points teams in the wrong direction. It tells you to push up activity, celebrate open rates, track daily active users, and treat time-on-page like proof of value. That playbook creates dashboards that look busy and roadmaps that stay subjective.

A real strategy for engagement does something harder. It asks which behaviors predict retention, which friction points show up before churn, and which requests map to expansion. That changes the operating model. Product, support, success, and growth stop debating the loudest anecdote and start ranking signals by business impact.

In SaaS, engagement only matters when it connects to customer outcomes and company outcomes at the same time. A user clicking around a feature is not the same as a user adopting it into a workflow. A burst of support tickets is not just a service issue. It may be an onboarding breakdown, a pricing mismatch, or a blocker sitting between your team and an expansion conversation.

Beyond Vanity Metrics: An Introduction

The common assumption is that engagement is soft: it belongs in a customer marketing deck, not in a revenue meeting. That assumption breaks fast when you look at how modern teams are instrumenting product behavior, support interactions, and customer sentiment in one system.

The market is already moving in that direction. According to Zendesk's customer engagement metrics overview, the global customer engagement tools market is projected to more than double by 2030, and 79% of organizations have automated routine tasks. Both point to a shift from reactive metrics like ticket volume to predictive ones like churn rate that tie more directly to revenue.

That shift matters because vanity metrics are easy to collect and dangerous to trust. Teams can improve email clicks, lengthen sessions, or increase app visits without improving retention. If you've worked through the discipline of moving beyond vanity metrics, you already know the pattern. A number can rise while the business problem remains untouched.

Why the old model breaks

The old model usually looks like this:

- Marketing owns engagement: They report campaign interactions.

- Product owns usage: They report activity trends.

- Support owns complaints: They report volume and queue health.

- Revenue teams own renewals: They report outcomes after the fact.

Each team sees a slice. Nobody owns the link between behavior and money.

That’s why many SaaS companies end up with feature prioritization by intuition. One account executive says a request is urgent. Support says another issue is generating frustration. Product analytics says usage is flat. Without a common metric structure, every function is technically right and strategically misaligned.

The contrarian view

A stronger strategy starts with one belief. The most valuable engagement signal often isn’t in a survey response. It’s hidden in patterns across clicks, tickets, transcripts, and workflow behavior.

Engagement should be treated as evidence, not sentiment.

That’s also why a single north star metric rarely works if it’s disconnected from revenue mechanics. If you want a cleaner way to think about that relationship, this piece on north star metrics for product teams is useful because it forces the question that matters most: what customer behavior represents delivered value?

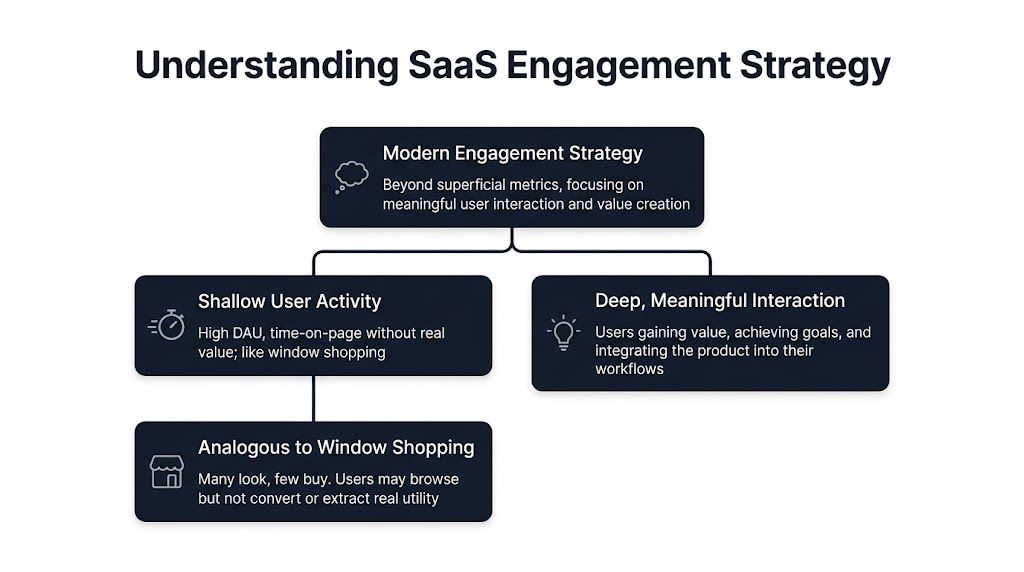

What Engagement Strategy Really Means for SaaS

In SaaS, engagement isn't “someone used the product.” It’s “someone got value, repeated that value, and widened their use of the product in a way that makes retention more likely.”

A retail analogy helps. Plenty of people walk into a store, browse shelves, and leave. That’s activity. The stronger signal is a customer who asks a detailed question, compares options, buys, comes back, and brings a colleague next time. SaaS works the same way. Logging in is browsing. Building a workflow around your product is buying behavior.

The three layers that matter

I think about engagement in three layers.

Activation

This is the first moment where a user reaches meaningful value. Not the signup. Not the first login. The first outcome.

For one SaaS product, that might be connecting a data source. For another, it might be resolving a support case through automation. Activation is fragile because teams often mistake setup completion for value realization. Those are not the same thing.

Adoption

Adoption starts when a user repeats value often enough that the product becomes part of a workflow. At this stage, feature usage becomes more informative than general traffic.

If a customer touches a feature once after a launch webinar, that’s curiosity. If the same customer integrates that feature into a weekly or daily process, that’s adoption. The difference is operational habit.
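One way to make the curiosity-versus-adoption distinction testable is to count the distinct weeks in which an account used a feature. The sketch below is a minimal illustration under stated assumptions: the function name `classify_feature_use`, the four-week threshold, and the labels are hypothetical, not a standard definition.

```python
from datetime import date


def classify_feature_use(usage_dates: list[date], min_active_weeks: int = 4) -> str:
    """Label feature use by how many distinct ISO weeks it appears in.

    The threshold is a hypothetical default; tune it to your product's cadence.
    """
    # isocalendar() yields (year, week, weekday). Keep year and week so that
    # the same week number in different years is not merged.
    active_weeks = {tuple(d.isocalendar())[:2] for d in usage_dates}
    if not active_weeks:
        return "unused"
    if len(active_weeks) >= min_active_weeks:
        return "adoption"
    return "curiosity"


# Two uses in the same week after a launch webinar.
one_off = [date(2024, 3, 4), date(2024, 3, 5)]
# One use per week across four consecutive weeks.
weekly = [date(2024, 3, 4), date(2024, 3, 11), date(2024, 3, 18), date(2024, 3, 25)]

print(classify_feature_use(one_off))  # curiosity
print(classify_feature_use(weekly))   # adoption
```

Counting weeks rather than raw events keeps a single busy afternoon from looking like a habit.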

Expansion

Expansion happens when customers discover additional value and use more of the product in ways that deepen the account relationship. This can come from new seats, broader team involvement, adjacent use cases, or advanced features that solve a second problem.

Expansion signals are easy to miss if your strategy for engagement only measures top-of-funnel activity. Teams often invest in broad engagement programs while ignoring the narrower behavioral signs that an account is ready for a cross-sell or a larger renewal conversation.

Internal engagement predicts external quality

There’s another reason to stop treating engagement as a fluffy metric. Gallup’s research found that business units with top-quartile employee engagement show 21% less turnover and 32% fewer quality defects, as summarized in this Gallup-based employee engagement analysis. That’s internal data, but the lesson carries over. Better engagement produces better execution.

For SaaS teams, that means two things:

- Support teams resolve patterns faster when they’re engaged enough to document issues cleanly and escalate with context.

- Product teams prioritize better when they trust the data and understand the customer problem behind it.

What doesn’t work

A weak engagement strategy usually has one of these flaws:

| Common mistake | Why it fails |
|---|---|
| Tracking logins as the main signal | Logins don’t prove value creation |
| Treating all feature usage equally | Some actions are exploratory, others are sticky |
| Letting support volume stand alone | Volume without account context creates false urgency |
| Measuring campaign response without product follow-through | Marketing engagement can rise while customer value stays flat |

If your core metric can go up while churn risk stays hidden, it isn't a strategy metric. It's a reporting metric.

A better working definition

A modern strategy for engagement is a system for identifying behaviors that show value delivered, value repeated, and value expanding.

That definition is practical because it changes what teams monitor. Instead of asking, “Are users active?” the better questions are:

- Which actions predict retention?

- Which support patterns show blocked value?

- Which requests come from accounts with expansion potential?

- Which engagement drops require intervention before renewal risk appears?

That’s the SaaS version of engagement that deserves executive attention.

Identifying Your Most Valuable Engagement Signals

Many teams already collect more engagement data than they can use. They have product analytics in one place, support tickets in Zendesk, chats in Intercom, call notes in Gong or similar tools, and roadmap requests spread across Jira, Linear, or spreadsheets. The failure isn't lack of input. The failure is ranking the wrong input.

The starting point is separating observable activity from meaningful signals. Session duration can matter, but only in context. A long session may indicate deep product use. It may also indicate confusion. A spike in tickets may reflect adoption momentum around a new workflow, or it may signal a broken release.

Quantitative signals first

Start with behavior you can observe directly inside the product. Focus on actions that sit near value creation, not generic traffic.

Useful categories include:

- Activation events: The actions that show a customer reached the first real outcome.

- Repeat usage patterns: Behaviors that suggest the product has entered a routine.

- Feature depth: Whether customers use one narrow capability or several connected ones.

- Drop-off points: Moments where users consistently stop before completing an important workflow.

- Account-level change: Whether engagement is broadening across users or concentrating in one champion.

Those categories give product teams a cleaner baseline than broad activity metrics. They also make it easier to discuss risk with customer success and revenue teams, because the behaviors map to actual use rather than abstract interest.

The bigger opportunity sits in unstructured data

Most engagement programs stay underpowered. Product and growth teams often rely heavily on dashboards from usage analytics while underusing the most revealing data they already have: support tickets, chat transcripts, and sales call notes.

That’s a gap in the market conversation too. Research summarized in this analysis on AI-driven behavioral analysis in product intelligence notes that platforms like SigOS reach 87% correlation accuracy in linking patterns in unstructured data to revenue outcomes, yet only 15% of product intelligence content discusses this automated approach over subjective prioritization.

The practical implication is simple. The loudest customer is often not the most important customer. The ticket with the clearest emotional language is not always the ticket with the biggest commercial impact. Without pattern detection across accounts and behaviors, teams overweight anecdotes.

What to pull from support and sales data

When I review support and sales input for engagement signals, I look for three things.

Repeated friction attached to a shared workflow

A single complaint may be noise. A recurring complaint tied to the same product path across multiple accounts is a signal. If those accounts also show declining usage or stalled adoption, the issue deserves immediate attention.

High-intent requests from commercially relevant accounts

Not every feature request should influence the roadmap. The useful requests are the ones connected to meaningful product behavior, renewal risk, or clear expansion conversations.

Sentiment shifts that appear before usage collapse

Support and call language often changes before product metrics visibly decline. Customers start describing workarounds, expressing uncertainty, or narrowing how they use the product. That’s early warning data.

Practical rule: Don’t score feedback by volume alone. Score it by recurrence, account quality, workflow proximity, and observed downstream behavior.
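The practical rule above can be sketched as a small scoring function. This is an illustration only: the `FeedbackTheme` fields, the 0 to 1 scales, the cap of 20 accounts, and the equal weighting are all assumptions, and a real model would calibrate them against your own outcomes.

```python
from dataclasses import dataclass


@dataclass
class FeedbackTheme:
    name: str
    accounts_reporting: int    # recurrence: distinct accounts raising the theme
    account_quality: float     # 0-1, hypothetical blend of tier and fit
    workflow_proximity: float  # 0-1, how close the theme sits to a core workflow
    usage_decline: float       # 0-1, share of reporting accounts with falling usage


def score_theme(theme: FeedbackTheme, max_accounts: int = 20) -> float:
    """Average the four factors into a 0-1 score (equal weights are an assumption)."""
    # Cap recurrence so one very noisy theme cannot dominate on volume alone.
    recurrence = min(theme.accounts_reporting, max_accounts) / max_accounts
    factors = [
        recurrence,
        theme.account_quality,
        theme.workflow_proximity,
        theme.usage_decline,
    ]
    return round(sum(factors) / len(factors), 3)


loud = FeedbackTheme("dark mode request", 30, 0.2, 0.1, 0.0)
quiet = FeedbackTheme("export fails in reporting", 6, 0.9, 0.9, 0.5)

ranked = sorted([loud, quiet], key=score_theme, reverse=True)
print([t.name for t in ranked])
```

In this made-up data the theme with five times the volume ranks second, because it sits far from a core workflow and has no observed downstream effect.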

Build a signal inventory

A workable exercise is to create a signal inventory across three buckets:

| Bucket | Examples of signals | Why it matters |
|---|---|---|
| Product behavior | activation completion, repeated feature use, drop-off points | Shows whether customers are reaching value |
| Customer communication | support themes, chat friction, call transcript requests | Explains why behavior is changing |
| Commercial context | renewal stage, account tier, expansion interest | Tells you how urgent the signal is |

Once teams have that inventory, they can stop treating all engagement events equally.
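As a rough sketch, the inventory can start as a grouping of signal records by account and bucket. The `Bucket` enum mirrors the three buckets in the table; the record shape, function names, and sample accounts are hypothetical.

```python
from collections import defaultdict
from enum import Enum


class Bucket(Enum):
    PRODUCT_BEHAVIOR = "product_behavior"
    CUSTOMER_COMMUNICATION = "customer_communication"
    COMMERCIAL_CONTEXT = "commercial_context"


def build_inventory(signals):
    """Group (account_id, bucket, description) records by account, then by bucket."""
    inventory = defaultdict(lambda: defaultdict(list))
    for account_id, bucket, description in signals:
        inventory[account_id][bucket].append(description)
    # Convert to plain dicts so missing keys raise instead of silently appearing.
    return {account: dict(buckets) for account, buckets in inventory.items()}


def accounts_with_full_picture(inventory):
    """Return accounts that have at least one signal in every bucket."""
    return sorted(a for a, buckets in inventory.items() if set(buckets) == set(Bucket))


signals = [
    ("acme", Bucket.PRODUCT_BEHAVIOR, "drop-off at report export"),
    ("acme", Bucket.CUSTOMER_COMMUNICATION, "tickets about export errors"),
    ("acme", Bucket.COMMERCIAL_CONTEXT, "renewal in 60 days"),
    ("globex", Bucket.PRODUCT_BEHAVIOR, "activation complete"),
]

inventory = build_inventory(signals)
print(accounts_with_full_picture(inventory))  # ['acme']
```

Accounts that appear in only one bucket are the ones where a team is most likely to act on a partial view.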

For teams refining event and account analysis, a deeper look at behavior analytics for product decisions is a useful complement because it helps translate raw activity into patterns that product and revenue teams can act on.

One adjacent discipline is worth borrowing here. Webinar teams learned long ago that “attendance” says very little without downstream action, which is why resources like 10 webinar metrics and KPIs to track for demonstrable ROI are useful outside webinars too. The broader lesson applies to SaaS engagement: measure what predicts business value, not just what proves exposure.

A Data-Driven Framework for Engagement Initiatives

Once you know which signals matter, the next mistake is trying to solve everything at once. Strong teams don’t launch broad engagement campaigns and hope the metrics move. They run a disciplined cycle. One signal, one hypothesis, one intervention, one readout.

Start with a weighted score, not a raw list

An engagement backlog becomes manageable when every signal receives a score tied to business relevance. That score should combine behavior, customer context, and likely outcome.

A strong scoring model uses weighted metrics based on correlation with outcomes like churn. According to Accrease's guide to building an engagement scoring model, teams that apply these models can achieve 25-40% higher prioritization accuracy for high-impact features, validating against benchmarks like 87% pattern-revenue correlation.

That doesn’t mean every company needs an elaborate model on day one. It means your strategy for engagement needs a ranking system that is better than “the team feels this is important.”

The operating cycle

I’ve seen this work best as a repeated five-part loop.

1. Isolate one meaningful signal

Choose a signal that is behaviorally clear and tied to a specific customer journey. Examples include stalled activation, sudden decline in repeat use, or a recurring support theme attached to a high-value workflow.

Avoid combining too many symptoms into one initiative. A broad problem statement creates blurry action.

2. Write a revenue-linked hypothesis

The initiative should answer one question: if this signal improves, what business outcome should move?

Good hypotheses sound like this:

- If users complete the key setup step more reliably, adoption should strengthen in the target cohort.

- If we reduce friction in the reporting workflow, support pressure should ease and account confidence should improve.

- If we address the request pattern emerging in sales conversations, expansion conversations should become easier to progress.

Weak hypotheses focus only on engagement mechanics. “Increase clicks” is not a strategy. “Reduce the workflow friction that precedes account stagnation” is.

3. Design the smallest credible intervention

Don’t overbuild. The first move might be an in-app guide, a revised onboarding sequence, a UI copy change, a customer success playbook, or a support macro that steers users into the right path.

The point is to learn quickly, not to defend a large launch.

The best engagement initiatives look small in the sprint plan and large in the customer workflow.

Measurement has to happen in two layers

The first layer is the target signal itself. Did activation improve? Did support sentiment change? Did repeat feature use recover?

The second layer is the business proxy closest to the initiative. That may be reduced churn risk, stronger account health, or increased expansion readiness. If teams only measure the first layer, they end up celebrating motion instead of progress.
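The two layers can be kept honest with a readout that refuses to call an initiative a success unless both moved. This is a minimal sketch: the `Readout` fields, the assumption that higher is better for both measures, and the 0.02 minimum lift are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Readout:
    signal_before: float  # layer 1: target signal, e.g. activation rate
    signal_after: float
    proxy_before: float   # layer 2: business proxy, e.g. account health score
    proxy_after: float


def interpret(readout: Readout, min_lift: float = 0.02) -> str:
    """Classify an initiative by whether each layer rose by at least min_lift."""
    signal_moved = readout.signal_after - readout.signal_before >= min_lift
    proxy_moved = readout.proxy_after - readout.proxy_before >= min_lift
    if signal_moved and proxy_moved:
        return "progress"
    if signal_moved:
        return "motion without progress"
    if proxy_moved:
        return "proxy moved alone, check attribution"
    return "no effect"


print(interpret(Readout(0.40, 0.50, 0.60, 0.60)))  # motion without progress
print(interpret(Readout(0.40, 0.50, 0.60, 0.70)))  # progress
```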


What to include in the score

A practical model usually benefits from mixing different signal types instead of relying on one source.

- Behavioral evidence: Product actions that indicate activation, habit, or decline.

- Friction evidence: Support and chat patterns linked to specific workflows.

- Commercial relevance: Whether the account is tied to renewal urgency or broader account potential.

- Confidence level: Whether the signal appears consistently across accounts and channels.

That structure prevents a common mistake. Teams often overweight what is easiest to measure. In reality, the strongest engagement strategy often combines hard product events with harder-to-parse communication data.

Kill weak initiatives quickly

A lot of engagement work persists because nobody wants to declare it ineffective. That’s expensive. If the signal doesn’t move or the business proxy stays flat, end the experiment, capture the learning, and move on.

Here’s a simple review format:

| Review question | Keep going when | Stop when |
|---|---|---|
| Did the target signal improve? | The behavior moved in the expected direction | No meaningful movement appears |
| Did downstream account quality improve? | Teams observe stronger retention or expansion indicators | Customer outcomes remain unchanged |
| Is the intervention scalable? | Product, support, or success can operationalize it without heavy manual work | The effort depends on one-off heroics |

A strategy for engagement becomes durable when teams treat it like product development. Define the problem, score it, test a constrained solution, and keep only what changes business outcomes.

Real-World Scenarios and Quantifying Impact

Frameworks matter, but teams trust them when they can see the mechanics in real operating situations. The fastest way to understand a revenue-driven strategy for engagement is to look at how different functions use the same signals differently.

Scenario one: product spots friction before churn appears

A product manager notices a rising cluster of support complaints tied to a recently launched workflow. In a traditional setup, the team would count ticket volume, read a few examples, and decide whether the issue “feels big enough” for the roadmap.

A better approach combines three inputs. First, support tickets show the recurring complaint. Second, product analytics show that users entering that workflow complete the intended task at a lower rate than expected. Third, account context shows the pattern is concentrated among commercially important customers.

That combination changes the Jira ticket the PM writes. It isn’t “customers are confused.” It’s “a defined workflow is producing friction across relevant accounts, and the affected behavior sits close to retention risk.” That gives engineering and leadership a reason to prioritize without a political argument.
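The three inputs in this scenario reduce to a few numbers a PM can paste into the ticket. The sketch below assumes ticket counts per account, started and completed counts for the workflow, an expected completion rate, and a set of priority accounts. All names and figures are hypothetical.

```python
def friction_brief(
    tickets_by_account: dict[str, int],
    workflow_started: int,
    workflow_completed: int,
    expected_completion_rate: float,
    priority_accounts: set[str],
) -> dict[str, float]:
    """Summarize ticket concentration and the completion gap for one workflow."""
    total_tickets = sum(tickets_by_account.values())
    priority_tickets = sum(
        count for account, count in tickets_by_account.items() if account in priority_accounts
    )
    completion_rate = workflow_completed / workflow_started if workflow_started else 0.0
    return {
        "completion_rate": completion_rate,
        # Positive gap means the workflow completes less often than expected.
        "completion_gap": expected_completion_rate - completion_rate,
        "priority_ticket_share": priority_tickets / total_tickets if total_tickets else 0.0,
    }


brief = friction_brief(
    tickets_by_account={"acme": 6, "globex": 3, "initech": 1},
    workflow_started=200,
    workflow_completed=90,
    expected_completion_rate=0.70,
    priority_accounts={"acme", "globex"},
)
for key, value in brief.items():
    print(f"{key}: {value:.2f}")
```

A large completion gap paired with a high priority-account share is the combination that justifies prioritization. Either number alone is easier to argue with.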

Scenario two: growth validates expansion potential from transcript patterns

A growth leader hears a familiar request appear in several sales conversations. Normally that request would enter a spreadsheet and compete against everything else. It might get attention because sales is persuasive, not because the opportunity is validated.

The stronger move is to test whether the request aligns with product behavior. Are prospects asking because the capability is essential, or because positioning is muddy? Are existing customers trying to replicate the workflow another way? Are accounts showing the request also demonstrating signs of deeper product maturity?

If the answers point to real need rather than muddy positioning, the request becomes more than market noise. It becomes an expansion signal. Product can then assess whether solving it creates broader account value rather than serving a narrow list of edge cases.

Scenario three: customer success acts on engagement decay early

Customer success usually sees the downside of poor engagement too late. The account is less responsive, executive sponsors disappear from calls, usage narrows, and only then does the team label the account “at risk.”

A stronger model flags declines earlier. If account engagement weakens across product behavior and support tone, CS can trigger a focused intervention. That may be a workflow review, a retraining session, or specific guidance tied to the part of the product the customer has stopped using effectively.
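An early flag of this kind can be sketched as a comparison between the most recent window and the one before it, requiring both usage and support tone to fall. The function names, the four-week window, the 20% drop threshold, and the 0 to 1 sentiment scale are assumptions for illustration.

```python
from statistics import mean


def relative_change(series: list[float], window: int) -> float:
    """Change in the mean of the latest window relative to the previous window."""
    if len(series) < 2 * window:
        raise ValueError("need at least two full windows of data")
    previous = mean(series[-2 * window:-window])
    latest = mean(series[-window:])
    if previous == 0:
        return 0.0  # no baseline to compare against
    return (latest - previous) / previous


def is_decaying(usage: list[float], sentiment: list[float], window: int = 4, drop: float = 0.2) -> bool:
    """Flag an account only when usage AND sentiment both fall by `drop` or more."""
    return (
        relative_change(usage, window) <= -drop
        and relative_change(sentiment, window) <= -drop
    )


weekly_usage = [40, 42, 38, 40, 30, 28, 26, 24]
weekly_sentiment = [0.8, 0.8, 0.7, 0.7, 0.6, 0.5, 0.5, 0.4]  # assumed 0-1 scale
flat_sentiment = [0.7] * 8

print(is_decaying(weekly_usage, weekly_sentiment))  # True
print(is_decaying(weekly_usage, flat_sentiment))    # False
```

Requiring both channels to decline trades some sensitivity for fewer false alarms. A team that prefers earlier warnings could flag on either condition and route those accounts to a lighter-touch check.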

This is where cross-channel execution matters. According to Cometly's analysis of cross-channel engagement through data, strategies that merge behavioral data with sentiment metrics can drive a 20-35% uplift in session resumption rates, and optimizing messaging based on multi-touch analytics has increased cross-sell conversion by 1.8x. The lesson for SaaS operators is not “send more messages.” It’s “use behavior plus sentiment to trigger the right message at the right moment.”

Engagement Signals: From Qualitative Noise to Quantitative Impact

| Signal Source | Traditional Analysis (Subjective) | AI-Driven Analysis (Quantitative) |
|---|---|---|
| Support tickets | Team reads a sample and escalates what sounds urgent | Pattern detection groups repeated friction by workflow and account context |
| Sales call notes | Reps report what prospects asked for most often | Transcript analysis identifies requests that align with product maturity and expansion potential |
| In-app behavior | Team watches top-line usage trends | Event patterns show which actions lead to adoption, drop-off, or account decline |
| Customer success feedback | CSMs describe account health from memory and meeting notes | Signals are compared against actual behavior and sentiment shifts over time |

When teams combine product behavior with customer language, they stop guessing which issue matters and start ranking which issue changes the business.

What these scenarios have in common

These teams don’t succeed because they have more data. They succeed because they attach decisions to interpretable patterns. That makes roadmapping cleaner, support escalation sharper, and success outreach more timely.

It also changes how teams justify investment. Instead of saying a request is important because a customer asked loudly, they can frame the issue through retained value, blocked adoption, or unrealized expansion.

If you need a simple way to make those conversations more concrete with finance or leadership, this ROI template for product and revenue decisions is a practical aid. It pushes teams to convert signal quality into a business case instead of leaving value implied.

Monitoring and Governance for Long-Term Success

A strategy for engagement fails when it lives inside one enthusiastic team. Product may care about user behavior. Support may catalog friction well. Success may understand account risk. None of that matters if the company lacks a shared cadence, common ownership, and one place where signals are reviewed together.

Set up a cross-functional engagement squad

You don’t need a new department. You need a working group with decision rights.

The right participants usually include:

- Product leadership: Owns prioritization and experiment design.

- Customer success leadership: Brings account context and intervention pathways.

- Support leadership: Surfaces workflow friction with operational detail.

- Growth or revenue leadership: Connects signals to expansion and pipeline implications.

- Analytics or operations: Maintains data quality and model logic.

This group should own the engagement score definitions, review the highest-priority signals, and decide what enters the next operating cycle.

Create a weekly review ritual

Weekly is usually the right rhythm because engagement signals move faster than quarterly planning and slower than daily firefighting. The meeting should be short, decision-focused, and anchored in one dashboard.

A strong review agenda includes:

| Agenda item | Decision to make |
|---|---|
| Top changing engagement signals | Which patterns need investigation now |
| Accounts or cohorts with visible decline | Which teams need to intervene |
| Emerging requests with commercial relevance | Whether product should validate or ignore |
| Results of recent initiatives | Which experiments to scale, revise, or end |

What doesn’t work is a meeting full of status recaps. The ritual should resolve trade-offs. Which issue gets engineering capacity? Which account needs proactive outreach? Which workflow deserves a test this sprint?

Guardrails matter as much as dashboards

Without governance, teams drift into familiar failure modes. Sales tries to pull roadmap decisions toward active deals. Support pushes volume-heavy issues to the top. Product favors what is easiest to instrument. Success escalates based on account pressure.

Good governance makes those tensions productive.

Operating principle: No single channel should outrank the combined signal.

That means a request from a strategic customer still needs behavioral validation. A product usage anomaly still needs customer context. A support theme still needs workflow relevance.
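One lightweight way to encode this principle is a check that a signal is corroborated by more than one channel before it enters the operating cycle. The channel names and the two-channel minimum below are assumptions; a team might reasonably require a specific pairing instead, such as product behavior plus one other source.

```python
CHANNELS = ("product", "support", "sales", "success")


def passes_guardrail(evidence: dict[str, bool], min_channels: int = 2) -> bool:
    """Return True when at least min_channels known channels confirm the signal."""
    confirmed = [channel for channel in CHANNELS if evidence.get(channel, False)]
    return len(confirmed) >= min_channels


# A strategic customer asked for something, but nothing else backs it up yet.
sales_only = {"sales": True}
# A support theme that also shows up as a drop-off in product analytics.
corroborated = {"support": True, "product": True}

print(passes_guardrail(sales_only))    # False
print(passes_guardrail(corroborated))  # True
```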

Keep one source of truth

The biggest operational drag is data fragmentation. If support, product, and success each work from separate reports, they spend more time reconciling than deciding.

The better model is a centralized intelligence layer where behavioral events, customer language, and account context meet. That shared view prevents duplicate analysis and reduces “my metric versus your metric” debates.

A durable strategy for engagement also needs maintenance rules:

- Review signal definitions periodically: Customer behavior changes as the product changes.

- Audit weighting logic: What predicted value last year may not be the strongest signal now.

- Document interventions and outcomes: Teams should learn from failed tests, not repeat them.

- Protect data handling standards: Sensitive customer data requires clear boundaries and careful access control.

When companies operationalize engagement this way, the conversation changes. Engagement stops being a campaign theme and becomes an execution discipline.

Conclusion: From Insight to Impact

The popular version of engagement is too shallow for SaaS. It rewards visibility, activity, and reporting convenience. It doesn’t reliably tell you which customers are finding value, which accounts are drifting toward risk, or which signals deserve roadmap attention.

A stronger strategy for engagement starts with a different question. Not “How do we get customers to do more?” but “Which behaviors prove value, and which changes in behavior predict revenue outcomes?” That shift changes everything. Product teams prioritize with more confidence. Support stops operating as a separate complaint channel. Customer success acts earlier. Growth teams validate expansion opportunities with evidence instead of optimism.

The practical path is straightforward. Define meaningful engagement through activation, adoption, and expansion. Pull signals from both product behavior and unstructured customer data. Use a weighted model to rank opportunities. Run narrow initiatives tied to explicit business hypotheses. Review the system weekly with shared ownership.

That’s how engagement moves from a soft concept to an operating system.

The biggest advantage now is that teams no longer have to choose between qualitative understanding and quantitative rigor. The right tools can connect customer language, in-product behavior, and account context in one view. Once that happens, every ticket, transcript, and usage pattern becomes more than feedback. It becomes evidence you can use to protect retention, improve product decisions, and create growth.

If your team wants to turn customer feedback, usage patterns, and support signals into a daily revenue-prioritized view, SigOS is built for that job. It helps product, success, and growth teams quantify which issues are driving churn risk, which requests point to expansion, and where to focus next without relying on subjective prioritization.
