Behavioral Data Analyst: The Ultimate 2026 Guide

What is a behavioral data analyst? This guide explains the role, skills, salary, and tools they use to turn user behavior into revenue for SaaS companies.

Your roadmap probably has three competing versions right now.

Support wants the bug that triggered a spike in angry tickets. Sales wants the feature a late-stage prospect asked for on a call. Product wants to finish the onboarding redesign because activation feels soft. All three arguments sound reasonable. None of them tells you, with confidence, which issue is costing revenue.

That is where a behavioral data analyst earns their keep in SaaS. This role does not start with opinions, ticket volume, or the loudest customer in Slack. It starts with behavior. Which users dropped off, which actions changed before churn, which workflows slowed down after a release, and which patterns show up across support, product usage, and expansion conversations.

Most content about behavior analysts still leans toward healthcare and therapy contexts. In SaaS, the job looks different. The analyst sits closer to product, growth, support, and revenue. Their work turns messy interaction data into decisions that can survive executive scrutiny.

The Signal and the Noise in Product Decisions

A familiar Monday looks like this. The PM opens Zendesk and sees a thread of complaints about a broken workflow. Customer success flags two accounts at risk. Sales forwards a transcript where a prospect asks for an integration. Engineering says the issue affects only a narrow edge case. Everyone has evidence. Nobody has the full picture.

The problem is not lack of data. It is lack of behavioral context.

A behavioral data analyst cuts through that noise by asking better questions:

- Who is affected: Is this showing up in trial users, power users, or expansion-stage accounts?

- What changed: Did usage drop after a release, pricing change, onboarding update, or support incident?

- What is the business impact: Is the issue tied to retention risk, slower activation, or expansion blockers?

In practice, this means linking what customers say with what they do. A ticket saying “the dashboard is confusing” matters more when you can also show that users who hit that screen stop completing a key workflow. A sales request for a feature matters more when the accounts asking for it share a high-value usage pattern.

Good product teams listen to feedback. Strong teams also verify it against behavior before they prioritize.

The demand for this kind of work is not a passing trend. The global behavior analytics market is projected to grow from $2.06 billion in 2026 to $7.63 billion by 2034, at a 17.81% CAGR, reflecting how central behavioral data has become to business decisions, according to Fortune Business Insights on the behavior analytics market.

For SaaS leaders, the takeaway is simple. The behavioral data analyst is the person who turns a noisy backlog into a ranked list of decisions with evidence behind it.

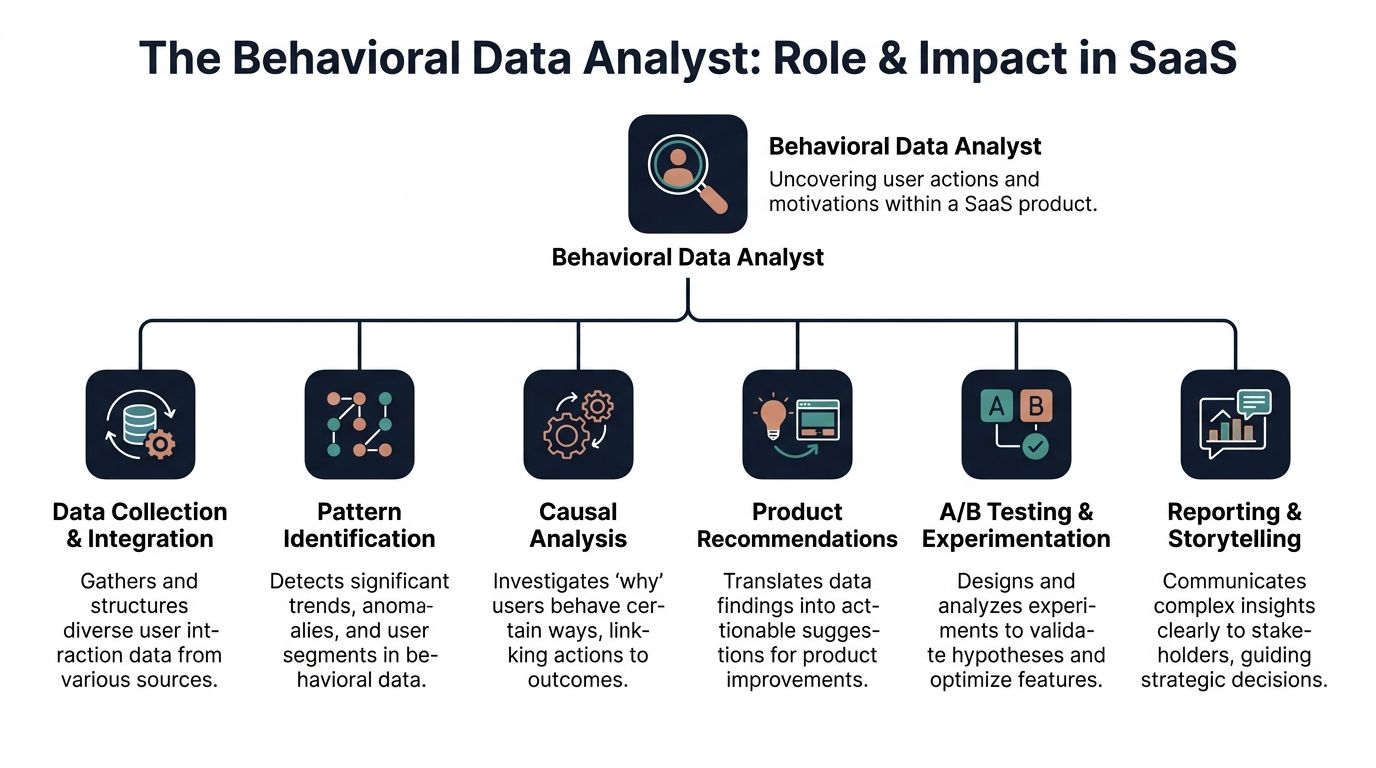

What a Behavioral Data Analyst Does

The role is broader than dashboard maintenance and narrower than a generic “data person.” In SaaS, a behavioral data analyst owns the path from raw interaction data to a product or growth decision.

The mission is straightforward: connect user actions, friction signals, and business outcomes so teams can decide what to fix, build, or test next.

What shows up in the day-to-day work

A strong analyst usually works across several layers of the stack:

- Instrumentation and tracking: Define events that matter. Not vanity clicks. Real steps like onboarding completion, integration setup, report export, or first teammate invite.

- Journey analysis: Reconstruct user paths across product analytics, CRM, support systems, and sometimes call transcripts.

- Friction detection: Find points where behavior changes sharply. A broken import flow, a feature nobody returns to, a release that adds extra effort to a core task.

- Segmentation: Compare new users, mature accounts, self-serve customers, enterprise teams, and at-risk cohorts by behavior rather than static firmographics.

- Experiment support: Help product managers test whether a change improved activation, adoption, or retention for the right users.

- Decision support: Translate findings into a recommendation a PM, growth lead, or VP can act on this week.
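As a rough illustration of the journey analysis step, the sketch below merges events from two sources into one time-ordered path per user. The source lists, field names, and sample records are hypothetical; a real pipeline would read from a warehouse rather than in-memory lists.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical sample records; field names are assumptions, not a real schema.
product_events = [
    {"user_id": "u1", "ts": "2026-01-05T09:00:00", "event": "onboarding_completed"},
    {"user_id": "u1", "ts": "2026-01-05T09:20:00", "event": "import_failed"},
    {"user_id": "u2", "ts": "2026-01-06T14:00:00", "event": "report_exported"},
]
support_tickets = [
    {"user_id": "u1", "ts": "2026-01-05T09:45:00", "event": "ticket_opened"},
]


def build_journeys(*sources):
    """Merge events from several sources into one time-ordered path per user."""
    journeys = defaultdict(list)
    for source in sources:
        for record in source:
            timestamp = datetime.fromisoformat(record["ts"])
            journeys[record["user_id"]].append((timestamp, record["event"]))
    # Sort each user's events by timestamp and keep only the event names.
    return {
        user: [event for _, event in sorted(events)]
        for user, events in journeys.items()
    }


journeys = build_journeys(product_events, support_tickets)
print(journeys["u1"])
```

Reading the path for a single user this way makes sequences such as "failed import, then ticket" visible before any aggregate analysis is run.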

What separates this from a general analyst role

A general analyst may report on revenue, pipeline, or campaign performance. A behavioral data analyst goes deeper into cause and sequence.

That matters because product behavior is rarely linear. Users complain after friction builds up. Churn appears after a chain of smaller warning signs. Expansion tends to follow repeated signs of value, not one isolated action.

Top-tier analysts take end-to-end ownership of the pipeline, from ingesting support tickets and usage telemetry to surfacing patterns with sub-minute latency. That workflow is what makes a finding operationally useful, for example an emergent pattern in Linear issues that predicts revenue leakage, as described in these behavioral data analyst job patterns on Indeed.

What good output looks like

The best outputs are not giant decks. They are compact, usable assets:

| Output | What it answers |
|---|---|
| Funnel breakdown | Where users abandon a key journey |
| Cohort analysis | Which behaviors separate retained users from churned users |
| Issue-impact brief | Which bugs or requests affect the highest-value accounts |
| Experiment readout | Whether a product change improved a meaningful outcome |
| Daily alert | Which new pattern needs immediate attention |

If your team is still prioritizing mostly on ticket counts, it helps to review practical examples of using behavior analytics in product decisions. The key shift is moving from “customers mentioned this a lot” to “this behavior predicts a costly outcome.”
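To make the shift away from ticket counts concrete, here is a minimal sketch of an issue-impact ranking. It orders issues by the revenue of the distinct accounts affected rather than by ticket volume. The issue names, account IDs, and ARR figures are invented for illustration.

```python
from collections import defaultdict

# Hypothetical data: (issue, account) pairs from tickets, and ARR per account.
tickets = [
    ("export_bug", "acct_a"), ("export_bug", "acct_a"), ("export_bug", "acct_b"),
    ("export_bug", "acct_b"), ("sso_failure", "acct_c"),
]
account_arr = {"acct_a": 5_000, "acct_b": 8_000, "acct_c": 120_000}


def rank_issues(tickets, account_arr):
    """Return issues sorted by the total ARR of distinct affected accounts."""
    ticket_counts = defaultdict(int)
    affected = defaultdict(set)
    for issue, account in tickets:
        ticket_counts[issue] += 1
        affected[issue].add(account)
    rows = [
        {
            "issue": issue,
            "tickets": ticket_counts[issue],
            "arr_exposed": sum(account_arr[a] for a in accounts),
        }
        for issue, accounts in affected.items()
    ]
    return sorted(rows, key=lambda row: row["arr_exposed"], reverse=True)


for row in rank_issues(tickets, account_arr):
    print(row)
```

In this toy data the issue with the fewest tickets ranks first because it touches the largest account, which is the kind of reordering a ticket count alone would hide.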

The Essential Skills That Define the Role

Hiring managers often over-index on tools. Candidates often over-index on coding. Both miss the point. A behavioral data analyst succeeds by combining technical fluency with product judgment.

Demand keeps rising. Job postings for behavior analysts in the US increased 28% from 2024 to 2025, according to the BACB Lightcast report, although that figure tracks the certified behavior analyst profession rather than SaaS analytics roles specifically. In SaaS, that demand shows up when teams realize they have plenty of data but limited decision-quality insight.

The skill mix that matters

The easiest way to evaluate the role is to separate technical skills from domain skills.

| Skill Category | Core Skills | Why It Matters |
|---|---|---|
| Technical | SQL, event modeling, data cleaning, Python or R, BI tools such as Tableau or Power BI | Raw behavioral data is messy. The analyst needs to query, structure, test, and visualize it reliably. |
| Technical | Exploratory data analysis, cohorting, funnel analysis, anomaly detection | These methods expose where behavior changes and which signals deserve investigation. |
| Technical | Experiment analysis and statistical reasoning | Product teams need someone who can test whether a change helped or only looked promising. |
| Domain | Product intuition | The analyst must know which behaviors indicate value and which are just noise. |
| Domain | User psychology | People do not always explain their behavior clearly. The analyst has to infer intent from patterns. |
| Domain | Business acumen | A drop in usage matters differently if it affects trial users versus expansion-ready accounts. |
| Domain | Storytelling and stakeholder communication | Findings need to change decisions, not sit in a dashboard. |

What works and what does not

Teams get better results when the analyst can move between warehouse logic and roadmap trade-offs.

What works:

- Analysts who ask product questions: “What behavior would prove this feature matters?”

- Analysts who challenge weak metrics: They do not accept page views as a proxy for value.

- Analysts who explain uncertainty clearly: They can say when a result is directional versus decision-ready.

What does not work:

- Pure reporting profiles: Good at chart production, weak on causal reasoning.

- Data scientists with no product context: Strong models, weak prioritization.

- Analysts buried in one function: If they only serve marketing or only serve support, they miss the cross-functional pattern.

Skills to test during hiring

Ask candidates to walk through a behavior problem, not just a SQL challenge.

A useful prompt is: “Trial users complete onboarding, but paid conversion is weak. What would you investigate first?” Strong candidates will talk about user paths, segmentation, competing hypotheses, and instrumentation gaps.

For teams refining their evaluation process, this guide to hypothesis testing in product analysis is a useful benchmark for how rigorous analysts frame evidence before making recommendations.
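One way to see what rigorous framing can look like is a two-proportion z-test on conversion counts. The sketch below uses only the Python standard library. The counts are hypothetical, and a pooled z-test is just one reasonable choice among several for this kind of comparison.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: control converts 120 of 1,000 trials, variant 150 of 1,000.
z, p_value = two_proportion_z_test(120, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A candidate who can explain what the p-value does and does not say, and when a result is directional rather than decision-ready, is showing the skill this section describes.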

The best behavioral data analysts do not just answer questions. They improve the questions the business asks.

Sample Analyses and Real-World Queries

The role becomes clearer when you look at the work. Most of it starts with a business question and a messy set of logs.

A core function is exploratory data analysis on user logs. Done well, this reveals patterns hidden inside clickstreams and session data. For example, a 25% decline in a specific feature’s usage can correlate with a 15% to 20% higher churn risk, validated with 95% confidence intervals, as noted in 365 Data Science’s discussion of data analyst skills.
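A claim like this usually comes from comparing churn rates between two groups and putting an interval around the ratio. The following is a minimal sketch of a relative risk with an approximate 95% confidence interval, using the standard log method. The counts are invented for illustration and are not the figures behind the cited example.

```python
from math import exp, log, sqrt


def relative_risk_ci(churn_exposed, n_exposed, churn_baseline, n_baseline, z=1.96):
    """Relative churn risk with an approximate 95% CI (log method)."""
    risk_exposed = churn_exposed / n_exposed
    risk_baseline = churn_baseline / n_baseline
    rr = risk_exposed / risk_baseline
    # Standard error of log(RR) for two independent groups.
    se_log_rr = sqrt(
        1 / churn_exposed - 1 / n_exposed + 1 / churn_baseline - 1 / n_baseline
    )
    lower = exp(log(rr) - z * se_log_rr)
    upper = exp(log(rr) + z * se_log_rr)
    return rr, lower, upper


# Hypothetical counts: accounts whose feature usage declined (exposed)
# versus accounts whose usage held steady (baseline).
rr, lower, upper = relative_risk_ci(59, 500, 100, 1000)
print(f"relative risk = {rr:.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```

If the interval spans 1.0, the data does not yet separate the two groups, and the pattern is better treated as a hypothesis than as a finding.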

Finding the aha moment

Suppose your team wants to know which early behaviors predict long-term retention.

The first move is usually cohort comparison. Take users who stayed active and compare them with users who dropped off. Look for actions that appear early, repeat consistently, and align with product value.

-- Which actions happen in the first 7 days for retained users?
-- Assumes user_retention also carries signup_timestamp; adjust the join if it lives elsewhere.
SELECT
    e.event_name,
    COUNT(DISTINCT e.user_id) AS users
FROM product_events AS e
JOIN user_retention AS r
    ON r.user_id = e.user_id
WHERE r.retained_status = 'retained'
  AND e.event_timestamp >= r.signup_timestamp
  AND e.event_timestamp <= r.signup_timestamp + INTERVAL '7 days'
GROUP BY e.event_name
ORDER BY users DESC;

This query does not prove causation. It gives you a candidate list. The next step is checking whether those actions still matter after segmenting by plan, company size, and acquisition path.
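The segmentation check can be sketched in a few lines. The example below compares retention for users who did and did not perform a candidate action, split by plan. The records, the `did_action` flag, and the plan names are hypothetical.

```python
from collections import defaultdict

# Hypothetical user records: plan segment, whether the user performed the
# candidate action in week one, and whether they were retained.
users = [
    {"plan": "self_serve", "did_action": True, "retained": True},
    {"plan": "self_serve", "did_action": True, "retained": True},
    {"plan": "self_serve", "did_action": False, "retained": False},
    {"plan": "self_serve", "did_action": False, "retained": True},
    {"plan": "enterprise", "did_action": True, "retained": True},
    {"plan": "enterprise", "did_action": False, "retained": True},
]


def retention_by_segment(users, segment_key, action_key="did_action"):
    """Retention rate for each (segment, did_action) group."""
    totals = defaultdict(lambda: [0, 0])  # group -> [retained, total]
    for user in users:
        group = (user[segment_key], user[action_key])
        totals[group][0] += int(user["retained"])
        totals[group][1] += 1
    return {group: retained / total for group, (retained, total) in totals.items()}


rates = retention_by_segment(users, "plan")
for (plan, did_action), rate in sorted(rates.items()):
    print(plan, did_action, f"{rate:.0%}")
```

In this toy data the action separates retained from churned users only in the self-serve segment, which is the kind of result that would stop a team from generalizing an "aha moment" to every plan. Real cohorts would need far more users per group before drawing that conclusion.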

Diagnosing feature adoption friction

Now take a feature launch that looked strong in demos but feels weak in production.

A behavioral data analyst maps the adoption funnel, then isolates the drop-off step. Often the issue is not top-of-funnel awareness. It is a setup step, permission issue, or workflow break that only appears after the first click.

-- Feature adoption funnel: one row per user with a 0/1 flag for each step
SELECT
    user_id,
    MAX(CASE WHEN event_name = 'feature_viewed' THEN 1 ELSE 0 END) AS viewed,
    MAX(CASE WHEN event_name = 'feature_started' THEN 1 ELSE 0 END) AS started,
    MAX(CASE WHEN event_name = 'feature_completed' THEN 1 ELSE 0 END) AS completed
FROM product_events
WHERE event_timestamp >= CURRENT_DATE - INTERVAL '30 days'
GROUP BY user_id;

A table like this gets more useful when joined with support data. If users who start but never complete also open tickets about setup confusion, the product recommendation becomes sharper.
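A small sketch of that join, assuming the per-user funnel flags have already been exported from the query above and that support tickets carry a tag for setup confusion. The rows and the ticket set are hypothetical.

```python
# Hypothetical per-user funnel flags, shaped like the output of the funnel query.
funnel_rows = [
    {"user_id": "u1", "viewed": 1, "started": 1, "completed": 1},
    {"user_id": "u2", "viewed": 1, "started": 1, "completed": 0},
    {"user_id": "u3", "viewed": 1, "started": 1, "completed": 0},
    {"user_id": "u4", "viewed": 1, "started": 0, "completed": 0},
]
# Hypothetical set of users who opened a ticket tagged as setup confusion.
setup_ticket_users = {"u2", "u4"}


def funnel_summary(rows, ticket_users):
    """Step conversion rates plus the share of stalled users with a setup ticket."""
    viewed = [r for r in rows if r["viewed"]]
    started = [r for r in viewed if r["started"]]
    completed = [r for r in started if r["completed"]]
    # Stalled users started the feature but never completed it.
    stalled = [r for r in started if not r["completed"]]
    stalled_with_ticket = [r for r in stalled if r["user_id"] in ticket_users]
    return {
        "view_to_start": len(started) / len(viewed) if viewed else 0.0,
        "start_to_complete": len(completed) / len(started) if started else 0.0,
        "stalled_with_ticket_share": (
            len(stalled_with_ticket) / len(stalled) if stalled else 0.0
        ),
    }


summary = funnel_summary(funnel_rows, setup_ticket_users)
print(summary)
```

A high share of stalled users with setup tickets points the recommendation at the setup step itself rather than at awareness or positioning.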


Building a basic churn-risk view

The practical version of churn analysis is rarely a huge machine learning system on day one. It often starts with simple flags:

- Usage decline: Core feature usage trends down over time.

- Breadth contraction: Fewer teammates engage with the product.

- Support distress: Ticket language shifts toward blockers or failed workflows.

- Workflow interruption: A repeated task now takes more steps or fails more often.

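# Simple behavioral churn flag logic
# Assumes df is a pandas DataFrame with one row per account and these columns.
df["risk_flag"] = (
    (df["core_feature_usage_trend"] == "down")
    | (df["active_teammates_trend"] == "down")
    | df["support_blocker_tag"].eq(True)  # missing tags count as not flagged
)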

This approach is simple, but useful. Product and CS teams can act on a directional model long before a fully productionized one exists.
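The flag logic depends on a trend label such as `core_feature_usage_trend`, which has to come from somewhere. One possible way to derive it, sketched below, compares the most recent weeks of usage with the weeks before them. The four-week window and 25% threshold are arbitrary assumptions, not recommended values.

```python
from statistics import mean


def usage_trend(weekly_counts, window=4, threshold=0.25):
    """Label a weekly usage series as 'down', 'up', 'flat', or 'unknown'.

    Compares the mean of the most recent `window` weeks with the mean of the
    `window` weeks before that. Window and threshold are illustrative defaults.
    """
    if len(weekly_counts) < 2 * window:
        return "unknown"  # not enough history to compare two windows
    recent = mean(weekly_counts[-window:])
    previous = mean(weekly_counts[-2 * window:-window])
    if previous == 0:
        return "up" if recent > 0 else "flat"
    change = (recent - previous) / previous
    if change <= -threshold:
        return "down"
    if change >= threshold:
        return "up"
    return "flat"


# Hypothetical weekly counts of a core feature for two accounts.
print(usage_trend([40, 42, 38, 40, 30, 28, 25, 27]))
print(usage_trend([40, 42, 38, 40, 41, 39, 40, 42]))
```

Returning "unknown" for short histories keeps new accounts from being flagged as declining simply because there is too little data to compare.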

Career Paths and Hiring a Great Analyst

Behavioral data analysis is becoming a strong career track because it sits close to revenue, product strategy, and customer truth.

The labor market reflects that. US Bureau of Labor Statistics projections point to significant job growth for data analysts through 2032, and market coverage of those projections also notes rising average and entry-level salaries.

How the career path usually develops

In SaaS, the path often looks like this:

| Level | Typical focus | What changes |
|---|---|---|
| Junior analyst | Reporting, QA on event tracking, basic funnel work | Learns the product and builds trust with PMs |
| Mid-level analyst | Cohorts, adoption analysis, churn indicators, experiments | Starts shaping roadmap decisions |
| Senior analyst | Cross-functional insight generation, prioritization, executive communication | Owns high-stakes questions tied to retention and growth |
| Principal or lead | Data strategy, taxonomy governance, analyst team standards | Influences how the company measures value |

The jump from mid-level to senior usually has less to do with coding and more to do with judgment. Senior analysts know when a spike is noise, when a segment matters, and when leadership is asking the wrong question.

What to look for when hiring

Resumes are imperfect here. Plenty of candidates can list SQL, Python, and Looker. Fewer can connect a workflow issue to lost expansion potential in a way product leaders trust.

Good interview prompts include:

- Open-ended diagnosis: “A new feature gets attention but low repeat usage. How would you investigate?”

- Prioritization trade-off: “Support volume is high for one bug, but revenue impact may be higher elsewhere. What do you need to know?”

- Communication test: “Explain a behavior pattern to a VP of Product without using statistical jargon.”

A take-home case should resemble real work. Give a sample event table, a few support notes, and a retention outcome. Ask for a recommendation, not just a chart.

Adjacent roles worth understanding

Some companies also evaluate whether they need a traditional analyst, a product analyst, or an AI-assisted analyst workflow. For a useful comparison point, “What Is an AI Data Analyst” is a good primer on how AI-supported analysis differs from analyst judgment.

Hire for curiosity about user behavior and business trade-offs. Tools can be taught faster than product sense.

How AI Platforms like SigOS Accelerate Analysis

Manual behavioral analysis breaks down in the same place every time. The analyst spends too much time collecting data and too little time interpreting it.

Support tickets live in one system. Product events live in another. Call notes sit in a CRM. Jira issues contain delivery history, but not revenue context. By the time someone joins the data, cleans the fields, and compares segments, the moment to act has often passed.

Where AI helps most

AI platforms are useful when they remove repetitive analyst work without replacing judgment.

That usually means automating tasks like:

- Multi-source ingestion: Pulling support, sales, and product data into one analytical workflow.

- Pattern matching: Surfacing clusters of issues that show up across accounts or behaviors.

- Signal ranking: Highlighting which patterns appear tied to churn, expansion, or workflow failure.

- Operational handoff: Pushing findings into Jira, GitHub, or team alerts fast enough to matter.

One example is SigOS analysis workflows, which connect support tickets, chat transcripts, sales calls, and usage data, so teams can inspect patterns tied to churn, expansion, and revenue impact. The analyst still decides what deserves action. The platform reduces the lag between observation and response.

What AI does not solve on its own

AI does not define your product’s key behaviors. It does not know which workflow represents value for your best customers. It also does not resolve weak instrumentation or bad event naming.

The analyst still has to provide:

- A clear measurement model

- Business context for segmentation

- A decision framework for prioritization

Without those, AI only makes confusion faster.

The best setup is a partnership. The platform handles correlation, synthesis, and monitoring at machine speed. The behavioral data analyst handles framing, validation, and strategic trade-offs.

From Data Points to Lasting Dollar Impact

The primary output of a behavioral data analyst is not a dashboard. It is a better sequence of product decisions.

When the role works well, support data stops being anecdotal. Feature usage stops being a vanity report. Sales requests stop sitting outside the product evidence loop. Everything moves closer to one question: which user behaviors point to revenue risk or revenue opportunity?

That is why this role matters so much in SaaS. Product teams are flooded with signals, but only some deserve immediate attention. Behavioral analysis gives leaders a way to separate urgency from importance.

If your team also analyzes spoken feedback from sales calls, onboarding sessions, or support interactions, tools in the category of Speech to Text Sentiment Analysis APIs can add another layer of context to behavioral work by making unstructured conversations easier to compare with product usage patterns.

A mature SaaS team eventually learns this lesson. Revenue does not move because a chart exists. Revenue moves when someone connects behavior, friction, and action quickly enough to change the outcome.

Frequently Asked Questions

Is a behavioral data analyst the same as a product analyst?

Not quite.

A product analyst often focuses on feature performance, funnels, and experiments inside the product. A behavioral data analyst usually works across a wider set of signals, including support conversations, user journeys, account-level patterns, and business outcomes like churn or expansion. In some companies, one person does both jobs. In larger teams, the behavioral role is more cross-functional.

How does a behavioral data analyst handle privacy and ethics?

Privacy has to be built into the workflow, not added later.

When handling sensitive behavioral data, SaaS teams need to think beyond health-specific frameworks and account for global requirements like GDPR and CCPA. Security-first practices matter, including zero-retention AI models that are never retrained on customer data, as discussed in this privacy and ethics reference on behavioral data handling.

Good practice usually includes:

- Access control: Limit who can view raw transcripts or account-level records.

- Data minimization: Analyze what is necessary, not everything available.

- Model boundaries: Keep customer data out of training loops when the platform design allows it.

- Clear governance: Define which teams can act on which classes of insight.
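Two of these practices, data minimization and limiting exposure of raw identifiers, can be sketched with the Python standard library. The field allow-list, key handling, and record shape below are assumptions for illustration. Keyed hashing is pseudonymization, not anonymization, so the output generally still counts as personal data under GDPR.

```python
import hashlib
import hmac

# Assumption: in practice the key comes from a secrets manager, never source code.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"

# Hypothetical allow-list of the fields the analysis actually needs.
ALLOWED_FIELDS = {"event_name", "event_timestamp", "plan"}


def pseudonymize(user_id: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace a raw user ID with a keyed hash so joins across tables still work."""
    return hmac.new(key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict) -> dict:
    """Keep only allow-listed fields and swap the user ID for a pseudonym."""
    cleaned = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    cleaned["user_key"] = pseudonymize(record["user_id"])
    return cleaned


raw = {
    "user_id": "u_123",
    "email": "person@example.com",
    "event_name": "report_exported",
    "event_timestamp": "2026-01-05T09:00:00",
    "plan": "team",
}
safe = minimize(raw)
print(sorted(safe))
```

Because the same input and key always yield the same pseudonym, analysts can still join events to tickets by `user_key` without seeing the raw identifier or email.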

Can someone enter this field without direct SaaS analyst experience?

Yes, if they can demonstrate the right kind of thinking.

The most transferable skills are SQL, basic statistics, comfort with messy data, and the ability to frame a business question as a behavioral one. Candidates from support ops, growth analytics, BI, or product ops often adapt well if they understand user journeys.

A good portfolio project is more convincing than a generic certificate. Analyze a public product dataset, define a key workflow, identify a friction point, and make a recommendation.

What should the first month look like after hiring one?

A strong first month is usually practical:

- Audit event quality and naming.

- Identify the product’s critical user journeys.

- Define a short list of behavior-based risk and value signals.

- Deliver one analysis that changes an active roadmap decision.

That last point matters. The role gains credibility when it affects a real decision early.
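The first item on that list, auditing event quality and naming, is easy to start with a small script. The sketch below assumes a lowercase snake_case convention with at least two words, such as `report_exported`. That convention and the sample event names are hypothetical, so adapt the pattern to whatever the team has actually agreed on.

```python
import re

# Assumed convention: lowercase snake_case with at least two words.
NAME_PATTERN = re.compile(r"^[a-z]+(_[a-z]+)+$")


def audit_event_names(event_names):
    """Return names that break the convention and groups of near-duplicate names."""
    unique_names = set(event_names)
    invalid = sorted(n for n in unique_names if not NAME_PATTERN.match(n))
    # Group names that become identical once case and separators are removed.
    variants = {}
    for name in unique_names:
        key = re.sub(r"[^a-z0-9]", "", name.lower())
        variants.setdefault(key, []).append(name)
    collisions = sorted(sorted(group) for group in variants.values() if len(group) > 1)
    return invalid, collisions


observed = [
    "report_exported", "ReportExported", "report-exported",
    "teammate_invited", "click",
]
invalid, collisions = audit_event_names(observed)
print("invalid:", invalid)
print("collisions:", collisions)
```

Near-duplicate names are worth fixing early, because they silently split one behavior across several rows in every funnel and cohort query built later.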

If your team is drowning in support noise, scattered usage data, and roadmap debates that lack evidence, SigOS can help operationalize behavioral analysis by connecting feedback and product signals into a workflow product and growth teams can use.
