OKRs for Product Managers: A High-Impact Framework

Ditch output-driven goals. Learn our step-by-step framework for writing OKRs for product managers that tie directly to revenue, churn, and customer behavior.

Most advice about OKRs for product managers starts in the wrong place. It starts with templates, scoring scales, and workshop rituals. That's why so many teams end up with polished documents that don't change what gets built.

The problem isn't the OKR framework. It's that teams write goals that protect activity instead of exposing impact. "Launch the dashboard." "Improve collaboration." "Increase engagement." Those sound strategic, but they let everyone stay busy without proving the product changed customer behavior or business performance.

Strong OKRs do something harsher and far more useful. They force a product team to answer three uncomfortable questions. What customer behavior needs to change? How will we measure it? Why does that change matter to retention, expansion, or revenue?

When you write OKRs that way, the framework stops being a quarterly ceremony. It becomes a filter for roadmap decisions, trade-off calls, and cross-functional alignment.

Why Most Product OKRs Fail And How Yours Won't

Product OKRs usually fail before the quarter starts. The team already has a roadmap, leadership wants the comfort of a planning artifact, and someone rewrites planned features as objectives. The result looks strategic in a slide deck and behaves like a checklist in real life.

That is how product teams end up with goals such as "improve onboarding" or "launch reporting." Nobody can use those statements to decide what to cut, what to delay, or what deserves more engineering time. Worse, they hide the only question that matters. What customer behavior needs to change enough to show up in retention, expansion, or new revenue?

I have seen this pattern more than once. A team ships everything it promised, declares the quarter a success, and then has to explain why activation stayed flat and upsell pipeline did not move. The problem was never effort. The problem was that the OKRs measured completion instead of commercial impact.

What failure looks like in practice

Weak product OKRs tend to break in predictable ways:

- Objectives sound ambitious but stay vague: They create alignment theater, not real prioritization.

- Key results describe team activity: releases shipped, tickets closed, interviews run, experiments launched.

- Review meetings reward reporting: Teams explain progress against plan instead of evidence from customer behavior.

- The roadmap stays fixed: OKRs are documented after decisions are made, so they cannot shape trade-offs.

One rule catches a lot of bad key results fast.

Practical rule: If a key result can be achieved before customer behavior changes, it is probably tracking output, not impact.

Good OKRs create useful pressure. Product, engineering, sales, support, and marketing have to agree on a measurable change that matters to the business. That might be more teams reaching first value in week one, more accounts adopting a sticky workflow, fewer support tickets from failed setup, or a higher share of customers using a premium capability tied to expansion.

That pressure is healthy because it exposes trade-offs early. If an objective is tied to faster activation, the team may decide not to ship three smaller requests and instead fix one onboarding step that is blocking conversion. If an objective is tied to retention, the right answer may be less roadmap breadth and more work on reliability, usability, or handoff friction.

The same mistake shows up outside quarterly planning. Teams trying to enhance code review efficiency often track reviews completed instead of the business outcome they wanted, such as shorter cycle time or fewer releases delayed by handoff gaps. Product OKRs fail for the same reason. They count motion and miss the result.

Your OKRs will hold up if they start with business risk, translate that risk into customer behavior, and only then define the work. That is how goals stop being administrative overhead and start acting like a profit filter for the roadmap.

From 'Ship It' to 'Shift It': Outcomes Over Outputs

Product teams love shipping because shipping feels final. A feature launched. A ticket closed. A release announced. Outputs create visible progress, and visible progress is comforting.

But customers don't buy outputs. They buy solved problems, saved time, lower risk, and better results. That's why the shift from outputs to outcomes is the central habit behind useful OKRs for product managers.

The feature factory problem

An output mindset sounds like this:

- Launch a new dashboard

- Ship the integrations page

- Run a pricing test

- Complete onboarding redesign

None of those statements tell you whether the work mattered. A team can deliver every item on time and still miss the quarter.

An outcome mindset sounds different. It asks what should change because the work exists. Did users reach value faster? Did more accounts adopt the capability? Did fewer customers contact support? Did expansion conversations become easier? Those are product questions with business consequences.

Shipping is a means. Product managers get judged on whether behavior moved after the release.

A simple test for every draft OKR

Take any proposed key result and ask one question: if the team hits this, would a CFO care?

That doesn't mean every KR has to be a finance metric. It means every KR should connect to a chain of evidence that reaches revenue, churn, or cost. Feature adoption can matter. Retention can matter. Support deflection can matter. But only if the team can explain why the metric represents business value instead of local optimization.

Modern tooling helps. Analytics platforms, CRM data, support systems, and product intelligence tools can connect what customers say to what they do. In practice, that means fewer roadmap debates driven by the loudest anecdote and more decisions anchored in behavior patterns, friction points, and account impact.

What to stop writing

Product teams usually know weak OKRs when they see them, but they keep writing them because they're easy to defend.

Stop writing key results like these:

- Conduct customer interviews

- Launch feature X

- Create sales enablement assets

- Improve stakeholder alignment

Those are important activities. They're not proof of impact. Activities belong in the initiative plan, not in the key results.

How to Define Your Product's North Star Objectives

The strongest objectives don't come from brainstorming sessions. They come from pressure. Pressure from churn, stalled expansion, weak activation, support burden, competitive gaps, or a strategic move the company has already committed to.

Product managers should set one to five ambitious objectives per quarter, starting with a focal objective tied to the product's North Star metric, such as reducing churn, rather than one defined only by feature launches. Managing more than five objectives dilutes focus and leads to worse outcomes, according to monday.com's guidance on OKRs for product management.

Use the three-direction test

I use a simple test before approving any objective. Look up, look sideways, and look inward.

- Look up at company strategy: If the company needs stronger retention, the product objective can't drift into low-stakes polish work. The objective has to support the company goal directly.

- Look sideways at cross-functional reality: Product goals fail when they ignore dependencies. Marketing may need a cleaner activation flow. Sales may need proof points for a new segment. Support may be drowning in preventable ticket categories.

- Look inward at the product thesis: The objective should reflect what kind of product you're trying to build. If your product wins on speed, don't write an objective centered on breadth for the sake of breadth.

A North Star objective sits where those three lines intersect.

What a strong objective sounds like

An objective should be qualitative, directional, and demanding. It shouldn't read like a task list or a metric dump.

Better examples:

- Make first value unmistakable for new users

- Turn the product into a stronger retention engine for SMB accounts

- Remove the friction blocking expansion in high-intent accounts

- Create a support experience that reduces avoidable churn risk

Weak examples usually expose a team that's still thinking in deliverables:

- Launch onboarding improvements

- Release enterprise feature set

- Improve reporting

- Refactor billing

Keep the count low and the stakes high

Many teams don't struggle because they lack ambition. They struggle because they split attention across too many priorities. Once a team has too many objectives, every roadmap argument becomes winnable and nothing gets real force behind it.

Use a single focal objective when the business is under pressure. Add more only when they represent distinctly different value streams and the team can still protect focus. If you need help clarifying the metric that should anchor the objective, this guide to a product North Star metric is a useful reference point.

A good objective creates productive constraint. It tells the team what matters now, and just as important, what doesn't.

Crafting Key Results That Actually Measure Impact

A product objective can sound sharp and still fail the business. The failure usually sits in the key results.

I see the same pattern every planning cycle. Teams choose KRs that are easy to update in a slide deck and impossible to tie back to customer behavior, retention, or revenue. "Launch onboarding v2" gets marked green. Churn stays flat. Expansion does not move. The team shipped work, but the business got no proof of value.

Start with a format that removes wiggle room

Use a simple structure:

Verb + metric + starting point + target + timeframe

Examples:

- Reduce onboarding drop-off from 30% to 20% this quarter

- Increase trial-to-paid conversion from 18% to 22% by quarter end

- Grow the share of active accounts using the reporting workflow from 35% to 50%

- Cut time-to-first-value for new admins from 3 days to 1 day

That structure does two useful things. It forces a baseline, so nobody hides behind relative improvement language. It also forces a target, so the team has to decide what meaningful change looks like before work starts.
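The format above also makes progress easy to compute, because a baseline and a target define a distance the metric has to travel. Here is a minimal sketch in Python, assuming a hypothetical `KeyResult` class (the name, fields, and the clamped linear progress formula are illustrative choices, not part of any OKR standard):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyResult:
    """One key result: verb + metric + starting point + target + timeframe."""
    verb: str
    metric: str
    baseline: float   # starting point
    target: float
    timeframe: str
    unit: str = "%"

    def statement(self) -> str:
        """Render the key result as a single sentence."""
        return (f"{self.verb} {self.metric} from {self.baseline:g}{self.unit} "
                f"to {self.target:g}{self.unit} {self.timeframe}")

    def progress(self, current: float) -> float:
        """Share of the baseline-to-target distance covered, clamped to [0, 1].

        Works for metrics that should fall (drop-off) or rise (conversion).
        """
        span = self.target - self.baseline
        if span == 0:
            raise ValueError("target must differ from baseline")
        return max(0.0, min(1.0, (current - self.baseline) / span))


kr = KeyResult("Reduce", "onboarding drop-off", 30, 20, "this quarter")
print(kr.statement())            # Reduce onboarding drop-off from 30% to 20% this quarter
print(f"{kr.progress(24):.0%}")  # 60%
```

A task like "Launch onboarding v2" can't be expressed in this shape at all, because it has no baseline and no target. That's a quick way to catch an output dressed up as a key result.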

A useful rule from earlier OKR guidance still holds here. Good KRs describe a measurable shift, not a completed task. Keep that standard.

Pick metrics that map to value, not motion

The best KRs sit close to one of five signals:

- Activation, whether new users reach first value

- Adoption, whether a capability changes repeated behavior

- Retention, whether customers keep getting value over time

- Expansion, whether product usage creates more revenue potential

- Efficiency, whether the experience reduces support cost or friction

Satisfaction can belong here too, but only when it connects to something commercial. A CSAT increase on its own is weak. A CSAT increase paired with fewer cancellation requests or fewer support contacts is far more useful.

Product judgment matters. A PM can make almost any roadmap item look successful if the KR is vague enough. A disciplined PM chooses the metric that could prove the idea wrong.

Output KRs create false confidence

Here is the test I use. If a KR can hit 100% while customer behavior stays the same, it is probably an output. Rewrite it.

| Goal | Weak KR | Strong KR |
|---|---|---|
| Improve onboarding | Launch new onboarding flow | Reduce the percentage of signups who abandon setup before activation |
| Increase engagement | Ship notifications feature | Increase weekly active usage among previously inactive accounts |
| Improve retention | Release admin dashboard | Reduce churn risk in accounts with low weekly usage |
| Grow expansion revenue | Publish new pricing page | Increase upgrade conversion from eligible accounts |
| Reduce support load | Release self-serve billing tools | Cut billing-related support tickets per 100 accounts |

The difference matters more than teams admit. Output KRs reward shipping. Outcome KRs force product, design, and engineering to ask whether the change improved the business.

Pair lagging metrics with early proof

Revenue and churn matter, but they move slowly. If those are your only KRs, you usually discover failure too late to correct it.

Pair the lagging metric with a leading indicator the team can influence every week. For a retention objective, that might be activation of a sticky workflow, repeat usage in the first 14 days, or a drop in support tickets tied to a known friction point. For an expansion objective, it might be increased usage in seats, projects, or integrations that historically show up before an upgrade.

That pairing is what turns OKRs into a decision tool instead of a quarterly scorecard. It also keeps the team honest. Leading indicators tell you whether the work is changing behavior now. Lagging indicators tell you whether that behavior translated into money later.

If your instrumentation is weak, fix that before you argue about targets. A clean product metrics and reporting framework gives you the baseline, segmentation, and reporting cadence needed to write KRs that survive scrutiny.

Build key results from the revenue path

In SaaS, every meaningful KR should connect to one of three questions.

- Did more of the right users reach value?

- Did more accounts stay because the product became harder to leave?

- Did more customers expand because usage proved willingness to pay?

That lens cuts a lot of fluff. "Achieve feature parity" is not a business result. "Increase win rate in competitive deals where reporting gaps blocked purchase" is much closer. "Improve NPS" is broad and often toothless. "Reduce cancellation requests from accounts that contact support about billing friction" gives the team something real to change.

I have made the opposite mistake before. We once wrote a KR around shipping a major workflow redesign because everyone agreed it was important. The release landed on time. Adoption barely moved because the underlying problem was data setup, not interface clarity. A better KR would have tracked setup completion, first successful report, and conversion among accounts that reached that point. The team would have found the bottleneck in two weeks instead of one quarter.

For teams that struggle to keep goals concrete, TimeTackle's goal setting insights are a useful complement, especially when planning sessions drift toward ambition without measurement discipline.

A strong key result proves the product changed customer behavior in a way the business can monetize.

Your Quarterly OKR Planning and Review Workflow

A quarter with good OKRs has a rhythm. Without that rhythm, even well-written goals decay into static text. Teams stop updating them, leadership stops trusting them, and roadmap decisions drift back to whoever shouts loudest.

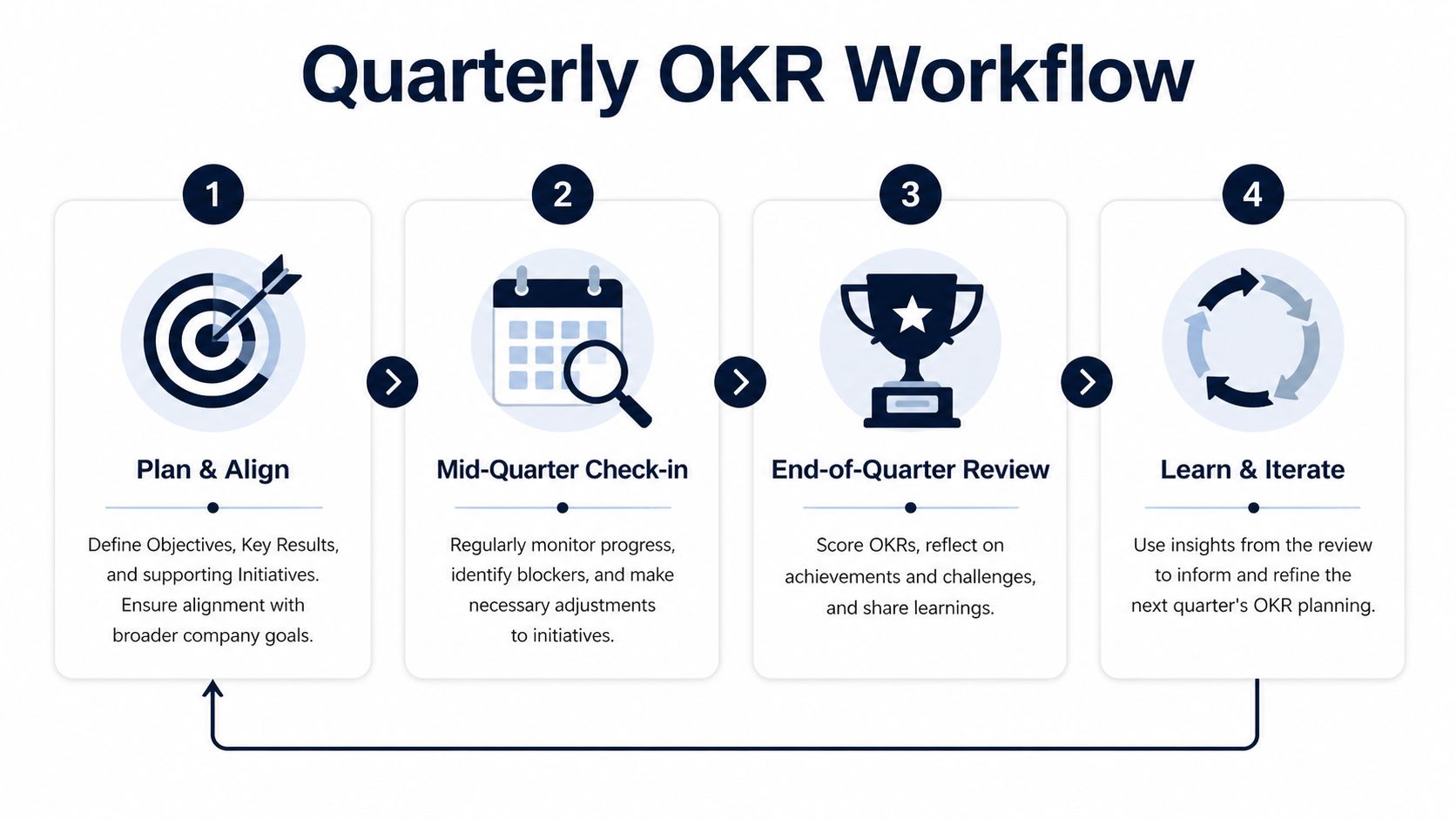

The workflow matters because OKRs aren't just a planning artifact. They're a decision system.

The quarter in motion

A healthy cycle usually looks like this:

- Plan and align: Product, engineering, and partner teams draft objectives and key results together, which exposes weak dependencies early.

- Weekly check-ins: The team updates progress, confidence, and blockers. The useful part isn't the score. It's the conversation about whether the current approach is still the right one.

- Mid-quarter adjustment: Some initiatives should change when the evidence changes. Adaptive teams learn faster than rigid ones.

- End-of-quarter review: Score the OKRs, capture what was learned, and decide what carries forward.

Why rigid OKRs break under real market conditions

A rigid quarterly plan often looks disciplined from the outside and disconnected from reality on the inside. That gets worse in expansion work, where local usage patterns, customer expectations, and support pressure can shift faster than a centrally planned roadmap.

A 2025 McKinsey Global Survey cited in Aakash Gupta's product OKR examples says 62% of SaaS PMs expanding to new markets face 28% higher churn from unlocalized priorities. The same source notes that rigid, non-adaptive OKRs can reduce agility by 19%, while adaptive OKRs reviewed and adjusted quarterly can boost revenue by 33%.

That doesn't mean objectives should wobble every week. It means initiatives should. The target can stay clear while the route changes.

Score the quarter without creating fear

Many teams benefit from treating OKR review as an operating discussion, not a performance trial. Stretch goals only work when people can openly report yellow or red.

The scoring conversation should answer questions like these:

- Did the metric move for the reason we expected?

- Which initiatives created evidence worth repeating?

- Which assumptions failed early enough to teach us something useful?

If the roadmap and OKRs live in separate universes, fix that first. A product roadmap should show the bets that support the OKRs, not a detached sequence of outputs. This guide to product roadmap development is useful if your team still plans features before defining the result they need.

Good reviews don't ask, "Who missed?" They ask, "What did we learn about what drives value?"

High-Impact SaaS OKR Examples for Growth and Retention

Bad OKR examples train teams to admire tidy wording instead of business movement. Good ones force a harder question. Did customer behavior change in a way that improves retention, expansion, or revenue?

That standard matters in SaaS because growth and retention are tightly linked. A team can hit a launch milestone, drive trial signups, and still miss the quarter if those users never reach repeat value or convert into durable revenue.

Growth OKR for early traction

Objective: Prove that new users reach enough value to come back.

Key results

- Achieve 40% user retention after 8 weeks

- Reach 100 weekly leads

- Grow weekly active users from 15 to 100

- Secure 5 paid customers while maintaining NPS above 40

I like this structure because it prevents the usual early-stage mistake. Teams ship onboarding changes, call the work done, and never test whether those changes increased repeat usage or willingness to pay. This objective keeps the bar where it belongs: on behavior and revenue signals.

The trade-off is focus. If one team owns activation, another owns acquisition, and nobody owns conversion quality, this OKR gets noisy fast. In practice, I would narrow ownership and review the funnel weekly so lead volume does not hide weak product value.
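A target like "40% user retention after 8 weeks" only works if everyone computes it the same way. Here is a minimal sketch in Python, assuming a hypothetical `week_n_retention` helper and one possible definition: a user counts as retained if they were active on any of days 49 through 55 after signup. Your analytics tool may define the window differently, so treat the definition as an assumption to agree on, not a standard.

```python
from datetime import date, timedelta


def week_n_retention(signup_dates, activity_dates, week=8):
    """Share of a signup cohort that was active during week `week`.

    signup_dates:   {user_id: signup date}
    activity_dates: {user_id: set of dates with any activity}
    Assumed definition: week 8 covers days 49 through 55 after signup.
    """
    if not signup_dates:
        return 0.0
    retained = 0
    for user, signed_up in signup_dates.items():
        start = signed_up + timedelta(days=7 * (week - 1))
        end = start + timedelta(days=7)  # exclusive upper bound
        if any(start <= day < end for day in activity_dates.get(user, ())):
            retained += 1
    return retained / len(signup_dates)


# Illustrative cohort of five users who all signed up on the same day.
signup = date(2025, 1, 6)
signups = {user: signup for user in ("a", "b", "c", "d", "e")}
activity = {
    "a": {signup + timedelta(days=50)},  # inside week 8
    "b": {signup + timedelta(days=55)},  # last day of week 8
    "c": {signup + timedelta(days=20)},  # active early, then gone
    "d": {signup + timedelta(days=56)},  # first day of week 9, not counted
}
rate = week_n_retention(signups, activity)
print(f"{rate:.0%}")  # 40%: two of five users were active in week 8
```

Note that user "c" was active early and still counts as lost. That is the point of the metric: early activity without repeat value doesn't move it.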

Retention OKR for support-heavy products

Objective: Remove the product friction most likely to trigger avoidable churn.

Key results

- Reduce support tickets by 80% after the feature launch

- Hold monthly churn for SMB segments at or below 5%

- Increase supported integrations by 50%

- Achieve 4.5/5 customer ratings for the integration experience

This is the kind of OKR that works when support pain is not just an operational problem but a revenue problem. If integration confusion drives ticket spikes, slows onboarding, and pushes smaller accounts to cancel, product should treat that friction as a churn lever.

One practical option is to use a system that combines support tickets, chat transcripts, sales calls, and usage patterns to identify issues correlated with churn and expansion. According to the company, SigOS does this and reports how those signals correlate with churn or expansion patterns.

Expansion OKR for monetization

Objective: Improve monetization without damaging customer trust.

Key results

- Target 15% ARPU uplift through pricing optimization

- Drive 10% MRR growth via upsells

- Migrate 10% of customers to new pricing models

Pricing OKRs fail when teams measure the rollout instead of the response. A new pricing page shipped on time means very little if win rates drop, upgrade confusion rises, or customer success spends the month calming angry accounts.

The better approach is to pair commercial upside with a guardrail. ARPU and MRR growth are strong targets. They should sit next to churn, downgrade rate, sales cycle impact, or expansion conversion so the team can see whether monetization improved the business or just shifted pressure downstream.
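The guardrail pairing can be made explicit in how the quarter gets scored. Here is a minimal sketch in Python, assuming a hypothetical `Guardrail` class and `monetization_verdict` function. The 15% ARPU target comes from the example above. The churn and upgrade-conversion limits are made-up illustration values, not benchmarks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrail:
    """A metric that must stay on the right side of a limit."""
    name: str
    current: float
    limit: float
    higher_is_worse: bool = True  # True for churn, False for conversion floors

    def breached(self) -> bool:
        if self.higher_is_worse:
            return self.current > self.limit
        return self.current < self.limit


def monetization_verdict(uplift_pct, target_pct, guardrails):
    """Judge the commercial target and its guardrails together."""
    breached = [g.name for g in guardrails if g.breached()]
    hit = uplift_pct >= target_pct
    if hit and not breached:
        return "target met, guardrails intact"
    if hit:
        return "target met, but pressure shifted to: " + ", ".join(breached)
    if breached:
        return "target missed and guardrails breached: " + ", ".join(breached)
    return "target missed, guardrails intact"


verdict = monetization_verdict(
    uplift_pct=16.0,   # hypothetical measured ARPU uplift
    target_pct=15.0,   # ARPU uplift target from the key result
    guardrails=[
        Guardrail("monthly churn", current=6.2, limit=5.0),
        Guardrail("upgrade conversion", current=3.1, limit=3.0,
                  higher_is_worse=False),
    ],
)
print(verdict)  # target met, but pressure shifted to: monthly churn
```

In this made-up quarter the pricing work would be scored green on ARPU alone. Scoring it next to churn shows the cost was pushed downstream.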

Satisfaction OKR for post-launch quality

Objective: Make a newly launched capability feel dependable enough to become part of the workflow.

Key results

- Achieve 4.5/5 customer ratings

- Reduce support tickets by 80% post-launch

- Partner with three key platforms

This type of OKR is useful after launch, when the key question is adoption depth. Customers do not build habits around a feature because it exists. They build habits when it works reliably, fits their workflow, and solves a problem often enough to earn repeat use.

The pattern across all four examples is simple. Every key result ties back to customer behavior, customer sentiment, or commercial performance. That is the difference between OKRs that drive profit and OKRs that decorate a planning doc.

Beyond the Framework: Making OKRs Your Superpower

The best OKRs for product managers don't create more process. They create better product judgment. They make it easier to say no to roadmap clutter, easier to align cross-functional teams, and easier to explain why a product decision matters in business terms.

That's the primary upgrade. Not prettier goals. Better decisions under constraint.

When a PM learns to anchor objectives to the North Star, write key results around customer behavior, and run a steady quarterly review rhythm, the team stops managing by hope. It starts managing by evidence. That changes how features get prioritized, how trade-offs get resolved, and how product earns credibility with leadership.

Start smaller than you think. Pick one objective that matters. Tie it to a real business problem. Write key results that would still matter if no one praised the team for shipping. Then run the quarter truthfully.

If the OKR doesn't change what gets built, what gets delayed, or what gets discussed in weekly reviews, rewrite it.

If your team wants clearer visibility into which customer issues and feature requests affect churn, expansion, and revenue, take a look at SigOS. It helps product teams turn noisy feedback and usage data into measurable priorities that support stronger OKRs.
