Prioritization of Projects: A Data-Driven Guide

Learn the prioritization of projects from A to Z. Compare methods like RICE, avoid common pitfalls, and see how to use data to make revenue-driving decisions.

Monday starts the same way in a lot of SaaS teams. Sales wants a feature for a deal that's stuck. Support wants the bug that's driving ticket volume fixed now. Marketing needs launch work pulled forward. Engineering is asking for time to clean up an area everyone knows is fragile. Then leadership adds one more request that "can't wait."

By noon, the roadmap hasn't become clearer. It's become louder.

That chaos usually isn't a people problem. It's a system problem. Teams aren't failing because they lack urgency or commitment. They're failing because they don't have a shared way to decide what matters most, what can wait, and what should be stopped entirely. In practice, prioritization of projects is less about sorting tasks and more about protecting focus.

Why Your Team Is Drowning in 'Top Priorities'

The fastest-growing teams often create this problem themselves. They say yes to customers, yes to internal stakeholders, yes to strategic bets, and yes to cleanup work. For a while, that flexibility feels like responsiveness. Then the backlog turns into a pile of competing promises.

That pile gets worse when capacity is already tight. Over 50% of project practitioners identified resource management as their top challenge, and 77% reported insufficient resources to meet project demand, according to Epicflow's review of project prioritization data. This is the normal operating environment for many teams: not abundant capacity, but managed scarcity.

What chaos looks like in real SaaS teams

A chaotic roadmap usually has familiar symptoms:

- Sales-driven spikes: A prospect asks for something specific, and the request jumps the queue without any comparison against retention work, reliability issues, or broader product goals.

- Support fatigue: Ticket volume becomes a proxy for importance, even when the loudest problem affects a narrow slice of users.

- Executive overrides: A senior leader asks for a dashboard, integration, or launch item, and no one feels comfortable asking what gets delayed to make room.

- Engineering whiplash: Teams start one initiative, pause it for another "urgent" item, then revisit the first one later with more context switching and less momentum.

None of this is unusual. It's what happens when a company treats prioritization as a meeting instead of an operating system.

Practical rule: If every stakeholder can create a top priority, your team has no priority system. It has an escalation system.

Why effort alone doesn't fix it

Teams often try to solve the problem with more effort. They add more planning meetings, more status reviews, more Slack threads, and more executive check-ins. That doesn't solve the core issue. It just increases the cost of indecision.

The shift happens when the team accepts a hard truth. Not everything important can happen now. Once that becomes explicit, prioritization of projects stops being political theater and starts becoming a disciplined trade-off process.

Thinking Beyond a Simple To-Do List

A backlog isn't a strategy. It's inventory.

Strong teams treat prioritization more like air traffic control than list management. An air traffic controller doesn't just line up planes in the order they appear. They account for fuel, weather, runway availability, route conflicts, and what has to land safely first. Product and project leaders face the same kind of dynamic system. Every initiative carries a different mix of value, risk, dependency, and timing.

The difference between sorting and steering

A simple to-do list answers one question: what's next?

Good prioritization answers harder questions:

- What creates the most value if we do it now?

- What blocks other work if we delay it?

- What looks attractive but pulls us away from strategy?

- What exceeds our actual team capacity?

- What deserves a place on the roadmap at all?

That last question matters most. Teams often debate sequencing before they've decided whether the work belongs in the portfolio.

For teams that are still building that muscle, a feature prioritization matrix for product decisions is a useful bridge. It forces comparison. It makes trade-offs visible. It also exposes how often teams confuse urgency with value.

Capacity changes the answer

A project can be worthwhile and still be the wrong choice right now. That's where many roadmap discussions go off track. Stakeholders assume the debate is about whether an idea is good. The key question is whether it's the best use of constrained capacity at this moment.

Prioritization gets cleaner when teams stop asking, "Do we like this idea?" and start asking, "What are we choosing not to do if we commit to it?"

This is why prioritization of projects has to be continuous. Inputs change. Customer behavior changes. Dependencies move. A project that ranked high last quarter can drop if the evidence weakens, if effort expands, or if another issue starts threatening retention or revenue more directly.
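The capacity question can be made concrete with a small sketch. The example below is a simple greedy heuristic, not an optimal portfolio solver: it ranks candidates by value per unit of effort, fills the available capacity, and returns the deferred list alongside the selected one so the trade-off stays visible. The project names, value scores, effort units, and the `plan` function are all hypothetical.

```python
def plan(candidates, capacity):
    """Greedy selection: take the best value-per-effort items that still fit.

    Returns (selected, deferred) so the cost of each commitment is explicit.
    This is a heuristic; it does not guarantee the highest total value.
    """
    ranked = sorted(candidates, key=lambda c: c["value"] / c["effort"], reverse=True)
    selected, deferred = [], []
    remaining = capacity
    for candidate in ranked:
        if candidate["effort"] <= remaining:
            selected.append(candidate["name"])
            remaining -= candidate["effort"]
        else:
            deferred.append(candidate["name"])
    return selected, deferred


# Hypothetical candidates: value is a relative score, effort is in person-weeks.
candidates = [
    {"name": "Churn-risk alerting", "value": 90, "effort": 6},
    {"name": "Checkout bug fix", "value": 40, "effort": 2},
    {"name": "Enterprise dashboard", "value": 50, "effort": 5},
    {"name": "Onboarding polish", "value": 30, "effort": 3},
]

selected, deferred = plan(candidates, capacity=10)
print("Doing now:", selected)
print("Choosing not to do yet:", deferred)
```

With ten person-weeks available, the two strongest candidates consume eight of them, and both remaining items are explicitly deferred rather than silently squeezed in.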

Comparing Popular Prioritization Methods

Most frameworks are useful. Few are sufficient on their own.

The right method depends on what you're prioritizing, how much evidence you have, and how disciplined your organization is about trade-offs. Some methods are fast but subjective. Others are more rigorous but take more operational maturity to use well.

Project Prioritization Frameworks Compared

| Framework | Best For | Key Advantage | Potential Downside |
|---|---|---|---|
| RICE | Product features, growth experiments, backlog comparison | Adds numerical discipline through reach, impact, confidence, and effort | Estimates can look precise even when inputs are weak |
| MoSCoW | Release planning, scope negotiation, stakeholder alignment | Fast way to separate critical work from nice-to-haves | Teams often label too many items as must-haves |
| Weighted scoring | Cross-functional portfolios, roadmap planning, executive reviews | Creates a shared scoring model across strategy, ROI, risk, and demand | Requires agreement on criteria and weights before it works |
| Impact-effort matrix | Early triage, workshop settings, idea sorting | Easy to explain and quick to use in group discussion | Doesn't handle dependencies or strategic nuance well |

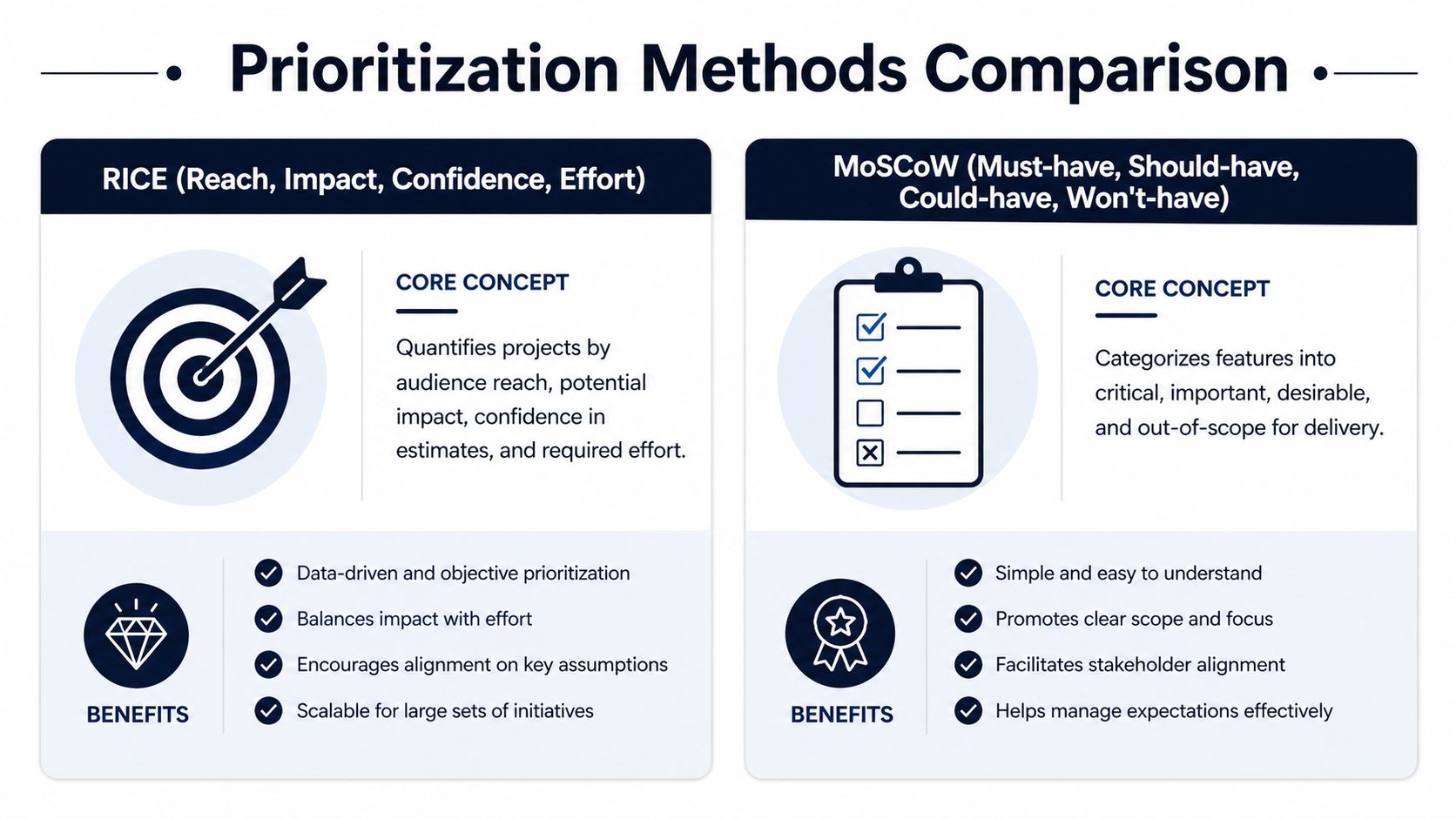

Where RICE works well

RICE is useful when product teams are choosing among many feature requests, bugs, or experiments. The model uses Reach, Impact, Confidence, and Effort. It works because it forces teams to make assumptions visible rather than burying them in opinion.

If a feature affects a broad set of users, has a meaningful business effect, carries strong evidence, and doesn't consume too much effort, it should rise. If it has vague upside and large implementation cost, it should fall. That's a healthier conversation than "enterprise wants it" or "this feels important."

Where MoSCoW helps

MoSCoW is less mathematical and more operational. It splits work into must-have, should-have, could-have, and won't-have. That makes it useful in release planning, especially when teams need a scope boundary that everyone can understand quickly.

The risk is obvious. Without discipline, every stakeholder argues that their request belongs in the must-have bucket. When that happens, MoSCoW becomes a labeling exercise, not a prioritization method.
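One way to keep the must-have bucket honest is to measure how much of the release it consumes. The sketch below is illustrative only: the 60% threshold is an assumption (some MoSCoW guidance suggests a cap in that range, but your team should set its own), and the item list and the `must_have_share` helper are hypothetical.

```python
MUST_HAVE_CAP = 0.60  # assumed threshold; tune to your own release policy


def must_have_share(items):
    """Return the fraction of total effort labeled 'must'.

    items: list of (category, effort) tuples, where category is one of
    'must', 'should', 'could', 'wont'.
    """
    total = sum(effort for _, effort in items)
    if total == 0:
        return 0.0
    must = sum(effort for category, effort in items if category == "must")
    return must / total


# Hypothetical release scope, effort in story points.
release = [("must", 8), ("must", 5), ("should", 3), ("could", 2), ("wont", 0)]

share = must_have_share(release)
if share > MUST_HAVE_CAP:
    print(f"Must-haves are {share:.0%} of effort; the label has stopped meaning anything.")
```

If the check fires, the conversation shifts from "is this a must-have?" to "which must-have moves down?", which is the trade-off MoSCoW is supposed to force.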

Why weighted scoring tends to hold up

For cross-functional prioritization of projects, weighted scoring usually scales better than a single-framework approach. Weighted scoring models evaluate initiatives across criteria like strategic alignment, often weighted at 30 to 40 percent, and expected ROI, often weighted at 20 percent. In its guide to project prioritization frameworks, Triskell notes that using such models can boost on-time delivery by 28% by improving capacity validation and reducing resource waste on low-value work.

That matters in SaaS because product, support, sales, and engineering rarely optimize for the same thing by default. A weighted model gives them a common language.
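A minimal sketch of that common language, under stated assumptions: strategic alignment is weighted at 35% and expected ROI at 20%, in line with the ranges above, while the remaining two criteria (`risk_reduction`, `customer_demand`), their weights, the 1 to 5 rating scale, and the initiative names are hypothetical.

```python
# Hypothetical criteria and weights, agreed once and reused for every initiative.
WEIGHTS = {
    "strategic_alignment": 0.35,
    "expected_roi": 0.20,
    "risk_reduction": 0.20,
    "customer_demand": 0.25,
}


def weighted_score(ratings, weights=WEIGHTS):
    """Combine per-criterion ratings (assumed 1-5 scale) into one score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[criterion] * ratings[criterion] for criterion in weights)


initiatives = {
    "Onboarding redesign": {
        "strategic_alignment": 5, "expected_roi": 3,
        "risk_reduction": 2, "customer_demand": 4,
    },
    "Legacy API cleanup": {
        "strategic_alignment": 3, "expected_roi": 2,
        "risk_reduction": 5, "customer_demand": 2,
    },
}

scores = {name: weighted_score(ratings) for name, ratings in initiatives.items()}
for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {score:.2f}")
```

The function refuses to score an initiative with a missing rating. That is deliberate: a model that silently skips criteria invites the selective scoring it was meant to prevent.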

A useful parallel exists outside product management too. Sales teams that shorten sales cycles with methodologies do the same thing conceptually. They replace improvisation with a repeatable structure. Prioritization works the same way. The framework doesn't remove judgment, but it stops judgment from drifting every week.

Working rule: Use lightweight methods for local decisions. Use weighted scoring when the decision affects shared capacity across multiple teams.

Key Metrics That Drive Smart Decisions

A framework is only as good as the inputs behind it. Teams often say they're using RICE when they're really using confidence theater and effort guesses.

The model itself is straightforward. The RICE scoring model uses the formula (Reach × Impact × Confidence) / Effort, and Atlassian's prioritization guide notes evidence from Triskell showing weighted scoring models like RICE improve delivery performance by 25% by enabling transparent comparisons and reducing subjective bias.
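As a minimal sketch, the formula translates directly into code. The units (reach as accounts per quarter, effort as person-months), the impact scale, and the backlog items are assumptions for illustration, not part of the formula itself.

```python
from dataclasses import dataclass


@dataclass
class RiceInput:
    name: str
    reach: float       # assumed unit: accounts affected per quarter
    impact: float      # assumed relative scale, e.g. 0.25 (minimal) to 3 (massive)
    confidence: float  # fraction between 0 and 1
    effort: float      # assumed unit: person-months, must be positive


def rice_score(item: RiceInput) -> float:
    """Return (Reach x Impact x Confidence) / Effort."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    if not 0 <= item.confidence <= 1:
        raise ValueError("confidence must be between 0 and 1")
    return item.reach * item.impact * item.confidence / item.effort


# Hypothetical backlog items.
backlog = [
    RiceInput("Bulk export", reach=400, impact=1.0, confidence=0.8, effort=2),
    RiceInput("SSO integration", reach=120, impact=2.0, confidence=0.5, effort=4),
    RiceInput("Billing bug fix", reach=900, impact=0.5, confidence=1.0, effort=1),
]

ranked = sorted(backlog, key=rice_score, reverse=True)
for item in ranked:
    print(f"{item.name}: {rice_score(item):.1f}")
```

In this made-up backlog the low-impact billing fix ranks first because it reaches many accounts with certainty and little effort, while the higher-impact SSO work drops on weak confidence and high cost. The score is only as trustworthy as the four inputs behind it.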

Reach isn't "a lot of users"

Reach should come from actual exposure data. In a SaaS environment, that might mean the number of active accounts touching a workflow, the volume of support tickets tied to a bug pattern, or the set of customers blocked in a renewal-sensitive area.

If a team can't point to a real source for reach, the input should be labeled as directional, not treated as hard evidence.

Impact needs a business definition

Impact breaks first because everyone defines it differently. Product may think in adoption. Support thinks in friction reduction. Sales thinks in deals. Finance thinks in revenue durability.

Pick one business lens for each decision cycle and make it explicit. If churn reduction is the priority this quarter, impact should reflect that. If expansion is the focus, use that lens instead. Teams that need a stronger reporting foundation often benefit from tightening their product metrics and reporting practices before they try to score everything.

For leaders trying to connect operational product work to broader company outcomes, this guide to fueling business growth is also useful context. It frames how customer success metrics can support better prioritization rather than sit in a separate dashboard no one uses.

Confidence is where honesty shows up

Confidence shouldn't reward optimism. It should reflect evidence quality.

A healthy confidence conversation sounds like this:

- High confidence: We have usage data, support patterns, and repeatable customer evidence pointing in the same direction.

- Medium confidence: We have partial signals, but causation isn't fully clear.

- Low confidence: This is a strategic bet, intuition-led request, or isolated anecdote.

The point of confidence isn't to make ideas look safer. It's to price uncertainty into the decision.
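One way to keep that honest is to derive the confidence tier from the evidence rather than from the advocate. The sketch below assumes three evidence types taken from the tiers above, and it assumes numeric multipliers of 1.0, 0.8, and 0.5, a common convention rather than a rule. The function name and the cutoffs are illustrative.

```python
# Assumed multipliers; a commonly used convention, adjust to your own model.
CONFIDENCE_BY_TIER = {"high": 1.0, "medium": 0.8, "low": 0.5}


def confidence_tier(usage_data: bool, support_pattern: bool,
                    repeat_customer_evidence: bool) -> str:
    """Map how many independent evidence sources agree to a confidence tier."""
    agreeing = sum([usage_data, support_pattern, repeat_customer_evidence])
    if agreeing == 3:
        return "high"      # all sources point in the same direction
    if agreeing == 2:
        return "medium"    # partial signals, causation not fully clear
    return "low"           # strategic bet, intuition, or isolated anecdote


tier = confidence_tier(usage_data=True, support_pattern=True,
                       repeat_customer_evidence=False)
print(tier, CONFIDENCE_BY_TIER[tier])
```

Because the tier is computed from named evidence, a request cannot be upgraded to high confidence just because its sponsor is certain.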

Effort needs the right level of precision

Don't ask for false granularity. For most roadmap prioritization, relative effort is enough. The useful question isn't whether a project takes a perfectly estimated duration. It's whether this project consumes meaningfully more capacity than the alternatives competing with it.

When teams tighten these four inputs, prioritization of projects stops sounding like advocacy and starts sounding like decision-making.

Building Your Repeatable Prioritization Process

A good framework without a process still collapses under pressure. The teams that get this right build a repeatable cycle that survives executive requests, customer escalation, and quarter-end panic.

Step 1 through Step 3

- Create one intake path: Every request should enter through the same mechanism, whether it comes from sales, support, leadership, or product. If requests bypass the system, the system isn't real. Keep the intake simple: problem, customer or business impact, evidence, urgency, dependencies, and requested timeline.

- Separate intake from commitment: Logging a request shouldn't imply roadmap approval. This sounds obvious, but many teams accidentally treat "we've captured it" as "we're doing it." Separate those two moments clearly.

- Define the scoring criteria once: The criteria shouldn't change every time a politically sensitive request appears. Set the decision model in advance. Revisit it on a planned cadence, not in reaction to individual pressure.
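The first two steps can be sketched as a single record type. The fields mirror the intake list above. The `Status` values, the `commit` method, and the rule that only scored requests can be committed are assumptions about how a team might enforce the separation, not a prescribed workflow.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    LOGGED = "logged"        # captured; no commitment implied
    SCORED = "scored"        # evaluated against the shared criteria
    COMMITTED = "committed"  # explicitly approved for the roadmap
    DECLINED = "declined"


@dataclass
class IntakeRequest:
    problem: str
    impact: str               # customer or business impact
    evidence: str
    urgency: str
    requested_timeline: str
    dependencies: list[str] = field(default_factory=list)
    status: Status = Status.LOGGED

    def commit(self) -> None:
        """Move to the roadmap only after the request has been scored."""
        if self.status is not Status.SCORED:
            raise ValueError("only scored requests can be committed")
        self.status = Status.COMMITTED


# Hypothetical request from a support lead.
request = IntakeRequest(
    problem="CSV export times out for large accounts",
    impact="Blocks monthly reporting for several enterprise customers",
    evidence="Recurring support tickets plus failed-export events in usage logs",
    urgency="high",
    requested_timeline="this quarter",
)
print(request.status.value)  # a new request starts as 'logged', not 'committed'
```

Every request starts as logged, and the only path to committed runs through scoring, so "we've captured it" can never be mistaken for "we're doing it."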

Step 4 and Step 5

- Run a cross-functional review: Product shouldn't score alone. Bring in engineering for feasibility, support for recurring pain patterns, and revenue-facing teams for commercial context. This reduces blind spots and cuts down on post-decision resistance.

- Publish decisions with trade-offs attached: "We're doing X" isn't enough. The mature version is: we're doing X because it scored highly on our current priorities, and choosing it means Y moves later. That last part builds trust because people can see the logic, even when they disagree with the outcome.

What makes the process stick

Most prioritization systems fail for boring reasons:

- No regular cadence: Reviews happen only when the roadmap feels broken.

- No visible archive: Teams can't see how or why decisions were made.

- No stop mechanism: Work gets added faster than work gets removed.

- No ownership: Everyone contributes input, but no one owns the final call.

A workable cadence is one that stakeholders can predict. Some decisions belong in a regular portfolio review. Others belong in a faster weekly operating rhythm for urgent issues. The point isn't to make every decision slow. It's to make every decision legible.

Leadership habit: When a senior stakeholder requests a change, ask which existing commitment should move to make room. That single question keeps the process honest.

The Future Is Automated and Data-Driven

Manual frameworks help. Static prioritization doesn't hold up well in a live SaaS environment.

What changes week to week isn't just backlog size. Customer behavior changes. Ticket patterns shift. Usage drops in one segment and expands in another. A feature request that looked marginal can become urgent when support, sales, and product usage start pointing to the same underlying risk. Organizations often don't catch that fast enough because their prioritization inputs live in separate tools and are reviewed too infrequently.

Why static scoring falls behind

Traditional prioritization methods assume people will gather the evidence, interpret the patterns, update the scores, and push the result into the roadmap. In reality, that often means a monthly or quarterly review powered by stale screenshots and selective anecdotes.

That's why the emerging shift matters. Most existing guides overlook how real-time signals from support tickets and usage metrics can be used to prioritize work by dollar value. The PMO Outlook Report 2023 reports that 92% of projects misalign with business goals. AI platforms may fill part of that gap, with reported correlation accuracy as high as 87% in predicting revenue effects from customer feedback, as summarized in Float's discussion of project prioritization.

The broader move toward evidence-led decisions is worth watching across software teams. This overview of Elyx AI for data-driven insights is useful because it frames the organizational side of the shift, not just the tooling.

What dynamic prioritization looks like in practice

The practical change is simple to describe and harder to build manually:

- Behavioral signals come in continuously from support tickets, chat logs, sales calls, and product usage.

- Patterns get grouped automatically so teams see recurring issues instead of isolated anecdotes.

- Revenue impact is estimated so a bug or request is tied to business consequences, not just volume of complaints.

- Scoring updates flow into execution tools so Jira, Linear, or GitHub reflect current priorities rather than last month's assumptions.
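A rough sketch of the grouping and revenue steps, assuming signals have already been tagged with a theme and joined to account revenue. The signal records, field names, and ARR figures are hypothetical, and a real system would need to do the tagging and joining itself.

```python
from collections import defaultdict


def revenue_at_risk_by_theme(signals):
    """Group signals by theme and sum ARR across the distinct accounts affected.

    Each account counts once per theme, so ten tickets from one customer
    do not outweigh one ticket each from two larger customers.
    """
    accounts_by_theme = defaultdict(dict)
    for signal in signals:
        accounts_by_theme[signal["theme"]][signal["account"]] = signal["arr"]
    totals = {theme: sum(accounts.values())
              for theme, accounts in accounts_by_theme.items()}
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical, pre-tagged signals from different sources.
signals = [
    {"source": "support", "theme": "export timeout", "account": "acme", "arr": 5_000},
    {"source": "support", "theme": "export timeout", "account": "acme", "arr": 5_000},
    {"source": "chat", "theme": "export timeout", "account": "acme", "arr": 5_000},
    {"source": "sales", "theme": "sso login failure", "account": "globex", "arr": 40_000},
    {"source": "usage", "theme": "sso login failure", "account": "initech", "arr": 60_000},
]

for theme, arr in revenue_at_risk_by_theme(signals):
    print(f"{theme}: {arr} ARR across affected accounts")
```

In this made-up data the export issue is louder, with three signals, but the SSO issue touches far more revenue. That is the difference between ranking by complaint volume and ranking by business consequence.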

One platform built around that model is AI for product development. In practice, this kind of system connects qualitative feedback with behavioral data, then pushes quantified prioritization signals into backlog tools instead of leaving teams to reconcile spreadsheets by hand.

The core value isn't automation for its own sake. It's that teams stop debating whose opinion is loudest and start reacting to evidence that updates fast enough to matter. That's where prioritization of projects becomes dynamic rather than ceremonial.

Common Prioritization Pitfalls to Avoid

Even a solid system can get undermined by familiar anti-patterns. The mistake isn't having pressure. Every SaaS team has pressure. The mistake is letting pressure replace judgment.

Anti-patterns and healthy patterns

- Anti-pattern: The HiPPO override. A senior leader jumps the queue without using the same criteria as everyone else. Healthy pattern: Allow executive input, but run it through the same scoring and capacity discussion as any other request.

- Anti-pattern: The squeaky wheel wins. Ticket volume, a single big prospect, or an internal champion dominates the roadmap. Healthy pattern: Distinguish between loud signals and broad signals. Repeated evidence across multiple sources matters more than a single heated thread.

- Anti-pattern: New features always beat maintenance. Teams keep shipping visible work while technical debt, reliability issues, and workflow friction quietly degrade delivery. Healthy pattern: Reserve explicit decision space for infrastructure, quality, and foundational work so they aren't forced to compete only on surface-level excitement.

- Anti-pattern: Analysis paralysis. The team keeps refining the framework and never commits. Healthy pattern: Make the decision with the evidence you have, record assumptions, and revisit on a defined cadence.

The financial cost of getting this wrong isn't abstract. Poor prioritization wastes up to 20% of total project investment through inadequate strategic alignment and resource overload. For a company with a $50 million project budget, that represents $10 million in preventable waste, according to Tempo's analysis of project prioritization.

The best prioritization system isn't the most sophisticated one. It's the one your team will actually use when a hard trade-off shows up on a busy Tuesday.

If your team is still prioritizing through spreadsheets, opinion battles, and fragmented customer feedback, it's worth looking at SigOS. It helps teams turn support conversations, sales signals, and product behavior into revenue-linked prioritization inputs so roadmap decisions reflect what customers do, not just what stakeholders are arguing for.
