A Modern Product Prioritization Framework Guide

Discover the right product prioritization framework to make smarter decisions. Learn to use RICE, MoSCoW, and AI to build roadmaps that drive real revenue.

Think of a product prioritization framework as a structured system that helps your team decide what to build next. It's a way to move beyond gut feelings, subjective opinions, or who shouts the loudest, and instead use an objective, data-driven method to rank every feature and initiative.

The whole point is to make sure your limited development resources are focused on the work that delivers the absolute highest value—both for your customers and for the business.

Why a Product Prioritization Framework Is Your Blueprint for Success

Imagine you’re a city planner trying to decide how to spend a limited budget. Should you build a new hospital, upgrade the subway system, or create a public park? Each option has passionate supporters and clear benefits. Making the wrong call could mean wasting millions and failing to address the community's most urgent needs.

A product prioritization framework is the exact same concept, but for your product. It’s the blueprint you use to make those tough calls.

This kind of system brings order to the chaos that a growing backlog inevitably creates. Without one, teams easily fall into common traps—like building features for the most demanding customer, prioritizing based on the Highest-Paid Person's Opinion (HiPPO), or just tackling whatever seems easiest. This reactive approach almost always leads to wasted effort, a confusing product, and a demoralized engineering team.

From Chaotic Wishlist to Strategic Roadmap

A solid framework is what turns that messy list of ideas into a clear, ranked roadmap. It gives everyone a shared language and a consistent set of criteria to evaluate work, making sure everyone from engineering to marketing is on the same page about what truly matters.

Here’s what that gets you:

- Objective Decision-Making: It pulls emotional bias and internal politics out of the process.

- Improved Transparency: Stakeholders can finally see why certain features are being prioritized over others.

- Strategic Alignment: It ties every feature you build back to your larger business goals.

- Efficient Resource Allocation: You can invest your most precious resource—your team's time—where it will make the biggest splash.

A product prioritization framework isn’t just about making lists; it’s about making commitments. It forces those difficult but necessary conversations about trade-offs and ensures every decision is a deliberate step toward a defined goal, not just a reaction to the latest request.

In this guide, we'll walk through how to use these frameworks to build that crucial alignment. We'll even explore how modern tools like SigOS can supply the data you need—like the real revenue impact of a feature request—to make these frameworks truly work for you.

You can also learn more about how to prioritize a product backlog to better connect your team's work to tangible business outcomes. By grounding your decisions in real data, you can turn prioritization from a source of conflict into a genuine strategic advantage.

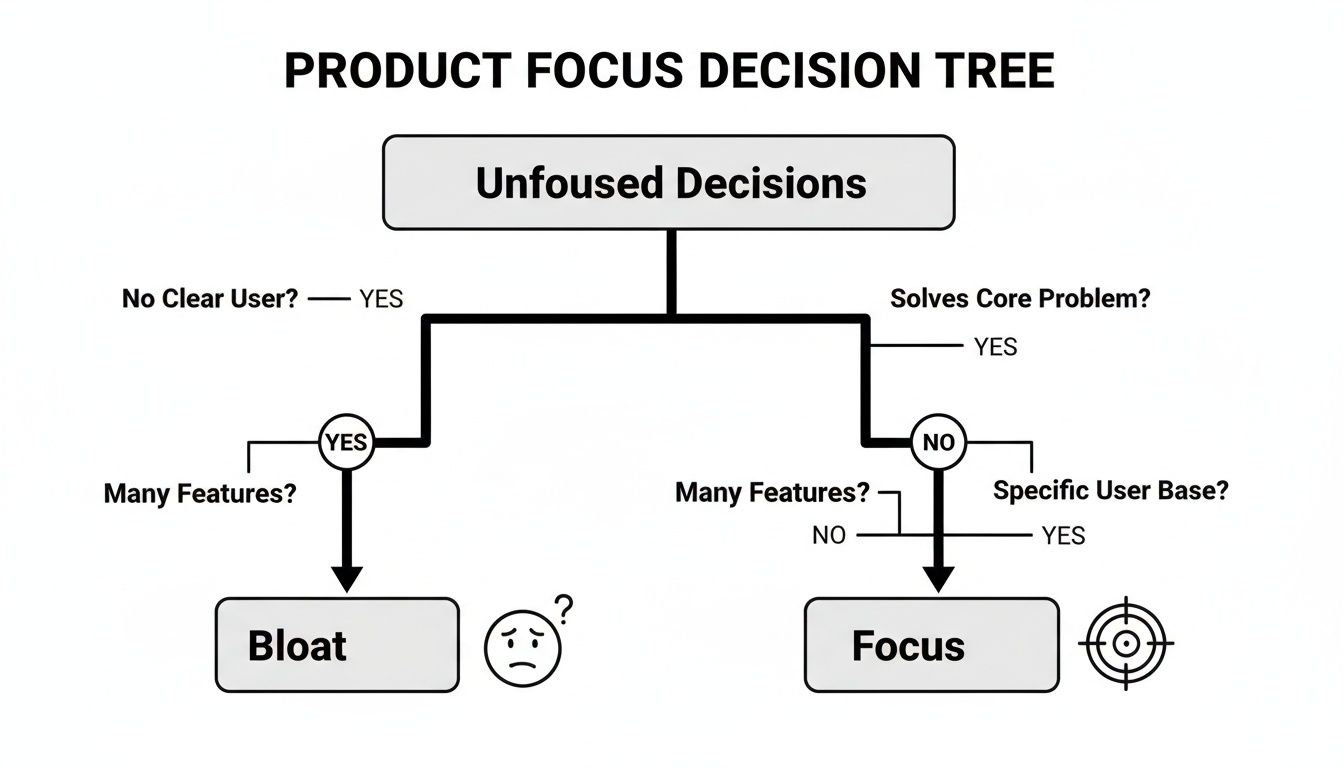

The Hidden Costs of Unfocused Product Decisions

Imagine a promising software company trying to be everything to everyone. The product team gets excited about every new idea and every customer request, no matter how small or niche. On the surface, this looks like they’re being super responsive. In reality, it’s a recipe for disaster.

Before you know it, the product becomes a bloated, confusing mess of half-baked features. It’s a classic "feature factory"—a place where development cycles are spent churning out code, but very little real value is ever created. When you operate without a clear product prioritization framework, your budget vanishes into this black hole of busywork. The true costs, however, go far beyond wasted money; they seep into every corner of the organization.

The Domino Effect of Poor Prioritization

Without a disciplined way to make decisions, a single "yes" to a minor feature can trigger a chain reaction that harms the entire business.

These hidden costs show up in a few key areas:

- Mounting Technical Debt: Adding features haphazardly creates a tangled web of code. It becomes a nightmare to maintain and even harder to build on top of, making future development slower and more expensive.

- Demoralized Engineering Teams: Nothing burns out a talented developer faster than shipping features that nobody uses. When engineers see their hard work consistently fail to make an impact, motivation plummets and turnover skyrockets.

- Rising Customer Churn: A cluttered product is a confusing product. New users get overwhelmed, and existing customers get frustrated when the core problems they hired your product to solve are neglected in favor of random additions.

- Missed Strategic Opportunities: While your team is busy building low-impact features, your competitors are focusing on what truly matters. This lack of focus means you’re always reacting to the market instead of leading it.

This isn't just a theoretical problem. The consequences are tangible and can absolutely threaten a company's survival.

Lessons From a Legendary Turnaround

One of the most powerful examples of ruthless prioritization comes from Apple's dramatic comeback. When Steve Jobs returned in 1997, the company was teetering on the edge of bankruptcy, juggling an absurd portfolio of over 350 different products.

His first move was a masterclass in focus. He slashed the product line down to just 10 core offerings. This wasn't just about cutting costs; it was a disciplined application of prioritization that ultimately saved the company.

This story highlights a critical truth: Saying "no" is often more powerful than saying "yes." The value you create comes not from the volume of features you ship, but from the impact of the right features.

Without this kind of focus, teams fall into a costly trap. In fact, studies show that without a strong product prioritization framework, teams can waste up to 30% of their development cycles on features with a low return on investment. You can find more details on these industry benchmarks in this ultimate guide to product prioritization. That's a massive drain on resources that no company can afford.

Turning Data Into Your Financial Radar

So, how do you escape the feature factory trap? The answer is to shift from subjective opinions to objective data. Instead of guessing which features will move the needle, you need a system that can quantify their potential value. This is where modern tools can completely change the game.

Platforms like SigOS act as a financial radar for your product. By analyzing data from support tickets, sales calls, and user behavior, SigOS can pinpoint which user problems are actively costing you money—either through churn risk or missed expansion opportunities.

It connects customer feedback directly to revenue, allowing you to see which bug fixes or feature requests will have the biggest financial impact. This transforms your prioritization framework from a theoretical exercise into a powerful, data-driven engine for growth, and makes it far less likely that you ship a feature with a low ROI.

Comparing Popular Prioritization Frameworks

Choosing the right product prioritization framework is a lot like a chef picking the right knife. You wouldn't use a cleaver for a delicate garnish, and you wouldn't try to butcher a whole chicken with a tiny paring knife. Each framework is a tool, purpose-built for a specific context, team, and type of decision.

The goal isn't to find the one "perfect" framework to rule them all. It's about understanding the strengths of a few key models so you can pull out the right one for the job at hand.

Let's break down three of the most popular frameworks to see where they really shine—and what trade-offs you're making when you use them.

Without a structured approach, it’s easy to get lost. You end up chasing every new idea, leading to a bloated, unfocused product that doesn't do anything particularly well. A good framework forces clarity.

As you can see, a deliberate framework is what guides a team toward real focus, helping you sidestep the resource drain and customer confusion that an undisciplined "yes to everything" approach inevitably creates.

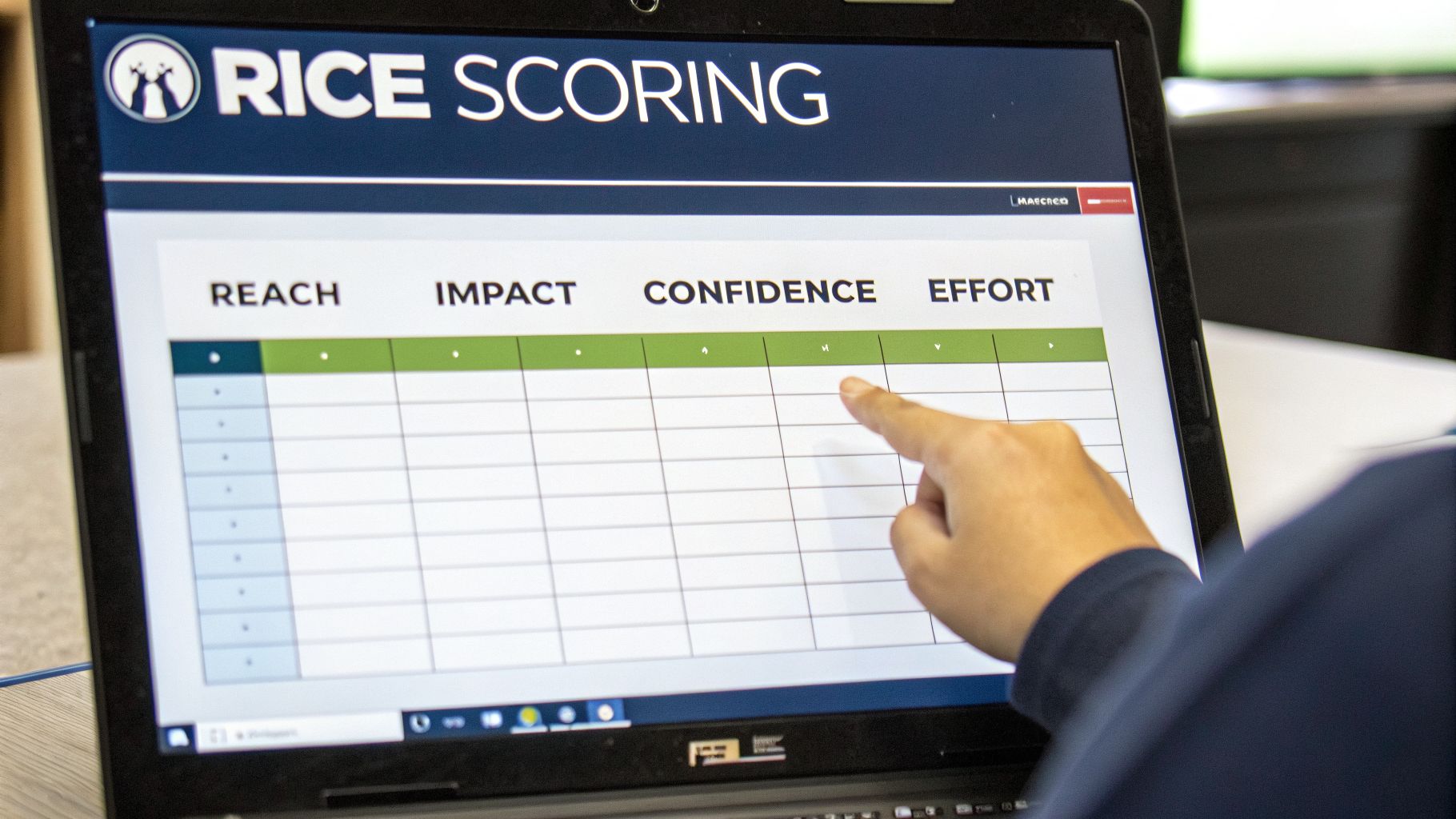

RICE For Data-Driven Teams

The RICE scoring model is a godsend for data-obsessed teams. It’s designed to inject quantitative rigor into what can often be a very subjective process. It works by scoring every initiative across four distinct factors:

- Reach: How many customers will this actually touch in a given period?

- Impact: How much will this move a key metric we care about, like conversion or adoption?

- Confidence: How sure are we about our estimates for Reach and Impact? (This is a great gut check!)

- Effort: How much time will this really cost our product, design, and engineering teams?

You plug these into the formula—(Reach x Impact x Confidence) / Effort—and get a single score. This makes it incredibly easy to stack-rank competing ideas with a healthy dose of objectivity. RICE is perfect for mature product organizations that have solid data and want to cut through the noise of stakeholder opinions.

MoSCoW For Clear Scope and Deadlines

If you're staring down a tight deadline, the MoSCoW method is your best friend. It’s a qualitative framework that excels at defining scope and managing everyone’s expectations by bucketing features into four simple categories:

- Must-have: Absolutely non-negotiable. The product simply can't ship without these.

- Should-have: Important features that add major value but aren't mission-critical for the initial launch.

- Could-have: Those "nice-to-have" features that would be great if there's time and resources left over.

- Won't-have: Items that are explicitly out of scope for this release. This is key for preventing scope creep.

The real power of MoSCoW is its simplicity as a communication tool. It gets everyone on the same page about what’s guaranteed for a release, keeping the team focused and on track. If you need to align stakeholders and define a Minimum Viable Product (MVP), this is a fantastic choice. To see how this fits into the bigger picture, it helps to understand different methods of project management for software engineering like Scrum.

Value vs. Effort For Quick Strategic Sorting

Think of the Value vs. Effort Matrix as the strategist's go-to whiteboard tool. It's a simple 2x2 grid that helps you rapidly sort ideas based on how much customer value they might deliver versus the effort needed to build them.

This framework quickly groups your initiatives into four quadrants:

- Quick Wins (High Value, Low Effort): These are the no-brainers. Do them now.

- Major Projects (High Value, High Effort): These are your big strategic bets that require careful planning.

- Fill-Ins (Low Value, Low Effort): Tackle these when you have downtime, but don't expect them to move the needle.

- Money Pits (Low Value, High Effort): Avoid these like the plague.

This visual approach is perfect for high-level planning sessions or quarterly roadmapping when you need to make fast, directional decisions without getting bogged down in spreadsheets.
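If you'd rather sort a long list in code than on a whiteboard, the four quadrants can be sketched in a few lines of Python. This is a minimal illustration, not a standard implementation: the 1-10 scoring scale, the midpoint threshold of 5, and the example ideas are all assumptions made up for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    value: int   # assumed 1-10 score agreed on by the team
    effort: int  # assumed 1-10 score agreed on by the team


def quadrant(idea: Idea, threshold: int = 5) -> str:
    """Place an idea in one of the four Value vs. Effort quadrants.

    Scores above the (assumed) threshold count as "high".
    """
    high_value = idea.value > threshold
    high_effort = idea.effort > threshold
    if high_value and not high_effort:
        return "Quick Win"
    if high_value and high_effort:
        return "Major Project"
    if not high_value and not high_effort:
        return "Fill-In"
    return "Money Pit"


# Hypothetical backlog items, for illustration only.
ideas = [
    Idea("Saved filters", value=8, effort=3),
    Idea("Billing rewrite", value=9, effort=9),
    Idea("Tooltip copy tweak", value=2, effort=1),
    Idea("Custom theming engine", value=3, effort=8),
]

for idea in ideas:
    print(f"{idea.name}: {quadrant(idea)}")
```

Where you draw the line between "high" and "low" is a judgment call, so treat the threshold as something to agree on with your team before you start scoring.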

Your choice of a product prioritization framework shapes your team's conversations. RICE forces a discussion about data, MoSCoW forces a discussion about scope, and Value vs. Effort forces a discussion about strategy.

Choosing Your Product Prioritization Framework

No single framework is a silver bullet, and experienced teams know this. The best approach often involves mixing and matching models for different contexts. For instance, a team might use the Value vs. Effort matrix for annual planning and then switch to the RICE model for nitty-gritty sprint prioritization. Having a toolkit of different backlog prioritization techniques gives your team the flexibility to adapt to any challenge.

To help you decide, here’s a quick comparison of the top frameworks and where they fit best.

| Framework | Best For | Key Strengths | Potential Weaknesses |
|---|---|---|---|
| RICE | Mature teams with access to quantitative data and a need for objective ranking. | Data-driven; removes bias; forces evidence-based discussions. | Can be slow if data is hard to gather; requires a data-savvy culture. |
| MoSCoW | Time-sensitive projects, MVP definition, and managing stakeholder expectations. | Excellent for scope control; simple to understand; facilitates clear communication. | Can be subjective; risk of too many "Must-haves" if not disciplined. |
| Value vs. Effort | High-level strategic planning, quick decision-making, and team alignment workshops. | Fast and visual; great for sorting large backlogs; easy for anyone to use. | Lacks granularity; "Value" and "Effort" can be highly subjective. |

Ultimately, the best framework is the one your team will actually use consistently. Start with one that feels like a good fit, test it out, and don't be afraid to switch or adapt it as your team and product mature.

How to Use the RICE Scoring Model

So, how do we take the RICE product prioritization framework from a neat theory and turn it into a practical, everyday tool? The real magic of this model is that it forces teams to stop relying on gut feelings and start using quantifiable estimates. It transforms those classic subjective debates into data-backed discussions by making you evaluate every single idea against four clear criteria.

The formula itself is straightforward: (Reach x Impact x Confidence) / Effort. This simple calculation produces a single, comparable score for every initiative on your plate. It gives you a surprisingly objective way to stack-rank your backlog, ensuring the most valuable work naturally rises to the top.
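To make the arithmetic concrete, here is one worked example. The numbers are purely illustrative assumptions: a Reach of 3,000 users per month, an Impact of 2, a Confidence of 80% (written as 0.8), and an Effort of 2 person-months.

$$
\text{RICE score} = \frac{\text{Reach} \times \text{Impact} \times \text{Confidence}}{\text{Effort}} = \frac{3000 \times 2 \times 0.8}{2} = 2400
$$

The score has no meaning on its own. It only becomes useful when you compare it against scores for other initiatives calculated the same way.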

Breaking Down the RICE Formula

To really get the hang of this product prioritization framework, you have to treat each part of the formula as a specific question that demands a real number for an answer. Vague responses just won't cut it—RICE is all about the evidence.

1. Reach: How Many People Will This Affect? Reach is all about the number of unique users or customers who will actually interact with your new feature over a set period, like a month or a quarter. The key here is realism. It’s not about how many people might use it, but how many will, based on your data.

- What to avoid: "This will reach a lot of users."

- What to aim for: "Based on analytics for this user segment, we estimate this feature will be used by 3,000 users per month."

2. Impact: How Much Will This Move the Needle? Impact is where you quantify how much a feature will affect a key business goal when a user engages with it. Will it boost conversions, improve adoption, or cut down on churn? To keep things simple and consistent, many teams use a fixed multiplier scale, in the spirit of t-shirt sizing:

- 3 = massive impact

- 2 = high impact

- 1 = medium impact

- 0.5 = low impact

This is where a tool like SigOS can really shine. It can automate this step by directly connecting feature requests to their potential revenue impact, giving you a data-driven score instead of an educated guess.

Reality Checks and Resource Costs

The last two elements, Confidence and Effort, are what truly ground the RICE framework in the real world. Think of them as essential checks and balances against your team's natural optimism and limited resources.

3. Confidence: How Sure Are You? This is the model's built-in reality check. Confidence is a percentage that shows how certain you are about your Reach and Impact numbers. If you're working with solid, quantitative data, your confidence might be 100%. But if your estimate is more of a hunch, it could be as low as 50%.

A low confidence score is like a brake pedal for exciting but unproven ideas. It forces you to either go find more data or deprioritize the initiative until you can actually prove its value. This one metric is a powerful defense against personal bias.

4. Effort: How Much Time Will It Take? Effort represents the total time your product, design, and engineering teams will need to deliver the feature. The best way to measure this is in "person-months" or "developer-weeks," as this captures the total resources being consumed. While abstract story points can work, tying effort to a time-based unit keeps the estimates grounded.

A SaaS company that adopted the RICE model for a year saw some pretty incredible results: churn dropped by 28%, MRR grew by 42%, and its NPS score shot up by 35 points. As an analysis from Atlassian points out, RICE is effective because it forces clear metrics, shifting debates from "This feels important" to "With 90% confidence, this will reach 10,000 users for a $500,000 uplift." You can explore more of these data-driven prioritization techniques to see how others are doing it.

By making teams bring evidence to the table for each of these four factors, the RICE model helps transform prioritization from an art into more of a science.
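Putting the four factors together, a backlog can be scored and stack-ranked with a short script. Treat this as a minimal sketch under stated assumptions: the `Initiative` class, the validation rules, and the three example initiatives are hypothetical, and Confidence is expressed as a fraction (0.8 for 80%).

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    reach: float       # users affected per period, e.g. per month
    impact: float      # 3, 2, 1, or 0.5 on the impact scale
    confidence: float  # fraction between 0 and 1 (0.8 means 80%)
    effort: float      # person-months; must be positive


def rice_score(item: Initiative) -> float:
    """Compute (Reach x Impact x Confidence) / Effort."""
    if item.effort <= 0:
        raise ValueError("effort must be greater than zero")
    if not 0 <= item.confidence <= 1:
        raise ValueError("confidence must be between 0 and 1")
    return item.reach * item.impact * item.confidence / item.effort


# Hypothetical backlog, for illustration only.
backlog = [
    Initiative("Bulk export", reach=3000, impact=1, confidence=0.8, effort=2),
    Initiative("SSO login", reach=800, impact=3, confidence=1.0, effort=4),
    Initiative("AI summaries", reach=5000, impact=2, confidence=0.5, effort=6),
]

# Highest score first.
ranked = sorted(backlog, key=rice_score, reverse=True)
for item in ranked:
    print(f"{item.name}: {rice_score(item):.0f}")
```

In this made-up backlog, "AI summaries" has the largest Reach but only 50% Confidence and the highest Effort, so it lands below "Bulk export". That is the brake-pedal effect of Confidence at work.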

Applying MoSCoW and Other Qualitative Models

Let's be honest: not every product decision fits neatly into a mathematical formula. While quantitative models like RICE are fantastic for comparing initiatives with clear, hard data, sometimes you need a different approach. Qualitative frameworks are built for those moments—when you need to build alignment with stakeholders and bring order to complex, fast-moving projects.

These models trade precise calculations for structured conversations. They shift the focus from "how much?" to the more fundamental questions of "why?" and "for whom?"

Think of it this way: a quantitative framework helps a chef perfect a recipe by measuring ingredients down to the gram. A qualitative framework helps that same chef and the restaurant owner agree on what kind of food they should be serving in the first place. It's all about setting the right direction and making sure everyone is bought into the vision.

Clarifying Scope with the MoSCoW Method

When a deadline is looming and the pressure is on, the MoSCoW method is one of the best tools you have for managing scope. It forces your team to have the tough, but absolutely necessary, conversations about what is essential for a release. Developed by Dai Clegg at Oracle way back in 1994, it’s a simple system for categorizing every feature or task into one of four buckets.

- Must-have: These are the non-negotiables. Without them, the product or release simply won’t work. Think of them as the foundation of a house—everything else is pointless if the foundation isn't there.

- Should-have: Important features that add real value but aren't critical for the initial launch. These are like the high-end kitchen appliances; they’re great to have, but you can still cook a meal without them.

- Could-have: Desirable but minor improvements. These are your classic "nice-to-have" items, like decorative landscaping, that you only tackle if you have extra time and resources.

- Won't-have: Items that are explicitly ruled out for the current release. This category is surprisingly crucial for preventing scope creep and managing everyone's expectations.
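The four buckets map naturally onto a small data structure. The sketch below is one hypothetical way to record a release plan and check how much of it is sitting in the Must-have bucket; the feature names are invented, and it assumes Python 3.9 or later for the built-in generic type hints.

```python
from collections import defaultdict
from enum import Enum


class MoSCoW(Enum):
    MUST = "Must-have"
    SHOULD = "Should-have"
    COULD = "Could-have"
    WONT = "Won't-have"


def group_by_category(items: dict[str, MoSCoW]) -> dict[MoSCoW, list[str]]:
    """Group feature names under each category, in Must-to-Won't order."""
    grouped: dict[MoSCoW, list[str]] = defaultdict(list)
    for name, category in items.items():
        grouped[category].append(name)
    # Include every category, even empty ones, so gaps are visible.
    return {category: grouped[category] for category in MoSCoW}


# Hypothetical release plan, for illustration only.
release = {
    "User login": MoSCoW.MUST,
    "Checkout": MoSCoW.MUST,
    "Order history": MoSCoW.SHOULD,
    "Dark mode": MoSCoW.COULD,
    "Mobile app": MoSCoW.WONT,
}

grouped = group_by_category(release)
for category, names in grouped.items():
    print(f"{category.value}: {', '.join(names) or '(none)'}")

# A simple discipline check: what fraction of items are Must-haves?
must_share = len(grouped[MoSCoW.MUST]) / len(release)
print(f"Must-have share: {must_share:.0%}")
```

Keeping an eye on the Must-have share is a quick way to notice when everything is quietly being labeled non-negotiable. What counts as "too many" is up to your team.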

The MoSCoW framework has proven its worth time and again in agile settings. Some companies have seen project delays get slashed by up to 40%, with teams successfully shipping 81% more 'Must-haves' on schedule. You can discover more about how MoSCoW drives agile success and see why it’s a go-to for over half of agile teams around the world.

Uncovering Customer Delight with the Kano Model

While MoSCoW is laser-focused on project scope, the Kano Model is all about customer satisfaction. It helps you understand how users will feel about certain features, sorting them into categories based on their emotional response. This framework is perfect for finding opportunities to go beyond just meeting expectations and actually create moments of delight.

The model defines three main types of features:

- Basic Features: These are the absolute minimum. Customers don't even notice them when they're present, but they get incredibly frustrated when they're missing. Think of Wi-Fi in a coffee shop—it’s expected, not celebrated.

- Performance Features: For these, more is always better. The more you invest, the happier your customers become. Faster loading speeds or more storage capacity are perfect examples.

- Excitement Features: These are the unexpected surprises that create true delight and set you apart from the competition. Remember the first time you used a phone that unlocked with your face? That was an excitement feature.

The Kano Model is a powerful reminder that not all features are created equal in the eyes of the customer. Neglecting a 'Basic' feature can cause significant churn, while a single 'Excitement' feature can create passionate brand advocates.

Finding Hidden Opportunities with Opportunity Scoring

Finally, Opportunity Scoring (sometimes called Opportunity Analysis) helps you pinpoint exactly where your customers feel underserved. It's a simple but incredibly effective method rooted in the Jobs-to-be-Done theory. You just ask customers to rate different outcomes on two scales: how important is it to them, and how satisfied are they with the current solution?

By plotting these answers on a chart, you can instantly see which features are high in importance but low in satisfaction. These are your golden opportunities—the areas where any innovation will be hugely valued. It's a great way to avoid over-investing in features that are already good enough.
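If you want a single number instead of a chart, one commonly cited formulation (associated with Anthony Ulwick's Outcome-Driven Innovation work, and not spelled out in this guide) is importance plus the gap between importance and satisfaction, with the gap floored at zero. The sketch below assumes both ratings are averages on a 1-10 scale, and the outcomes listed are hypothetical.

```python
def opportunity_score(importance: float, satisfaction: float) -> float:
    """Importance plus any unmet gap (importance - satisfaction, floored at 0)."""
    return importance + max(importance - satisfaction, 0)


# Hypothetical survey averages: outcome -> (importance, satisfaction).
outcomes = {
    "Export reports quickly": (9.0, 3.0),
    "Customize dashboard colors": (4.0, 7.0),
    "Share results with teammates": (8.0, 6.0),
}

# Highest opportunity first.
ranked = sorted(
    outcomes.items(),
    key=lambda pair: opportunity_score(*pair[1]),
    reverse=True,
)

for name, (importance, satisfaction) in ranked:
    score = opportunity_score(importance, satisfaction)
    print(f"{name}: {score:.1f}")
```

Flooring the gap at zero means an outcome customers are already very happy with never scores below its importance, but it also never gets a bonus. That keeps over-served areas from crowding out the underserved ones.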

Even these qualitative models become exponentially more powerful when you can inject a bit of quantitative data. For example, you could use a tool like SigOS to prove a bug fix is a 'Must-have' by showing how it directly impacts your highest-value customer accounts. Suddenly, a subjective debate becomes a data-backed decision.

How AI Supercharges Your Prioritization Framework

Let's be honest. Any prioritization framework, whether it's RICE or MoSCoW, has a fundamental weakness: the quality of the data you feed it. We all know the old saying, "garbage in, garbage out." And that’s exactly what happens when scoring is based on manual data gathering, which is almost always slow, subjective, and prone to human bias.

This is where AI steps in and completely changes the equation. Instead of relying on educated guesses or a few standout customer anecdotes, AI automates the heavy lifting. It digs through all your data to find the objective, quantifiable insights you need to make your framework genuinely powerful.

From Subjective Guesswork to Objective Data

Think of modern AI platforms like SigOS as the engine that breathes life into your static prioritization spreadsheets, turning them into dynamic, real-time decision systems. Imagine this: no more long, drawn-out debates over a feature's potential "Impact" score. An AI system can analyze thousands of data points—from support tickets and sales calls to user behavior patterns—to give you a clear, data-backed number.

For instance, SigOS can connect a specific feature request not just to a vague feeling of importance, but to a tangible dollar value. It can show you that building this one thing could reduce churn risk or unlock a specific expansion opportunity. Suddenly, the conversation shifts from opinions to outcomes.

AI doesn't replace the product prioritization framework; it validates it. By supplying objective, revenue-centric data, it ensures that scores for impact, reach, and effort are grounded in financial reality, not just team sentiment.

This data-first approach systematically removes the personal bias and "loudest-voice-in-the-room" problem that so often derails manual scoring. An organization’s ability to make smart decisions is directly linked to the data it uses. In fact, companies that use data-driven prioritization are 2.5 times more likely to be high performers, yet many teams still use only about 50% of their available data when making decisions. AI is the key to closing that gap.

Quantifying Every Decision with Real-Time Insights

The true power of AI becomes crystal clear in day-to-day scenarios. Instead of a team agonizing over whether a bug fix is a 'Must-have' or a 'Should-have' in a MoSCoW session, an AI platform can cut right through the noise. It can show you that the bug is directly contributing to $10,000 in monthly revenue loss. The decision just made itself.

This level of insight allows your team to act with incredible precision:

- Spot High-Value Opportunities: AI can surface feature requests that are consistently mentioned by your most valuable accounts, automatically flagging them for your attention.

- Predict Churn Risk: By detecting patterns in user complaints or seeing a dip in engagement, AI can warn you about issues that are actively pushing customers toward the door.

- Validate Effort Estimates: AI can analyze your historical data to help refine effort scores, comparing past estimates with the actual development time for similar tasks.
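The last idea, checking past estimates against actuals, doesn't strictly need AI to get started. Here is a deliberately simple statistical sketch of the principle. It is not how SigOS or any other platform works, and the task history is invented for illustration.

```python
from statistics import median


def calibration_factor(history: list[tuple[float, float]]) -> float:
    """Median ratio of actual to estimated effort across past tasks.

    Each history entry is (estimated, actual) in the same time unit.
    """
    ratios = [actual / estimate for estimate, actual in history if estimate > 0]
    if not ratios:
        raise ValueError("need at least one past task with a positive estimate")
    return median(ratios)


# Hypothetical past tasks: (estimated person-weeks, actual person-weeks).
past_tasks = [(2.0, 3.0), (4.0, 5.0), (1.0, 1.0), (3.0, 4.5)]

factor = calibration_factor(past_tasks)
new_estimate = 2.0
adjusted = new_estimate * factor

print(f"Calibration factor: {factor:.3f}")
print(f"Adjusted estimate: {adjusted:.2f} person-weeks")
```

A factor above 1 suggests the team tends to underestimate. The median is used here rather than the mean so that one badly blown estimate doesn't skew the adjustment.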

As you start using AI for these tasks, it's helpful to understand the AI speed-accuracy trade-off. This helps you strike the right balance between getting quick answers and making sure you have the right data to make the best call. Ultimately, bringing AI into your process supercharges your prioritization framework by connecting every decision directly to your bottom line.

If you want to dig deeper, check out our complete guide on how AI for product development is reshaping the way modern teams build products.

Answering Your Top Questions About Prioritization Frameworks

Even with the best intentions, rolling out a new product prioritization framework isn't always a smooth ride. Let's walk through some of the most common questions and roadblocks teams run into when they shift from making gut-calls to building a more structured, data-backed culture.

How Do I Get Stakeholders on Board with a New Framework?

The key is to show, not just tell. It’s one thing to talk about efficiency; it's another to present hard data on wasted development cycles or features with disappointingly low adoption rates. This highlights the very real cost of your current ad-hoc process.

Frame the new framework as a way to bring transparency and fairness to the roadmap, rather than just another layer of red tape. A great way to do this is by running a small pilot program. Pick a single, contained project and use a straightforward model like Value vs. Effort to score a quick, objective win. Proving the concept on a small scale builds trust and makes the benefits tangible for everyone.

To truly shift the conversation from personal opinions to shared business objectives, anchor discussions in objective data. Showcasing the revenue impact of a decision is the fastest way to achieve alignment.

Can We Combine Different Prioritization Frameworks?

Absolutely. In fact, blending frameworks is often a sign of a mature product team that understands there's no one-size-fits-all solution. Think of it like a photographer choosing the right lens for the shot—a wide-angle for the big picture and a macro lens for the fine details.

For instance, you might use a high-level Value vs. Effort matrix during quarterly planning to set your broad strategic themes. Then, when it comes to the nitty-gritty of sprint planning, you can switch to a more granular model like the RICE framework to rank the initiatives within those themes. It’s all about using the right tool for the job.

What’s the Biggest Mistake Teams Make When Adopting a Framework?

The most common trap is treating the framework's output as an unbreakable rule instead of what it really is: a strategic guide. A score or a category is meant to start a conversation, not end it.

Teams that just blindly follow the numbers without layering on their own context, customer empathy, and strategic judgment often lose sight of the bigger picture. The goal is to inform your decisions with data, not to let a spreadsheet make them for you.

Ready to stop guessing and start quantifying your product decisions? SigOS uses AI to analyze customer feedback and connect every feature request to its real revenue impact. See how much your user problems are actually costing you and prioritize with confidence. Discover the power of data-driven prioritization by learning more about SigOS.
