Unlock Growth with Feedback About Product

Stop guessing. Capture, analyze, and prioritize feedback about product. Quantify its impact on revenue, churn, and growth with our proven playbook.

Product teams rarely have a feedback shortage. They have a sorting problem.

On any given week, feedback about product shows up in Zendesk tickets, Intercom chats, Gong call notes, Slack threads, onboarding calls, app store reviews, G2 comments, and scattered spreadsheets someone promised to clean up later. Everyone says they are listening to customers. Very few teams can prove they are listening to the right customers, at the right moment, with enough context to make a revenue-aware decision.

That gap gets expensive fast. Teams ship what the loudest account asked for. Support escalations hijack the roadmap. A bug that looks minor in isolation drags down activation for a valuable segment. A feature request sounds strategic until you realize it came from users who never adopted the last three things they requested.

The fix is not collecting more comments. The fix is building a system that turns messy language into operational decisions. The strongest teams do three things well: they centralize every useful signal, structure it consistently, and connect it to behavior and commercial outcomes. Once that happens, feedback stops being anecdotal. It becomes a prioritization layer.

Introduction: From Chaos to Clarity

Most product leaders already know where feedback lives. The problem is that each channel tells only part of the story.

Support tickets surface breakage. Sales calls reveal objections and missing capabilities. Reviews show what buyers say publicly when they compare you to alternatives. Internal Slack messages often capture the fastest signal because customer-facing teams start reporting patterns before formal systems do. If these streams stay separate, product ends up reacting to fragments.

That is risky because, according to Lyfe Marketing’s summary of customer feedback research, only 1 in 26 customers will complain directly about a negative product experience. The rest simply leave. In practice, that means the team often sees only the visible edge of a much larger problem.

A workable operating model starts with one shift in mindset. Stop treating feedback as something to review in meetings. Treat it as a data pipeline.

Move from passive collection to active ingestion

Passive collection looks organized on the surface. There is a survey tool, a support inbox, maybe a product board. But each source has its own owner, format, and cadence. Nothing is normalized. Nothing is easy to compare.

Active ingestion is different. Every meaningful signal enters one system continuously, with source metadata attached. The raw text matters, but so does the context around it: account, segment, ARR band, stage, plan type, feature usage, renewal date, and whether this came from a trial user or a long-term customer.

A practical pipeline usually starts with these inputs:

- Support systems: Zendesk, Intercom, Help Scout

- Revenue conversations: Gong, Chorus, call summaries from account teams

- Public review channels: G2, Capterra, app marketplaces

- In-product collection: microsurveys, feedback widgets, onboarding prompts

- Internal escalations: Slack, CRM notes, implementation handoff docs

Tip: If a source influences roadmap conversations today, it belongs in the ingestion layer even if the data is messy.

The goal is simple. Build one stream of customer language that can be analyzed like product telemetry. That is how chaos starts turning into signal.

Building Your Unified Feedback Collection Engine

A unified engine is less about storage and more about disciplined capture. If the data arrives late, incompletely, or without customer context, analysis gets distorted before it starts.

The first rule is to stop debating whether some channels “count.” They do. Public reviews, especially, are not optional. According to MeetYogi’s review and feedback roundup, 98% of consumers consider online reviews an essential part of their purchase decision, and 93% say reviews directly impact buying choices. That is why SaaS teams need G2 and Capterra in the collection layer, not in a separate brand-monitoring workflow.

What to ingest and why it matters

Different channels expose different classes of truth.

| Channel | Best for | Common weakness |
|---|---|---|
| Zendesk and Intercom | Bugs, UX friction, repeated workflow failures | Skews toward existing users already motivated to contact support |
| Gong and sales notes | Deal blockers, procurement objections, enterprise feature gaps | Sales language often compresses nuance into shorthand |
| G2 and Capterra | Competitive perception, unmet expectations, trust signals | Public reviews can lack account-level metadata |
| Slack escalations | Early pattern detection from success and support | Informal language creates duplication |
| In-app prompts and microsurveys | Moment-based sentiment near a workflow | Easy to over-ask and create sampling bias |

That table matters because teams often overweight one source. Support-heavy teams build for current pain. Sales-heavy teams build for prospects. Review-led teams sometimes optimize for perception over retention. A unified engine protects against single-channel bias.

Build a simple collection schema first

Do not start with a giant taxonomy. Start with fields your team will maintain:

- Source: Zendesk, Intercom, Gong, G2, Slack

- Account identifier: Company, user, workspace, or domain

- Lifecycle stage: Trial, onboarding, active, renewal, expansion, churned

- Feedback type: Bug, feature request, confusion, praise, competitor mention

- Product area: Onboarding, billing, integrations, analytics, permissions

- Raw text: The original message or transcript snippet

- Timestamp: So you can analyze changes over time

That structure is enough to support real queries later.
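The fields above can be sketched as a typed record. This is a minimal illustration, not a prescribed schema: the class and enum names (`FeedbackRecord`, `FeedbackType`, `LifecycleStage`) and the sample values are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class FeedbackType(Enum):
    BUG = "bug"
    FEATURE_REQUEST = "feature_request"
    CONFUSION = "confusion"
    PRAISE = "praise"
    COMPETITOR_MENTION = "competitor_mention"


class LifecycleStage(Enum):
    TRIAL = "trial"
    ONBOARDING = "onboarding"
    ACTIVE = "active"
    RENEWAL = "renewal"
    EXPANSION = "expansion"
    CHURNED = "churned"


@dataclass(frozen=True)
class FeedbackRecord:
    """One piece of feedback with the minimum context needed for later queries."""
    source: str                      # e.g. "zendesk", "gong", "g2"
    account_id: str                  # company, user, workspace, or domain
    lifecycle_stage: LifecycleStage
    feedback_type: FeedbackType
    product_area: str                # e.g. "billing", "integrations"
    raw_text: str                    # original message or transcript snippet
    timestamp: datetime


# Hypothetical example record
record = FeedbackRecord(
    source="zendesk",
    account_id="acme.example",
    lifecycle_stage=LifecycleStage.ONBOARDING,
    feedback_type=FeedbackType.BUG,
    product_area="integrations",
    raw_text="Salesforce sync appears incomplete after setup.",
    timestamp=datetime(2024, 5, 1, 9, 30, tzinfo=timezone.utc),
)
```

Using enums for stage and type keeps the values closed, which makes it harder for each team to invent its own spelling of the same label.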

Automation beats cleanup projects

Many teams try to “organize feedback” with periodic manual exports. The result is always the same. A PM or analyst spends hours copying comments into a sheet, tags decay within days, and the backlog becomes a museum of old opinions.

Use integrations instead. Tickets should flow in automatically. Sales call snippets should sync after calls end. Review sites should be checked continuously. If your team is evaluating tooling, a practical reference point is this roundup of Top Customer Feedback Analysis Tools, which is useful for comparing collection and analysis approaches before you commit.

It also helps to document your intake standards in one place. A concise walkthrough like this guide on how to collect feedback from customers is useful internally because collection breaks down when each team invents its own process.

A flexible tag set for SaaS teams

Use tags that map to action, not vague sentiment buckets alone.

- Bug: Something is broken or unreliable

- Feature request: A clear missing capability

- UX friction: The feature exists, but the workflow is confusing or costly

- Adoption blocker: Users are not reaching value because setup or discovery fails

- Competitive gap: Buyers or customers reference another tool’s advantage

- Expectation mismatch: Marketing, sales, and product promise different things

Key takeaway: A good collection engine does not decide priority. It preserves enough context so priority can be decided later with evidence.

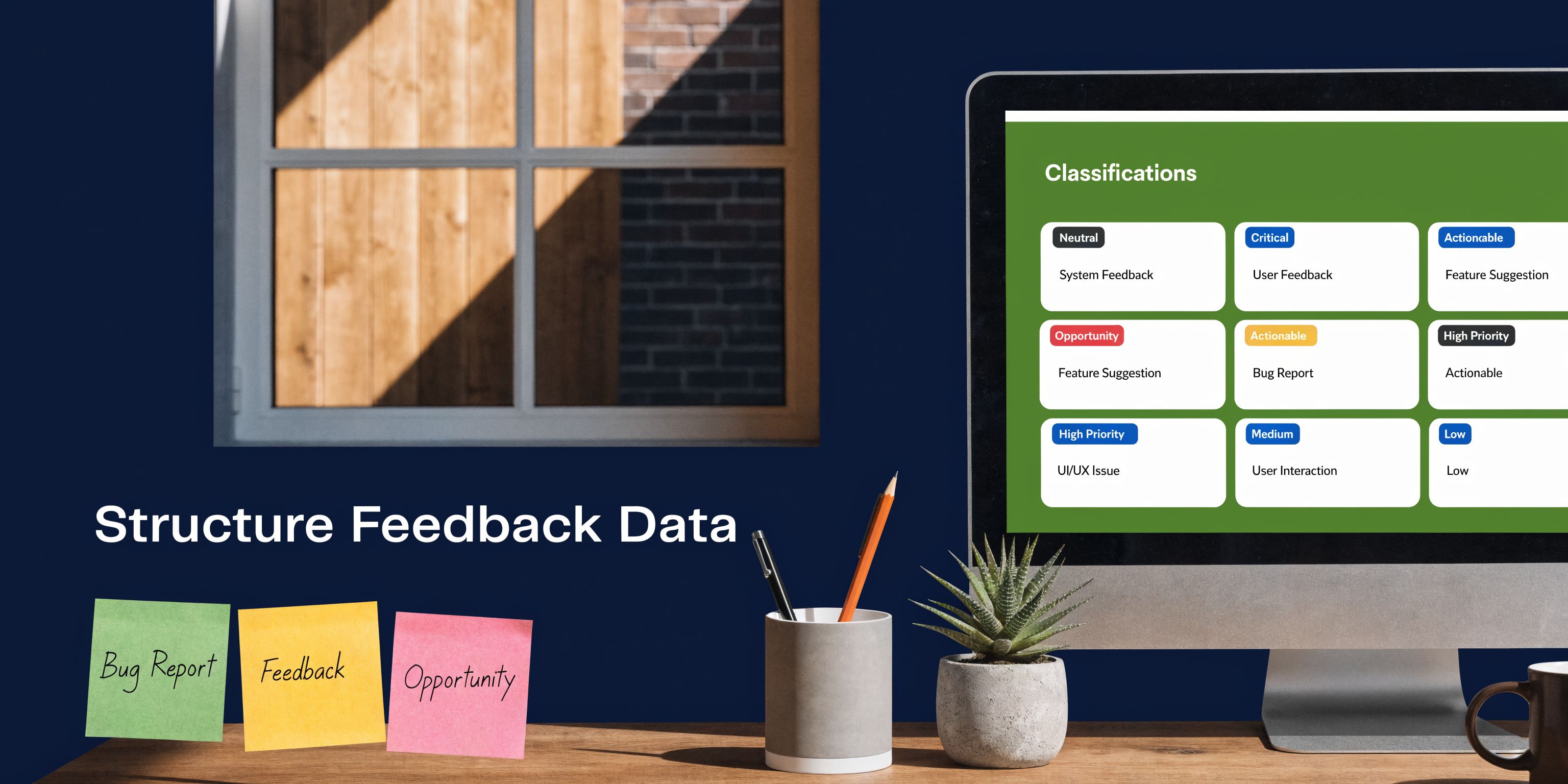

How to Structure Raw Feedback for Analysis

Collection creates a repository. Structure creates a dataset.

Without structure, teams default to weak proxies. They count upvotes. They forward a screenshot into Slack. They remember the last angry enterprise call more vividly than the quieter pattern across smaller accounts. None of that scales, and none of it helps you detect the problems users never describe cleanly.

Use multiple dimensions, not one tag per comment

A single label is rarely enough. “Reporting issue” could mean a defect, performance friction, permission confusion, or an unmet need for custom exports. The structure has to reflect that.

A practical model uses at least these dimensions:

| Dimension | Example values | Why it matters |
|---|---|---|
| Type | Bug, feature request, usability issue, praise | Separates action classes |
| Theme | Dashboard performance, Salesforce integration, billing permissions | Creates roadmap-level grouping |
| Sentiment | Positive, negative, mixed, neutral | Helps interpret the nature of the mention |
| Intent | Complaint, question, comparison, cancellation risk, upgrade request | Clarifies what the customer is trying to achieve |
| Segment | SMB, mid-market, enterprise, trial, partner | Prevents broad conclusions from narrow cohorts |

Once you do this consistently, feedback becomes queryable. You can ask which enterprise accounts mention integration friction with negative sentiment during renewal windows. You can separate workflow confusion from true feature gaps. That is where prioritization gets sharper.
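As a rough sketch of what "queryable" means here, the renewal-window question can be expressed as a filter over tagged rows. The row layout, the account names, the function name `renewal_risk_mentions`, and the 90-day window are all assumptions chosen for illustration.

```python
from datetime import date

# Hypothetical tagged feedback rows joined with renewal dates
feedback = [
    {"account": "acme", "segment": "enterprise", "theme": "salesforce integration",
     "sentiment": "negative", "renewal_date": date(2024, 7, 15)},
    {"account": "globex", "segment": "enterprise", "theme": "salesforce integration",
     "sentiment": "negative", "renewal_date": date(2025, 1, 10)},
    {"account": "initech", "segment": "smb", "theme": "salesforce integration",
     "sentiment": "negative", "renewal_date": date(2024, 6, 20)},
    {"account": "umbrella", "segment": "enterprise", "theme": "dashboard performance",
     "sentiment": "negative", "renewal_date": date(2024, 6, 30)},
]


def renewal_risk_mentions(rows, theme_keyword, today, window_days=90):
    """Return enterprise accounts with negative mentions of a theme
    whose renewal falls within the next `window_days` days."""
    matches = set()
    for row in rows:
        days_to_renewal = (row["renewal_date"] - today).days
        if (
            row["segment"] == "enterprise"
            and theme_keyword in row["theme"]
            and row["sentiment"] == "negative"
            and 0 <= days_to_renewal <= window_days
        ):
            matches.add(row["account"])
    return sorted(matches)


at_risk = renewal_risk_mentions(feedback, "integration", today=date(2024, 6, 1))
```

With this sample data only `acme` matches: the other rows fail on segment, theme, or renewal window. The same filter would be a short SQL `WHERE` clause if the records live in a warehouse.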

Why manual tagging breaks down

Manual tagging works for a while, usually right up until the volume becomes politically important.

The common failure modes are predictable:

- Inconsistent labels: One PM tags “reporting,” another tags “analytics,” support tags “export”

- Missing context: The comment gets copied over, but not the account value or lifecycle stage

- Drift over time: Categories change as the product evolves, so old and new tags stop aligning

- Recency bias: The newest complaints feel larger than long-running patterns

AI-driven NLP becomes practical, not theoretical, at this stage. The value is not just speed. It is consistency.

According to Chameleon’s guide on measuring product experience success, effective feedback analysis includes automated ingestion, categorization by topic, sentiment, and intent, plus emotion mapping and trend lines from longitudinal sentiment data. That is the difference between a comments archive and a usable operating system.

What a strong classification workflow looks like

A mature setup usually follows this sequence:

- Ingest raw text from support, reviews, surveys, and conversations.

- Normalize the language so shorthand, duplicates, and messy phrasing do not splinter themes.

- Apply structured labels for type, theme, sentiment, and intent.

- Attach account and behavioral context from CRM and product analytics.

- Review edge cases where the model is uncertain or the issue is strategically sensitive.
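The normalization step can be illustrated with a deliberately simple keyword approach. This is a stand-in for the NLP classification described above, not a substitute for it: the alias table, the function names, and the sample comments are assumptions, and a production system would likely use a trained model or embedding-based clustering instead.

```python
import re

# Hypothetical alias table: different teams' labels map to one canonical theme
THEME_ALIASES = {
    "reporting": "reporting",
    "analytics": "reporting",
    "export": "reporting",
    "sfdc": "salesforce integration",
    "salesforce": "salesforce integration",
}


def normalize_text(text):
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return re.sub(r"\s+", " ", cleaned).strip()


def canonical_themes(text):
    """Map any known alias in the text to its canonical theme."""
    tokens = normalize_text(text).split()
    return sorted({THEME_ALIASES[t] for t in tokens if t in THEME_ALIASES})


def dedupe(comments):
    """Drop comments that are identical after normalization, keeping first seen."""
    seen = set()
    unique = []
    for comment in comments:
        key = normalize_text(comment)
        if key not in seen:
            seen.add(key)
            unique.append(comment)
    return unique


themes = canonical_themes("SFDC export is broken!!")
unique_comments = dedupe(["Export fails.", "export   fails", "Sync is slow"])
```

Even this crude version removes the "reporting" versus "analytics" versus "export" split, which is the failure mode that makes manually tagged themes hard to count.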

If your team needs a grounded primer on turning unstructured comments into a usable analysis process, this piece on what is qualitative data analysis is a helpful reference.

Tip: Treat positive feedback as data, not decoration. It shows what users value enough to mention, which is often where expansion and positioning opportunities hide.

Voting systems are easy and misleading

Feature voting boards feel democratic, but they favor vocal users and obvious asks. They are weak at detecting hidden workflow failures, especially when users do not know the right solution to request.

Structured analysis gives you something better. It lets you find repeated “why is this so hard?” signals that point to an adoption blocker, even when no one writes a polished feature request. Often, that is where the work with the greatest impact lies.

Finding the Signal in Customer Feedback Noise

The hard part is not finding patterns. The hard part is deciding which patterns deserve roadmap attention before they become a retention or revenue problem.

Teams usually fail here in one of two ways. They either overreact to anecdotes, or they reduce everything to volume. Both create blind spots. The loudest issue is not always the biggest issue. The most-mentioned request is not always the most valuable one to build.

Look for convergence, not just frequency

A useful signal appears in more than one place.

If support tickets mention confusion during setup, sales calls mention implementation friction, and review sites mention time-to-value concerns, that is not three separate issues. It is one cross-channel pattern with different language attached.

Strong analysis asks questions like these:

- Are multiple teams hearing the same problem in different words?

- Does the issue appear in one segment or across several?

- Is sentiment deteriorating around a product area over time?

- Did a release change the volume or tone of comments?
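The first of those questions, whether several channels report the same theme, can be sketched as a count of distinct sources per theme rather than a count of mentions. The function name `converging_themes`, the two-channel threshold, and the sample data are assumptions for illustration.

```python
from collections import defaultdict


def converging_themes(mentions, min_channels=2):
    """Return themes that appear in at least `min_channels` distinct channels.

    `mentions` is an iterable of (theme, channel) pairs.
    """
    channels_by_theme = defaultdict(set)
    for theme, channel in mentions:
        channels_by_theme[theme].add(channel)
    return {
        theme: sorted(channels)
        for theme, channels in channels_by_theme.items()
        if len(channels) >= min_channels
    }


# Hypothetical mentions: "dark mode" is louder, "setup friction" is broader
mentions = [
    ("setup friction", "zendesk"),
    ("setup friction", "gong"),
    ("setup friction", "g2"),
    ("dark mode", "zendesk"),
    ("dark mode", "zendesk"),
    ("dark mode", "zendesk"),
    ("dark mode", "zendesk"),
]

converged = converging_themes(mentions)
```

In this sample, "dark mode" has more raw mentions but comes from a single channel, so only "setup friction" is returned. That is the difference between frequency and convergence.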

Trend analysis matters at this point. Chameleon notes that effective feedback analysis includes trend lines from longitudinal sentiment data, and that AI can process thousands of reviews in minutes to turn qualitative input into quantitative benchmarks. In practice, that means you stop looking at feedback as isolated comments and start reading it as movement.

Separate one-off asks from systemic friction

A good filter is to classify requests by pattern shape.

| Pattern | What it often means | What to do |
|---|---|---|
| A few detailed requests from ideal customers | Legitimate strategic demand | Validate against roadmap and revenue context |
| Many vague complaints around one workflow | Usability or onboarding issue | Investigate the journey, not just the text |
| Repeated public criticism with internal support echoes | Trust or reliability problem | Escalate quickly and track post-fix sentiment |
| Frequent requests from low-fit users | Misaligned ICP or messaging problem | Do not let this dominate roadmap decisions |

A systemic issue usually shows up as convergence between words and behavior. Users say something is confusing, then fail to activate. Users complain about reliability, then usage drops. Users ask for an integration, and the same requirement appears in lost deals.

Behavioral correlation exposes silent suffering

This is the piece many teams skip because it is hard manually.

Comments tell you what some users can articulate. Behavior tells you what many users are experiencing even if they never write it down. If you want to get better at this discipline, this guide on how to analyze qualitative data is useful because it reinforces the need to move past surface-level theme counting.

When product teams correlate language with product usage, a different kind of signal appears:

- Accounts mentioning setup friction often show lower activation depth

- Users praising a capability may also have stronger adoption in related workflows

- Complaints about “complexity” can cluster around one step in a multi-step journey

- Requests for “better reporting” might map to discoverability or permissions issues

That is why raw volume is such a poor prioritization method. It ignores account quality, lifecycle stage, and actual downstream behavior.
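One simple way to start correlating language with behavior is to compare a usage metric between accounts that mention a theme and accounts that do not. The sketch below assumes a hypothetical per-account activation score between 0 and 1. It is a descriptive comparison only: a gap suggests where to investigate and does not establish that the issue caused the lower activation.

```python
from statistics import mean


def activation_gap(activation_by_account, accounts_with_theme):
    """Mean activation of accounts mentioning a theme minus the mean of the rest.

    Returns None when either group is empty, since no comparison is possible.
    """
    with_theme = [
        score for account, score in activation_by_account.items()
        if account in accounts_with_theme
    ]
    without_theme = [
        score for account, score in activation_by_account.items()
        if account not in accounts_with_theme
    ]
    if not with_theme or not without_theme:
        return None
    return mean(with_theme) - mean(without_theme)


# Hypothetical activation scores and the accounts that mentioned setup friction
activation = {"acme": 0.25, "globex": 0.5, "initech": 0.75, "umbrella": 1.0}
gap = activation_gap(activation, {"acme", "globex"})
```

A negative gap means the accounts talking about the theme are activating less than the rest, which is the pattern described in the first bullet above.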

Key takeaway: The strongest signal is not “most requested.” It is “most consistently linked to important customer behavior.”

Practical review cadence

This work needs a rhythm or it becomes another dashboard no one trusts.

Use a cadence like this:

- Daily: Scan new critical patterns and fast-moving defects

- Weekly: Review theme shifts, segment-level changes, and new clusters

- Monthly: Re-rank recurring issues using product, success, and revenue data

- Post-release: Watch whether sentiment and behavior move in the expected direction

Once that cadence is in place, feedback about product stops being noisy commentary. It becomes evidence you can operationalize.

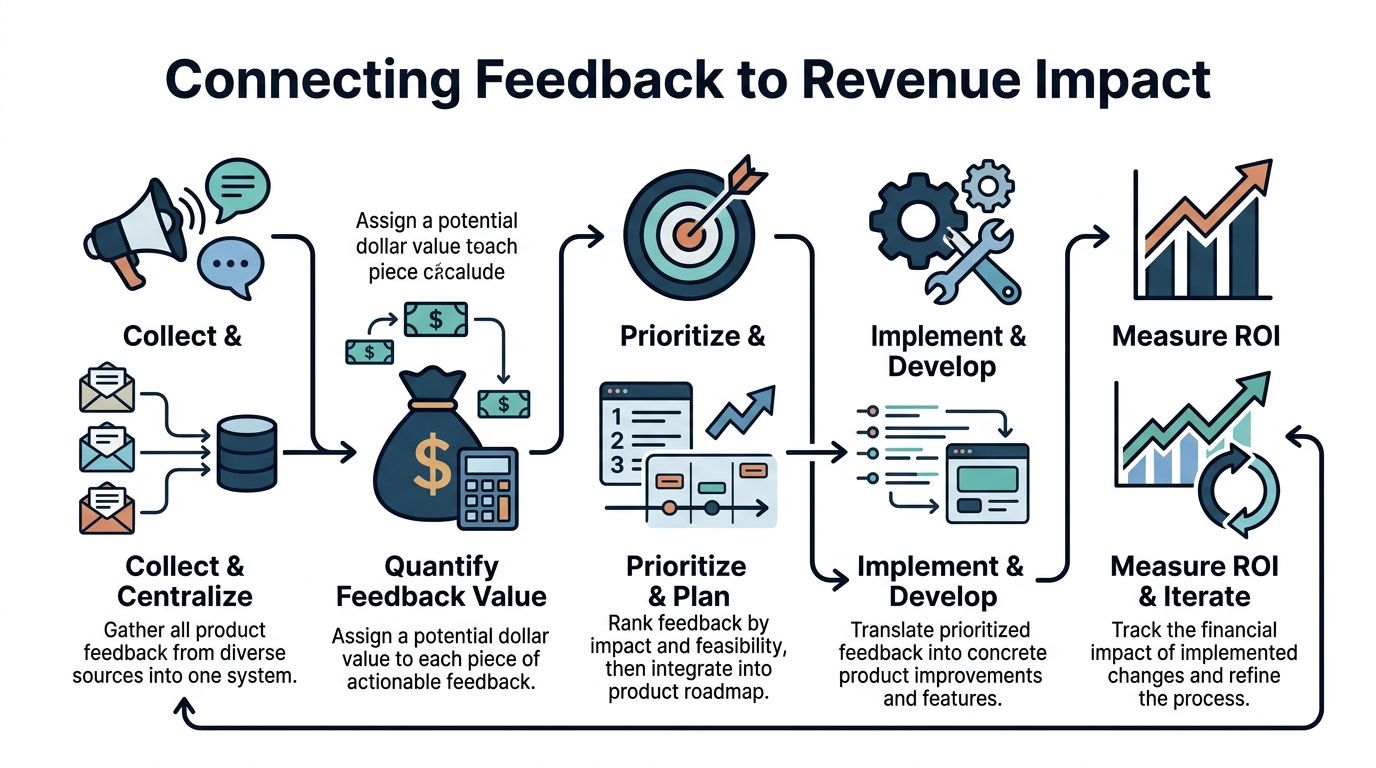

Connecting Feedback to Concrete Revenue Impact

Many teams stop too early. They collect feedback, tag it, maybe cluster it, and then prioritize from intuition anyway.

The useful leap is to connect each meaningful pattern to commercial exposure. That means asking a harder question than “what are customers asking for?” Ask “what revenue is affected if we ignore this?”

Build the commercial join

To do this well, you need one joined record that combines:

- feedback text and tags

- account metadata from the CRM

- product usage data

- lifecycle stage and renewal context

- expansion opportunity context

- issue status in Jira or Linear

This is the missing layer in many stacks. The feedback system knows what users said. The CRM knows which accounts matter commercially. Product analytics knows what users did. Roadmap tools know what is being built. If these remain disconnected, priority remains subjective.

This correlation problem is an underserved part of the space. Userlens highlights the challenge of linking qualitative feedback with quantitative behavior to quantify revenue impact, and notes that a 2024 survey found 89% of top sellers adopted AI tools for research. The direction is clear: teams want automation because manual joins do not hold up at real operating speed.

A practical scoring model

You do not need a perfect financial model to get much better than gut feel. Start with a weighted score that uses a few concrete inputs.

| Input | Example interpretation |
|---|---|
| Account value | Higher-value accounts increase urgency |
| Customer stage | Renewal and expansion windows raise commercial stakes |
| Theme recurrence | Repeated mentions across channels indicate systemic risk or demand |
| Behavioral severity | The stronger the usage drop or workflow failure, the stronger the signal |
| Strategic fit | Some requests align tightly with target market direction, others do not |

This creates a revenue impact score, even if the first version is directional rather than exact.

A bug reported by one trial user with no activation may matter less than a workflow issue mentioned by multiple enterprise accounts during implementation. A request that appears in open opportunities may matter more than a heavily upvoted request from low-fit users. That is the point. Scoring should force context into the decision.
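A directional version of that score might look like the sketch below. The weights, the 0 to 1 normalization of each input, the 0 to 100 output scale, and the two sample issues are all assumptions made for illustration. Each team would need to choose and calibrate its own.

```python
# Hypothetical weights for the five inputs; they sum to 1.0
WEIGHTS = {
    "account_value": 0.30,
    "customer_stage": 0.20,
    "theme_recurrence": 0.20,
    "behavioral_severity": 0.20,
    "strategic_fit": 0.10,
}


def revenue_impact_score(inputs):
    """Weighted sum of inputs (each normalized to 0..1), scaled to 0..100."""
    missing = set(WEIGHTS) - set(inputs)
    if missing:
        raise ValueError(f"missing inputs: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= inputs[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    total = sum(WEIGHTS[name] * inputs[name] for name in WEIGHTS)
    return round(100 * total, 1)


# Hypothetical issues mirroring the comparison in the text
trial_bug = {
    "account_value": 0.1, "customer_stage": 0.2, "theme_recurrence": 0.1,
    "behavioral_severity": 0.3, "strategic_fit": 0.5,
}
enterprise_workflow_issue = {
    "account_value": 0.9, "customer_stage": 0.8, "theme_recurrence": 0.7,
    "behavioral_severity": 0.8, "strategic_fit": 0.9,
}

trial_score = revenue_impact_score(trial_bug)
enterprise_score = revenue_impact_score(enterprise_workflow_issue)
```

The exact numbers matter less than the ordering. A linear weighted sum is easy to explain in a roadmap review, which is usually worth more than a more sophisticated model nobody trusts.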

Example workflow from feedback to development

Here is a realistic pattern.

An enterprise admin opens an Intercom chat because the Salesforce sync appears incomplete. Support tags it as an integration issue. The same week, sales call notes mention concerns about Salesforce field mapping in two late-stage opportunities. Review data also shows recurring frustration around setup complexity in integrations.

A joined system should do the following automatically:

- Cluster these mentions under one theme.

- Attach the affected accounts and open pipeline context.

- Check whether accounts with this issue show weaker activation in the integration workflow.

- Generate a priority score.

- Create a Jira or Linear ticket with the evidence attached.

That is where a platform like SigOS, with its customer feedback analysis workflows, fits in. The practical value is not generic “AI insights.” It is the operational step of connecting support tickets, conversations, and behavior into a revenue-scored issue that engineering can act on.

What to include in a revenue-scored ticket

A product ticket should not just say “customers want X.” It should include:

- Theme summary: Clear description of the underlying issue

- Affected cohort: Which segment or account type is involved

- Supporting evidence: Representative comments, not dozens of screenshots

- Behavioral signal: What users do differently when this issue appears

- Commercial context: Renewal, churn risk, expansion block, or deal friction

- Recommended action: Bug fix, UX improvement, messaging change, or feature work
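The ticket fields listed above can be captured as a small structure that renders a plain-text description. This sketch does not call the Jira or Linear APIs. The class name, the `max_quotes` cap, and the sample content are assumptions for illustration, and the rendered text would be passed to whichever issue tracker integration the team already uses.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RevenueScoredTicket:
    theme_summary: str
    affected_cohort: str
    supporting_evidence: List[str]
    behavioral_signal: str
    commercial_context: str
    recommended_action: str

    def to_description(self, max_quotes=3):
        """Render a plain-text ticket body with a capped number of quotes."""
        quotes = self.supporting_evidence[:max_quotes]
        lines = [
            f"Theme summary: {self.theme_summary}",
            f"Affected cohort: {self.affected_cohort}",
            "Supporting evidence:",
            *[f'  - "{quote}"' for quote in quotes],
            f"Behavioral signal: {self.behavioral_signal}",
            f"Commercial context: {self.commercial_context}",
            f"Recommended action: {self.recommended_action}",
        ]
        return "\n".join(lines)


# Hypothetical ticket based on the Salesforce sync scenario
ticket = RevenueScoredTicket(
    theme_summary="Salesforce sync appears incomplete after field mapping",
    affected_cohort="Enterprise accounts in implementation",
    supporting_evidence=[
        "The Salesforce sync looks incomplete.",
        "Field mapping is a concern for our rollout.",
        "Integration setup was more complex than expected.",
        "We could not tell whether the sync had finished.",
    ],
    behavioral_signal="Weaker activation in the integration workflow",
    commercial_context="Mentioned in two late-stage opportunities",
    recommended_action="Bug fix plus clearer sync status in the UI",
)

description = ticket.to_description()
```

Capping the quotes keeps the ticket readable and matches the guidance to attach representative comments instead of dozens of screenshots.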

Tip: Some high-value feedback should not become a feature ticket. It may belong to onboarding, docs, implementation, or pricing.

Why this changes roadmap quality

Once product leaders can quantify impact, roadmap conversations improve immediately.

Sales gets a way to distinguish a strategic request from a one-off concession. Success can point to recurring friction that affects retention. Engineering sees why a fix matters beyond “support said it was urgent.” Finance and leadership can understand why some small workflow issues outrank larger-looking feature requests.

This is the point where feedback becomes an economic input, not a listening exercise.

Automating the Feedback-to-Development Workflow

Manual handoffs are where good analysis dies.

A support lead spots a pattern. They send a Slack message. A PM copies the issue into Notion. Someone promises to look at it in planning. By the time engineering sees it, the customer context is stale and the urgency is gone. The company still claims to be customer-centric, but the operating system says otherwise.

The goal of automation is not to remove judgment. It is to remove delay, duplication, and selective memory.

What should happen automatically

A high-functioning workflow usually automates these moments:

- Ingestion: New tickets, chats, review comments, and call notes enter one stream

- Classification: Themes, sentiment, and issue type get tagged consistently

- Enrichment: Account, segment, and behavioral context attach to the record

- Escalation: High-impact patterns trigger alerts to product and success

- Ticket creation: Jira or Linear issues are created with structured evidence

- Follow-through: Post-release sentiment and behavior are monitored

That sequence matters because every manual handoff strips context away. By the time a ticket reaches engineering, it often lacks the original language, the affected segment, and the business reason it matters.

The backlog should reflect market gaps, not just complaints

Automation also helps teams see beyond bug triage.

Negative and three-star reviews are particularly useful because they often contain a more nuanced version of dissatisfaction than one-star anger or five-star praise. According to Convert’s review mining discussion, teams can identify unmet needs and feature gaps by mining negative and three-star reviews, and AI-driven analysis can reveal 20-30% more market voids when combined with keyword tools.

That matters for product strategy. Some recurring feedback points do not indicate a defect. They indicate an underserved use case, a weak integration, or a positioning gap competitors are leaving open.

A workable closed-loop pattern

Use a flow like this inside the business:

- Customer signal appears in support, reviews, or sales conversations.

- The system clusters related mentions instead of creating duplicate noise.

- Product gets one issue, with supporting comments and the affected cohort attached.

- Engineering ships a fix or improvement.

- Success and support get notified so they can close the loop with customers.

- The team watches post-release behavior and sentiment to verify the change worked.
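The last step, verifying that the change worked, can be sketched as a before-and-after comparison of sentiment around the release date. The numeric sentiment scale (-1 negative, 0 neutral, 1 positive), the function name, and the sample data are assumptions. A real check would also look at the behavioral metric and at whether enough mentions exist on each side of the release.

```python
from datetime import date
from statistics import mean


def sentiment_shift(scored_mentions, release_date):
    """Mean sentiment on or after the release minus mean sentiment before it.

    `scored_mentions` is a list of (date, score) pairs.
    Returns None when either side of the release has no mentions.
    """
    before = [score for day, score in scored_mentions if day < release_date]
    after = [score for day, score in scored_mentions if day >= release_date]
    if not before or not after:
        return None
    return mean(after) - mean(before)


# Hypothetical mentions of one theme, scored -1 / 0 / 1
mentions = [
    (date(2024, 5, 2), -1),
    (date(2024, 5, 9), -1),
    (date(2024, 5, 20), 0),
    (date(2024, 6, 5), 0),
    (date(2024, 6, 12), 1),
    (date(2024, 6, 19), 1),
]

shift = sentiment_shift(mentions, release_date=date(2024, 6, 1))
```

A positive shift is consistent with the fix landing well. A flat or negative shift is a prompt to reopen the issue before customers are told it has been resolved.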

Stakeholder buy-in comes from evidence, not evangelism

If you want this system adopted across product, support, sales, and engineering, do not pitch it as a better inbox. Pitch it as a better decision model.

Leaders buy in when they see these changes:

| Team | Old experience | Better experience |
|---|---|---|
| Support | Repeats the same escalation in different channels | Escalates once with durable context |
| Product | Prioritizes from anecdotes and meeting pressure | Prioritizes from patterns and evidence |
| Engineering | Receives vague tickets lacking customer context | Receives scoped issues with rationale |
| Sales and CS | Feels ignored after surfacing customer pain | Sees visible movement and loop closure |

Key takeaway: A feedback-driven culture is not soft discipline. It is an execution advantage because it shortens the path from customer pain to shipped change.

Embedding Feedback into Your Company Culture

Systems break when teams treat feedback as product’s job alone.

The strongest companies make feedback visible across the business, but they do not dump raw noise on everyone. They translate it into useful views for each function. Sales needs to know which objections are increasing. Success needs to know which workflows predict frustration. Marketing needs the phrases customers use when they describe value. Engineering needs clean issue context, not another opinions channel.

Make the roadmap legible

A healthy culture explains why work is being prioritized in language other teams can use.

That means product should regularly share:

- What patterns are rising

- Which segments are most affected

- What the team is building in response

- What changed after release

This closes an internal trust gap. Without it, support and sales keep escalating the same issues because they assume nothing is happening.

Report back to customers when it matters

Closing the loop externally matters too.

A customer who took time to explain a workflow problem should hear when it has been improved. Public reviews that point to a resolved issue often deserve a response. That habit changes how customers view the relationship. It also trains teams to treat feedback as the beginning of a product cycle, not the end of a support interaction.

Keep the culture disciplined

Feedback-driven cultures still need boundaries.

Not every request should shape roadmap direction. Not every complaint reflects the needs of your target market. Teams need a shared standard for what counts as evidence, what gets escalated, and when a pattern is strong enough to justify a roadmap change.

Use recurring forums to keep that standard healthy:

- Weekly review: Product, support, and success inspect emerging themes

- Monthly business review: Revenue and product leaders assess impact and trade-offs

- Post-release check: Teams review whether fixes changed behavior or sentiment

That operating cadence matters more than slogans. When teams see the same evidence and use the same language, feedback about product becomes a company asset instead of a political weapon.

If your team is drowning in comments but still prioritizing from intuition, SigOS is worth evaluating. It analyzes support tickets, chats, sales calls, and usage signals together so product and growth teams can identify which issues correlate with churn, expansion, and revenue impact, then route that evidence into development workflows.